Conversational AI in healthcare: maturity model and 7 use cases

Learn how conversational AI in healthcare is driving high-impact use cases for everything from ambient documentation to mental health support.

Imagine a patient calls their doctor's office at midnight with chest pain. Instead of waiting until morning or heading to the emergency room, they speak with an AI system that understands medical terminology, asks the right follow-up questions, and determines whether this needs immediate attention or can wait for a scheduled appointment.

Years ago, we might have labeled that science fiction, but with healthcare AI spending projected to grow rapidly over the next decade, it's actually happening right now in healthcare systems that have moved beyond basic chatbots to deploy truly intelligent conversational AI.

Most healthcare organizations have experimented with conversational AI, but few have moved beyond basic FAQ bots. The difference between organizations stuck in pilot phases and those seeing real clinical and operational results usually comes down to two things: a deliberate, systematic progression — and speech infrastructure built for clinical accuracy. AssemblyAI's Universal-3 Pro with Medical Mode was purpose-built for this — hitting a 4.9% medical entity error rate (vs. 7.3% for Deepgram) at $0.15/hour, roughly 28× cheaper than Amazon Transcribe Medical.

Below, this guide maps out that progression through a practical maturity model and examines real-life applications where conversational AI is delivering measurable results today.

What is conversational AI's role in healthcare?

Conversational AI in healthcare refers to AI models that enable natural, voice-driven interactions between patients, clinicians, and healthcare systems—replacing rigid phone menus and manual documentation with intelligent, context-aware conversations. Instead of pressing 1 for appointments and 2 for prescriptions, patients simply say "I need to reschedule my cardiology appointment"—and the system understands exactly what they mean.

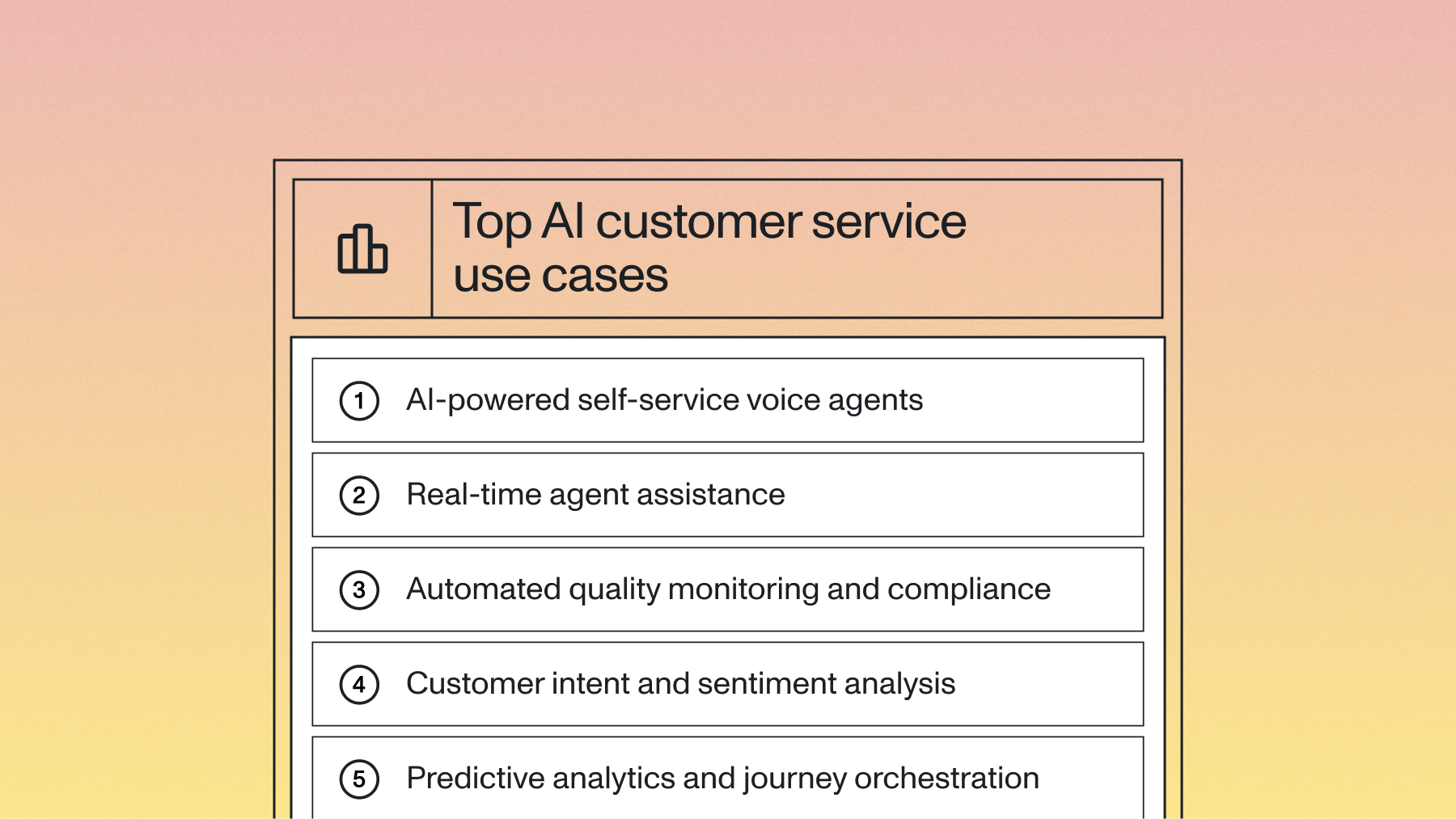

In healthcare specifically, this technology automates three core areas:

- Patient interactions—appointment scheduling, triage, medication reminders, and care follow-up

- Clinical documentation—real-time transcription, ambient note capture, and EHR population

- Administrative workflows—prior authorization, insurance verification, and billing support

Traditional systems force users into rigid menu structures. Conversational AI adapts to natural speech patterns and maintains context throughout complex interactions—while preserving the empathy and clinical nuance that healthcare demands.

Advanced Voice AI vs. basic rule-based systems

The primary difference between advanced Voice AI and basic rule-based systems lies in their ability to handle the unpredictable nature of real-world healthcare conversations.

Traditional healthcare chatbots break down when patients describe symptoms in their own words or when medical discussions involve specialized terminology. Advanced systems powered by medical-grade speech recognition — like Universal-3 Pro with Medical Mode — can accurately capture complex clinical language. That's the difference between a system that understands "atrial fibrillation" versus one that produces gibberish when doctors use proper medical terms.

Speaker diarization solves another critical healthcare challenge: determining who said what in multi-party clinical conversations. Emergency departments and consultation rooms involve multiple voices speaking simultaneously, but advanced systems can attribute each statement correctly to maintain accurate documentation.
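
To make the attribution step concrete, here is a minimal Python sketch of how diarized output might be turned into a speaker-labeled transcript. The `Utterance` shape, the speaker labels "A" and "B", and the `role_map` are illustrative assumptions, not a specific vendor's response format.

```python
from dataclasses import dataclass

@dataclass
class Utterance:
    speaker: str   # diarization label such as "A" or "B" (assumed format)
    start_ms: int  # start time of the utterance in milliseconds
    text: str

def format_transcript(utterances, role_map):
    """Order utterances by time, merge consecutive turns from the same
    speaker, and prefix each turn with a human-readable role."""
    turns = []
    for u in sorted(utterances, key=lambda item: item.start_ms):
        role = role_map.get(u.speaker, f"Speaker {u.speaker}")
        if turns and turns[-1][0] == role:
            # Same speaker is still talking: extend the current turn.
            turns[-1] = (role, turns[-1][1] + " " + u.text)
        else:
            turns.append((role, u.text))
    return [f"{role}: {text}" for role, text in turns]

# Hypothetical sample data for illustration only.
utterances = [
    Utterance("A", 0, "What brings you in today?"),
    Utterance("B", 2100, "I've had palpitations since Monday."),
    Utterance("B", 5200, "They get worse when I climb stairs."),
    Utterance("A", 8000, "Any history of atrial fibrillation?"),
]
lines = format_transcript(utterances, {"A": "Clinician", "B": "Patient"})
print("\n".join(lines))
```

Merging consecutive turns keeps the note readable. In practice, mapping anonymous labels to roles such as clinician or patient would need its own step, which this sketch assumes has already been done.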

LLM integration through platforms like LLM gateway lets these systems move beyond simple transcription to actual clinical understanding. They can summarize patient encounters, extract key medical information, and identify potential care coordination needs.

Business benefits and ROI of conversational AI in healthcare

Voice AI isn't just a patient experience upgrade; it's a fundamental shift in healthcare economics. Administrative waste is a major driver of unnecessary healthcare spending (one 2019 analysis estimated it at up to 15% of national health spending), and conversational AI targets it directly by automating documentation, routing, and patient communication at scale.

The ROI shows up in four ways:

- Time recovered: Clinicians using ambient documentation tools save hours of manual data entry per week—time that goes back to patient care

- Reduced burnout: When documentation is automated, job satisfaction improves and costly staff turnover drops. In one case study, a behavioral health AI scribe reduced clinicians' documentation time by 90%

- Lower call center overhead: Voice agents handling routine inquiries reduce inbound call volume, freeing staff for complex patient needs

- Dramatically lower infrastructure cost: Medical-grade speech infrastructure priced at $0.15/hour (Universal-3 Pro with Medical Mode) makes it economically viable to transcribe every clinical encounter — a ~28× cost reduction vs. legacy medical transcription APIs at $4.15/hour.
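
The cost comparison in the last bullet can be checked with a few lines of Python. The two hourly rates come from the text; the encounter volume and average length are hypothetical numbers chosen only to show the calculation.

```python
MEDICAL_MODE_PER_HOUR = 0.15  # USD per audio hour, from the text
LEGACY_PER_HOUR = 4.15        # USD per audio hour, from the text

def monthly_cost(rate_per_hour, encounters_per_month, avg_minutes):
    """Transcription cost for a month of encounters at a flat hourly rate."""
    audio_hours = encounters_per_month * avg_minutes / 60
    return rate_per_hour * audio_hours

ratio = LEGACY_PER_HOUR / MEDICAL_MODE_PER_HOUR

# Hypothetical volume: 10,000 encounters a month, 15 minutes each.
new_cost = monthly_cost(MEDICAL_MODE_PER_HOUR, 10_000, 15)
legacy_cost = monthly_cost(LEGACY_PER_HOUR, 10_000, 15)

print(f"Cost ratio: {ratio:.1f}x")
print(f"Monthly cost at $0.15/hour: ${new_cost:,.2f}")
print(f"Monthly cost at $4.15/hour: ${legacy_cost:,.2f}")
```

The ratio works out to about 27.7, which is where the rounded "~28×" figure comes from. At the assumed volume of 2,500 audio hours a month, that is the difference between roughly $375 and roughly $10,375.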

Healthcare organizations are increasingly building ambient clinical documentation workflows on top of advanced speech understanding models, cutting documentation burden without changing how clinicians communicate with patients.

A Voice AI maturity model for conversational AI

Healthcare organizations don't all start from the same place when implementing conversational AI. A rural clinic with basic EHR systems has different challenges than a major academic medical center with dedicated IT teams. That's why we've developed a maturity model that recognizes these differences and provides a simple progression path for any healthcare organization.

Stage 1: Foundational Exploration

Advanced speech technology: Basic speech-to-text

Common applications: Simple voice commands for non-critical systems, isolated medical dictation pilots with general vocabulary.

Stage 2: Integrated & Governed Implementation

Advanced speech technology: Speech-to-text with improved medical vocabulary recognition; Basic Text-to-Speech (TTS) for scripted outputs; Basic Natural Language Understanding (NLU) for intent capture.

Common applications: Integrated clinical dictation directly into EHRs; Voice-based data entry for structured forms (e.g., patient intake); Scripted patient reminders and notifications via voice.

Stage 3: Optimized & Proactive Assistance

Advanced speech technology: High-accuracy speech-to-text for specialized medical terminology & diverse accents (e.g., Universal-3 Pro with Medical Mode); Real-time speaker diarization; Context-aware dialogue management; Natural sounding TTS; Initial secure LLM integration (for Q&A, summarization with human oversight)

Common applications: Ambient clinical documentation (transcribing and attributing speech in multi-party consultations); Voice-based clinical decision support tools; Proactive patient outreach with more personalized and natural-sounding dialogue.

Stage 4: Transformative & Empathetic Engagement

Advanced speech technology: Advanced, adaptive speech-to-text/NLU capable of understanding nuance and sentiment; Empathetic TTS & Speech Synthesis (conveying emotional nuance); Smart and ethically governed LLM integration (for complex reasoning, generation, and nuanced interaction); Voice biometrics for seamless and secure authentication

Common applications: Empathetic virtual health assistants for chronic disease management or mental wellness support; Real-time diagnostic assistance with conversational interaction; Hyper-personalized patient education and engagement.

7 important use cases for conversational AI in healthcare

1. Ambient clinical documentation

Walk into a doctor's office during a typical patient consultation, and you'll notice something different: the doctor is actually looking at the patient instead of typing frantically into a computer. That's because an ambient clinical documentation system is quietly listening to their conversation, automatically capturing the medical discussion and turning it into structured clinical notes.

The problem: Physicians spend excessive time on documentation instead of patient care, with manual note-taking interrupting consultations and reducing care quality.

The solution: Real-time transcription with speaker identification and AI summarization automatically generates structured clinical notes from natural doctor-patient conversations.

Ambient clinical documentation changes this completely. Speaker diarization knows whether the doctor, nurse, or patient is speaking—even in busy clinical environments. AI-powered summarization extracts symptoms, diagnoses, and treatment plans, then generates structured notes that integrate directly with EHR systems.

2. Intelligent patient triage

Emergency departments face a never-ending challenge: distinguishing between true emergencies and cases that could be handled in urgent care or primary care settings. Traditional triage relies heavily on nursing staff who may be stretched thin during peak hours, leading to inconsistent assessments and inappropriate patient routing.

The problem: Emergency departments are overwhelmed with non-urgent cases while inconsistent triage protocols and limited nursing staff create bottlenecks in patient assessment.

The solution: Voice-based symptom collection with medical terminology understanding and AI-powered severity assessment automatically routes patients to appropriate care levels.

Intelligent patient triage systems change this dynamic. These AI-powered platforms conduct advanced symptom interviews, use clinical decision support algorithms to evaluate severity levels, and automatically route patients to the most appropriate care setting.
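
As a rough illustration of the routing step, the sketch below maps collected symptoms and a severity score to a care setting. Everything in it is an assumption made for the example: the red-flag phrases, the 0 to 10 severity scale, and the thresholds are placeholders, not a validated clinical protocol.

```python
from enum import Enum

class CareSetting(Enum):
    EMERGENCY = "emergency department"
    URGENT_CARE = "urgent care"
    PRIMARY_CARE = "primary care appointment"

# Placeholder phrases for illustration only; a real system would rely on a
# clinically validated protocol maintained by medical staff.
RED_FLAGS = {"chest pain", "shortness of breath", "fainting"}

def route(symptoms, severity_score):
    """Route on red-flag symptoms first, then on an assumed 0-10 severity score."""
    normalized = {s.strip().lower() for s in symptoms}
    if normalized & RED_FLAGS or severity_score >= 8:
        return CareSetting.EMERGENCY
    if severity_score >= 4:
        return CareSetting.URGENT_CARE
    return CareSetting.PRIMARY_CARE

print(route(["Chest pain"], 2).value)
print(route(["sore throat"], 5).value)
print(route(["mild rash"], 1).value)
```

Checking red flags before the score means a low self-reported severity cannot downgrade a symptom that should always escalate. A production system would also keep a clinician in the loop for borderline cases.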

3. Medication management

Medication adherence remains one of healthcare's most persistent challenges. Studies show that up to 50% of patients don't take medications as prescribed—leading to treatment failures, disease progression, and expensive hospital readmissions.

The challenge: Poor medication adherence leads to treatment failures and readmissions, while manual medication reconciliation processes are time-consuming and error-prone.

The AI approach: Voice-activated reminders, conversational adherence monitoring, and automated drug interaction checking create comprehensive medication management support.

Voice-activated reminder systems adapt to individual patient schedules and preferences. These systems have meaningful conversations about how patients are feeling, tracking both compliance and potential side effects or concerns.

4. Mental health support and crisis detection

The supply of mental health providers can't keep up with demand, and recent data shows 160 million Americans live in areas with a shortage of mental health professionals. Crisis situations develop rapidly, but traditional support systems rely on patients to explicitly ask for help, which they often don't.

The gap: Mental health provider shortages limit care access while crisis situations often go undetected until it's too late.

The AI solution: Empathetic conversational support with real-time sentiment analysis and voice pattern monitoring automatically escalates high-risk situations.

Advanced conversational AI provides always-available mental health support that detects crisis signals in real-time. These systems analyze voice patterns, speech cadence, and emotional markers to identify distress—even when a patient hasn't explicitly stated they're struggling.

5. Telehealth upgrades

Telehealth adoption exploded during the pandemic, and while it's still not a perfect alternative, recent HHS data shows that about 22% of adults continue to use telehealth services. Many virtual consultations lack the comprehensive documentation that in-person visits generate, creating gaps in patient records.

The limitations: Telehealth consultations often lack comprehensive documentation while technical barriers prevent many patients from accessing virtual care effectively.

The enhancement: Real-time transcription with automated summarization and voice-based portal access removes technical barriers while ensuring quality documentation and compliance monitoring.

Conversational AI transforms telehealth from a basic video call into a comprehensive clinical encounter. Real-time transcription captures the entire consultation, AI summarization extracts important clinical information for structured documentation, and voice-based patient portals eliminate technology barriers.

6. Insurance and prior authorization

Prior authorization has become healthcare's administrative nightmare. These processes delay patient care by days or weeks while creating massive administrative overhead for providers, and one analysis shows that manual transactions cost nearly double that of electronic ones.

The bottleneck: Prior authorization delays critical patient care while manual claims processing creates errors, denials, and confusion about coverage benefits.

The streamlined approach: Automated prior authorization generation from clinical conversations and voice-based claims processing streamline administrative workflows.

Conversational AI reduces complexity by automating the entire prior authorization workflow. The system listens to clinical conversations, extracts relevant medical justifications, and generates prior authorization requests with proper documentation.

7. Patient education and care instructions

Research shows that patients forget up to 80% of the medical information shared during visits almost immediately, and discharge instructions are frequently misunderstood. Language barriers make these problems worse, leaving non-English speakers at risk for poorer outcomes.

The problem: Patients frequently misunderstand discharge instructions and medical information, leading to readmissions and poor outcomes.

The solution: Conversational delivery with interactive Q&A and multi-language support helps patients understand their care instructions.

Conversational AI turns patient education from one-way information delivery into interactive learning experiences. Patients can ask follow-up questions, request clarification, and receive repeated explanations at their own pace.

Implementation strategy for healthcare conversational AI

Deploying Voice AI in a clinical setting requires more than just an API key. It requires a deliberate implementation strategy that accounts for healthcare's unique regulatory requirements and workflow complexities.

Start with a focused, high-impact use case

Don't try to automate the entire patient journey on day one. Pick a specific bottleneck—like post-discharge follow-up calls or ambient clinical documentation—and solve it completely before expanding.

Organizations that succeed typically start with use cases that have:

- Clear success metrics

- Limited integration complexity

- High staff pain points

- Measurable patient impact

Focus on EHR integration

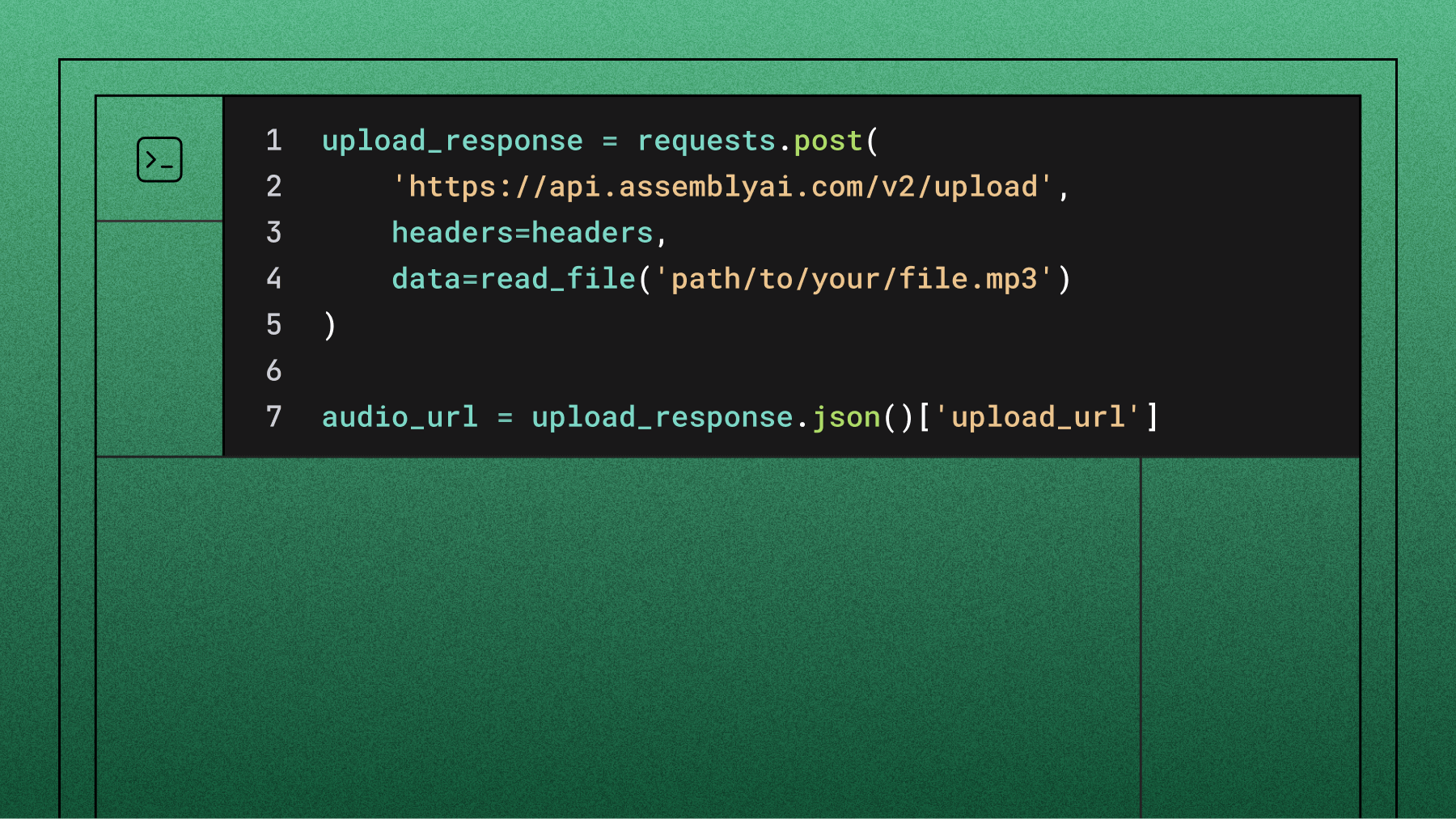

Voice AI must connect seamlessly with your existing EHR systems. Developers register custom tools via JSON Schema, allowing the voice agent to extract relevant medical entities—symptoms, medications, procedure codes—and push structured data directly into the patient record without manual re-entry.
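
A minimal sketch of what such a tool definition and its handler might look like in Python follows. The tool name, field names, and the shape of the registration dictionary are hypothetical and are not taken from any specific voice agent API. The validator covers only a small subset of JSON Schema (required keys and top-level types).

```python
import json

# Hypothetical tool definition; the "parameters" value is a JSON Schema object.
RECORD_ENTITIES_TOOL = {
    "name": "record_encounter_entities",  # assumed name, for illustration
    "description": "Save structured entities from a visit to the patient record.",
    "parameters": {
        "type": "object",
        "properties": {
            "patient_id": {"type": "string"},
            "symptoms": {"type": "array", "items": {"type": "string"}},
            "medications": {"type": "array", "items": {"type": "string"}},
            "procedure_codes": {"type": "array", "items": {"type": "string"}},
        },
        "required": ["patient_id", "symptoms"],
    },
}

_JSON_TYPES = {"string": str, "array": list, "object": dict}

def validate_args(args, schema):
    """Check required keys and top-level types; not a full JSON Schema validator."""
    errors = []
    for key in schema.get("required", []):
        if key not in args:
            errors.append(f"missing required field: {key}")
    for key, value in args.items():
        spec = schema["properties"].get(key)
        if spec is None:
            errors.append(f"unexpected field: {key}")
        elif not isinstance(value, _JSON_TYPES[spec["type"]]):
            errors.append(f"wrong type for {key}: expected {spec['type']}")
    return errors

def handle_tool_call(raw_arguments):
    """Parse the agent's JSON arguments and validate them before any EHR write."""
    args = json.loads(raw_arguments)
    errors = validate_args(args, RECORD_ENTITIES_TOOL["parameters"])
    if errors:
        return {"status": "rejected", "errors": errors}
    # A real integration would call the EHR's API here; this sketch only echoes.
    return {"status": "accepted", "record": args}

result = handle_tool_call(json.dumps({
    "patient_id": "example-123",
    "symptoms": ["palpitations"],
    "medications": ["metoprolol"],
}))
print(result["status"])
```

Validating arguments before they reach the patient record matters because the values originate from model output. A malformed call is rejected with a list of errors instead of being written.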

Prioritize clinical staff adoption

Technology that disrupts existing workflows will fail. The goal is invisible infrastructure: AI models that run quietly in the background, capturing source-of-truth data without requiring doctors to change how they communicate.

Successful implementations follow three principles:

- Involve clinical champions early—frontline buy-in drives adoption faster than any top-down mandate

- Provide adequate training—even simple tools need contextual onboarding for clinical staff

- Iterate on feedback—the best implementations improve continuously based on real workflow observations

How AssemblyAI powers conversational AI in healthcare

Healthcare conversational AI is only as good as its foundation: the speech recognition that captures medical conversations accurately. Here's what AssemblyAI brings to clinical environments:

Universal-3 Pro with Medical Mode — purpose-built for clinical speech: Universal-3 Pro is fully promptable and context-aware, and it's ranked #1 on the Hugging Face Open ASR Leaderboard. Medical Mode — available across async and streaming — delivers a 4.9% medical entity error rate, compared to 7.3% for Deepgram, accurately capturing drug names, diagnoses, procedure codes, and domain-specific terminology that general speech recognition models consistently miss. At $0.15 per hour, it's approximately 28× more cost-effective than legacy medical transcription APIs (Amazon Transcribe Medical at ~$4.15/hour), making it economically viable to transcribe every clinical encounter across an organization.

Advanced Speech Understanding: Speaker diarization accurately identifies who's speaking in multi-party clinical conversations — doctor, nurse, patient, family member. Sentiment analysis captures emotional context in patient interactions, while medical entity detection automatically identifies symptoms, diagnoses, medications, and procedures from natural speech.

LLM gateway for healthcare: The LLM gateway framework provides secure integration with advanced language models for medical summarization, clinical Q&A, and care plan generation — without having to stitch together multiple vendors.

Voice Agent API for clinical workflows: Our Voice Agent API (generally available as of April 2026) combines Universal-3 Pro speech understanding with LLM reasoning, TTS voice generation, turn detection, and interruption handling behind a single WebSocket — at a flat $4.50/hour. For developer teams building clinical intake, triage, or follow-up voice agents from scratch, it removes the complexity of stitching together STT, LLM, and TTS providers. Enterprise healthcare organizations that need a fully managed platform with EHR integrations and dedicated support should talk to our sales team for a tailored deployment.

Built for regulated healthcare environments: AssemblyAI is designed to support healthcare organizations operating under strict compliance and data-handling requirements. Talk to our team to discuss your specific compliance needs.

Build conversational AI solutions that work

Conversational AI has moved from experimental technology to essential healthcare infrastructure. These applications show real value across clinical documentation, patient engagement, and administrative workflows.

Generic speech recognition can't handle medical terminology complexity, multi-speaker clinical environments, or the nuanced communication requirements that healthcare demands. The difference between transformational outcomes and disappointing pilots comes down to choosing specialized platforms with proven medical expertise — like Universal-3 Pro with Medical Mode.

See the difference for yourself with AssemblyAI. Get started with free credits to test Universal-3 Pro's medical terminology accuracy and explore what's possible. For a deeper dive on clinical voice AI, check out recordings and materials from our Medical AI meetup in San Francisco (April 2026).

Frequently asked questions about conversational AI in healthcare

What is AssemblyAI's Medical Mode?

Medical Mode is a specialized configuration of Universal-3 Pro — available for both async and streaming transcription — that's tuned for clinical audio. It delivers a 4.9% medical entity error rate (compared to 7.3% for Deepgram) on drug names, diagnoses, procedure codes, and multi-speaker doctor-patient diarization, and it's priced at $0.15 per hour — roughly 28× more cost-effective than Amazon Transcribe Medical. It launched in March 2026 and is purpose-built as a foundation for clinical voice AI applications.

What is the typical ROI timeline for conversational AI in healthcare?

Most healthcare organizations see positive ROI within six to nine months, with the fastest returns coming from administrative automation like voice-based patient intake and clinical documentation—which reduce staff overhead almost immediately.

How does conversational AI integrate with existing EHR systems?

Modern Voice AI integrates with EHRs through secure APIs and tool calling—developers configure voice agents to extract medical entities like symptoms or procedure codes and push structured data directly into the EHR using standard JSON parameters.

What are the compliance requirements for healthcare conversational AI?

Healthcare conversational AI must meet a range of data privacy, security, and patient-protection requirements that vary by region and use case. Organizations evaluating speech infrastructure for clinical use should review their specific regulatory obligations and discuss compliance needs directly with any vendor under consideration.

What's the implementation timeline from pilot to full deployment?

A focused pilot typically deploys in four to six weeks, with full health system deployment taking three to six months depending on EHR integration complexity and the number of clinical workflows being automated.

How do different healthcare settings apply conversational AI differently?

Acute care hospitals typically prioritize ambient documentation and intelligent triage, while outpatient clinics focus on scheduling and medication reminders—and telehealth providers use real-time transcription and voice-based portal access to improve documentation quality and reduce technical barriers for patients.
