Do I need a custom speech recognition model?

Custom means better, right? In many fields, often. But as AI adoption accelerates (a recent industry analysis found that the average number of AI capabilities in an organization has doubled in the past five years), the assumptions around speech recognition models are changing. The idea that custom is superior no longer holds up for speech recognition; in fact, it's hardly ever the case.

In the field of Automatic Speech Recognition, or ASR, custom models are actually considered an "old school" approach. This is because in the past, classical ASR models had plateaued, so the only option was to try to customize the models in order to increase accuracy.

Today's AI models, like the one that powers our speech-to-text API, are nowhere near plateauing, however. If anything, their accuracy keeps improving.

In this article, we'll explain why that is and address other common misconceptions about custom models.

What is custom speech recognition?

A custom speech recognition model is an AI model trained on a specific, narrow dataset to improve transcription accuracy for a particular domain, environment, or vocabulary. The core idea is that by focusing the model on a niche set of audio—like medical dictations, financial earnings calls, or air traffic control commands—it will outperform a general-purpose model trained on a massive, diverse dataset.

This leads to a common misconception: that "custom" always means "better." While that was often true with older speech recognition technology, it's rarely the case with today's advanced AI models. Modern universal models are trained on vast amounts of varied audio, making them robust and accurate across most use cases right out of the box. For instance, the training data for a single one of our models can include 12.5 million hours of multilingual audio.

So, why do people still consider custom models? Usually, it's because they're dealing with highly specialized terminology or unique audio characteristics they believe a general model can't handle.

Are custom models more accurate than general models?

In the field of ASR, custom models are rarely more accurate than the best general models, as measured by metrics such as Word Error Rate (WER). This is because general models are trained on huge datasets and are constantly maintained and updated using the latest AI research.
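As a rough illustration of how WER is computed, the sketch below counts the word-level substitutions, deletions, and insertions needed to turn a model's transcript into the reference transcript, then divides by the number of reference words. The function name `word_error_rate`, the sample sentences, and the simple lowercase, whitespace-based tokenization are assumptions made for this example; real evaluations usually apply more careful text normalization first.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # (before the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from hypothesis
                curr[j - 1] + 1,     # insertion: extra word in hypothesis
                prev[j - 1] + cost,  # substitution, or a match when cost is 0
            )
        prev = curr
    return prev[-1] / len(ref)


# Hypothetical example: one dropped word ("the") and one wrong word ("sunday").
reference = "please schedule the earnings call for monday"
hypothesis = "please schedule earnings call for sunday"
print(f"WER: {word_error_rate(reference, hypothesis):.2f}")
```

Here the hypothesis contains two errors against a seven-word reference, so the WER is 2/7, or roughly 0.29. Lower is better, and a perfect transcript scores 0.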

For example, at AssemblyAI, we train large AI models on millions of hours of speech data. This training data is a mix of many different types of audio (broadcast TV recordings, phone calls, Zoom meetings, videos, etc.), accents, and speakers. This large, diverse dataset helps our ASR models generalize extremely well across audio types, speakers, recording quality, and accents when converting speech to text in the real world.

Custom models usually come into the mix when dealing with audio data that has unique characteristics unseen by a general model. However, because large, accurate general models see most types of audio data during training, there are not many "unique characteristics" that would trip up a general model, or that a custom model would even be able to learn.

For an example of where a custom model would make sense, let's look at children's speech. The acoustic signal of children's speech is highly distinctive, and it is often excluded from the typical training datasets of general models due to privacy concerns. For this reason, custom models for children's speech often work better than general models, since children's speech contains "unique characteristics" previously unseen by a general model.

Does custom vocabulary require a custom model?

Not necessarily. Most of the time, what you really need is better proper noun recognition for the custom vocabulary unique to your use case or application.

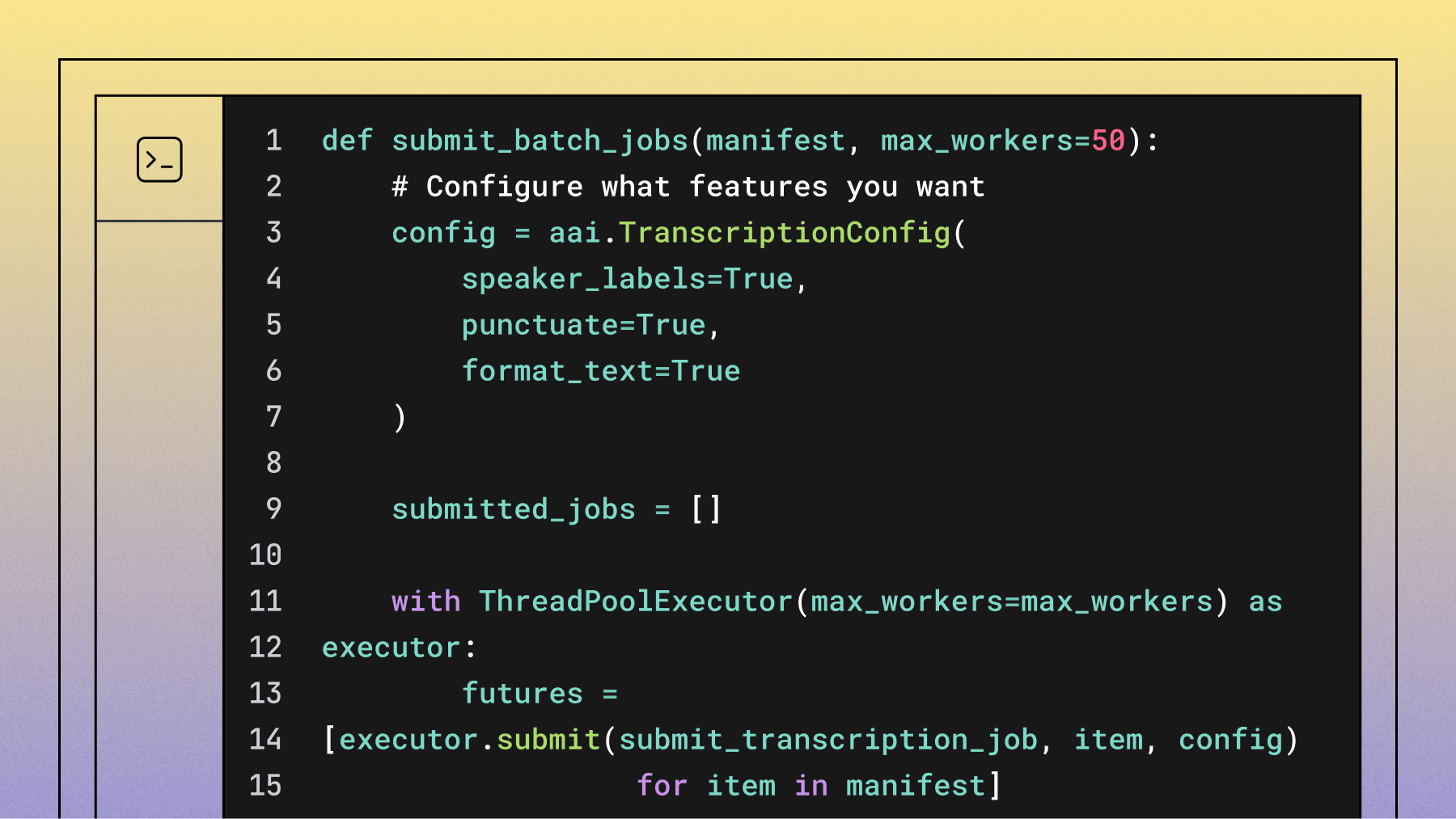

Many modern speech-to-text APIs allow you to dynamically add custom vocabulary to a general model when transcribing audio files. For example, at AssemblyAI, we support custom vocabulary with a feature called Keyterms Prompting. With this feature, you can provide a list of important words or phrases (like industry terms, technical jargon, or proper names) to boost their recognition accuracy during transcription.

The terms you boost can be single words or whole phrases (e.g., "The IQEZ iPhone App").

Let's look at how this works with a brand name like "Budweiser".

Let's say our model predicts "Budweiser" as "Bud wiser". With Keyterms Prompting, you can add "Budweiser" to your list of keyterms to increase the likelihood that the model will accurately predict "Budweiser".
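One way to find candidates for a keyterms list is to compare a transcript against the terms you care about and flag the ones that were not transcribed as written. The sketch below does this with simple case-insensitive matching on whole words or phrases. The helper name `missed_keyterms` and the sample transcript are hypothetical, and the code does not call any AssemblyAI API; it only inspects text you already have.

```python
import re


def missed_keyterms(transcript: str, keyterms: list[str]) -> list[str]:
    """Return the keyterms that do not appear verbatim in the transcript."""
    missed = []
    for term in keyterms:
        # Match the term as a whole word or phrase, ignoring case.
        pattern = r"(?<!\w)" + re.escape(term) + r"(?!\w)"
        if not re.search(pattern, transcript, flags=re.IGNORECASE):
            missed.append(term)
    return missed


# Hypothetical transcript in which the brand name was split into two words.
transcript = "Our new Bud wiser campaign launches on the iPhone app next week."
candidates = missed_keyterms(transcript, ["Budweiser", "iPhone"])
print(candidates)  # ['Budweiser']
```

Terms flagged by a check like this are natural candidates to add to your keyterms list before re-running the transcription.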

What are the alternatives to custom speech recognition models?

Before committing to the significant time and expense of a custom model, explore these powerful alternatives:

- Keyterms Prompting: Add specific terms to improve recognition of brand names, technical jargon, or proper nouns.

- Prompting: Provide contextual information to the model to guide its transcription for specific domains, like medical or legal terminology.

- API customization: Use built-in features to optimize for your specific audio conditions.

Start with a high-performing universal model and use these features to close any remaining accuracy gaps.

Are custom models easier to maintain?

No. In reality, custom models are much more expensive and time-consuming to both train and maintain. A recent report on building voice agents highlights that hidden costs for a custom model (like system integration, training, and MVP development) can quickly multiply, with a basic agent costing over $100,000 to develop. Here's why:

Building a custom model involves significant upfront costs:

- Data sourcing: You need massive amounts of training data specific to your domain

- Data labeling: Human experts must accurately transcribe and annotate your audio

- Low ROI: Custom models rarely outperform modern general models despite this investment

Ongoing maintenance creates additional challenges:

- Vocabulary updates: Models need regular updates for new terms and evolving language

- Research integration: AI advances move quickly, and custom models often get "frozen in time". Keeping up is a major operational challenge; research on AI scaling shows that leading organizations continuously implement new protocols and tooling to reduce the time-to-impact of new ML use cases from over a year to just a few months.

- Architecture improvements: Without continuous updates, any model becomes obsolete

This is why at AssemblyAI, we push model updates frequently, constantly exploring new AI techniques to improve accuracy. For example, our latest Universal-3 Pro model delivers state-of-the-art accuracy, with significant improvements over previous models. You can review our latest model performance on our benchmarks page.

Should you invest in a custom model?

If you're still considering a custom model, ask yourself these four questions:

- Do you have something very unique (e.g., children's speech) about your data?

- Can you easily obtain large amounts of training data?

- Can you afford large amounts of training data?

- Do you have the ability, or budget, to continually update your model with the latest AI research?

If you answered yes to any of these questions, a custom model could be an option for you. But if you answered no, or are unsure, you'll likely find that a modern, general AI model not only meets your needs but exceeds your expectations. The best way to know for sure is to test it with your own data.

Try our API for free and see how our Universal models perform on your specific use case.

Frequently asked questions about custom speech recognition

What is the difference between ASR and STT?

ASR (Automatic Speech Recognition) refers to the broader technology for converting speech to text, while STT (speech-to-text) often refers to the specific output or function of an ASR system.

Is speech recognition considered AI?

Yes, modern speech recognition uses AI models and deep neural networks trained on vast amounts of audio and text data.

How accurate are modern general speech recognition models?

Leading general speech recognition models achieve near-human accuracy with very low Word Error Rates, making them reliable for most production applications. In contrast, academic literature shows that low-resource systems trained on small datasets can have word error rates as high as 50-80%, highlighting the accuracy gap between general and custom models.

What industries commonly use custom speech models?

Custom models are rare but sometimes used for highly specialized audio like children's speech, military communications, or legacy hardware systems with unique characteristics.
