customers

All customer stories

Top Voice AI companies are building with Assembly.

resources

Latest Release

Voice Agent API

Voice agents that get it right, respond instantly, and ship the same day with our new Voice Agent API

resources

Get clinical-grade accuracy in far-field, multi-speaker exam rooms and transparent pricing that scales with your growth.

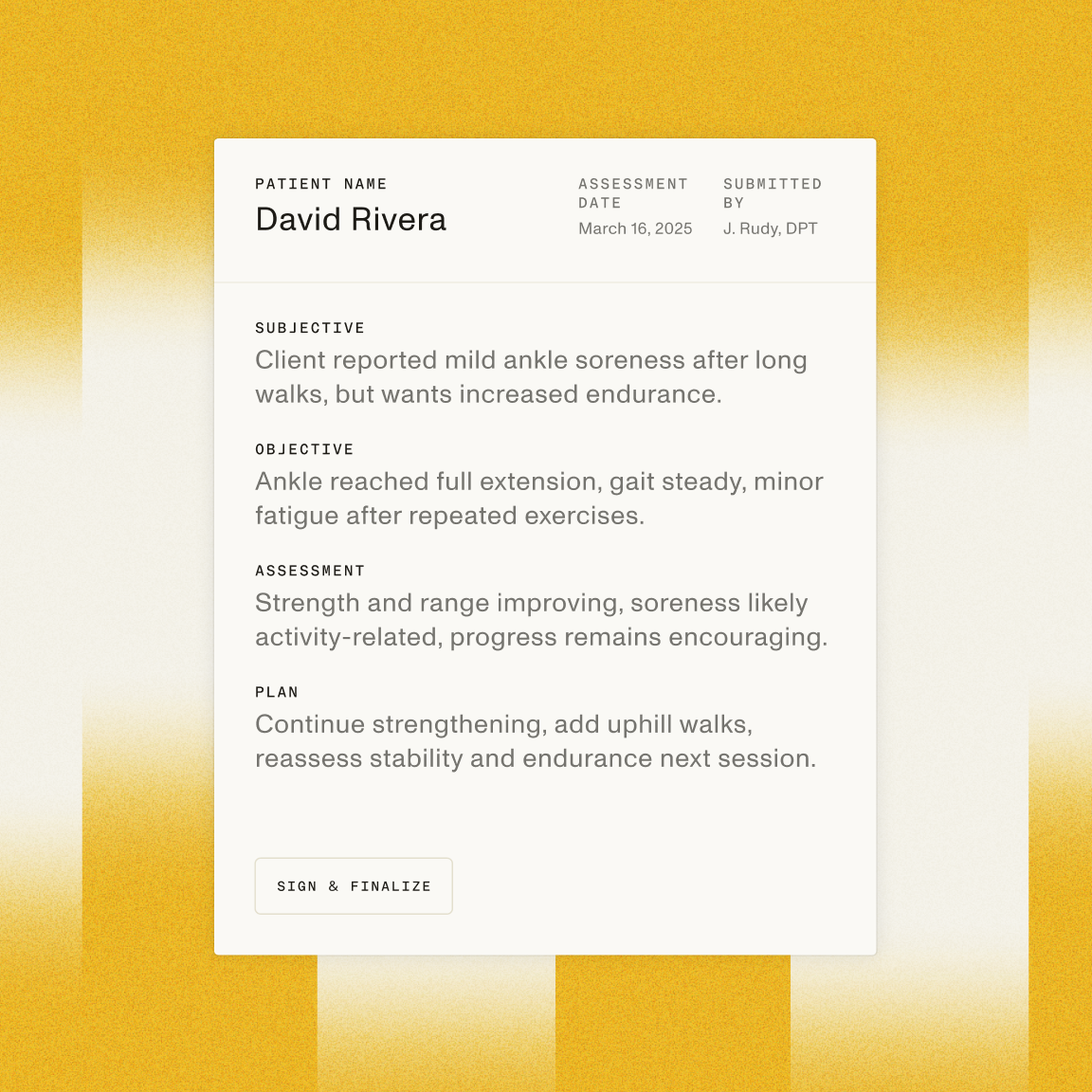

Automate manual processes and speed up routine encounters while extracting actionable insights from every patient interaction

Capture medical conversations from 20+ feet away as providers move, perform procedures, and interact with patients.

Build workflows that are powerful and compliant at a price point that scales.

Build powerful products on models that are engineered for patient interactions and clinical environments.

Learn why today's leading healthcare companies choose AssemblyAI to power their product experiences.

In the medical context, accuracy is highly important….[and] there can be multiple people present. Separating them is key to accuracy. The biggest impact AssemblyAI has had has been in enabling our technical team to focus on workflow-specific features rather than a general speech-to-text pipeline,

Jackson Bierfeldt, Cofounder + CTO, JotPsych

36%

improvement in WER

By leveraging AssemblyAI's accurate transcription capabilities through Dovetail, Careship can truly understand the needs of caregivers and patients, turning qualitative research into the foundation for better healthcare experiences across Europe.

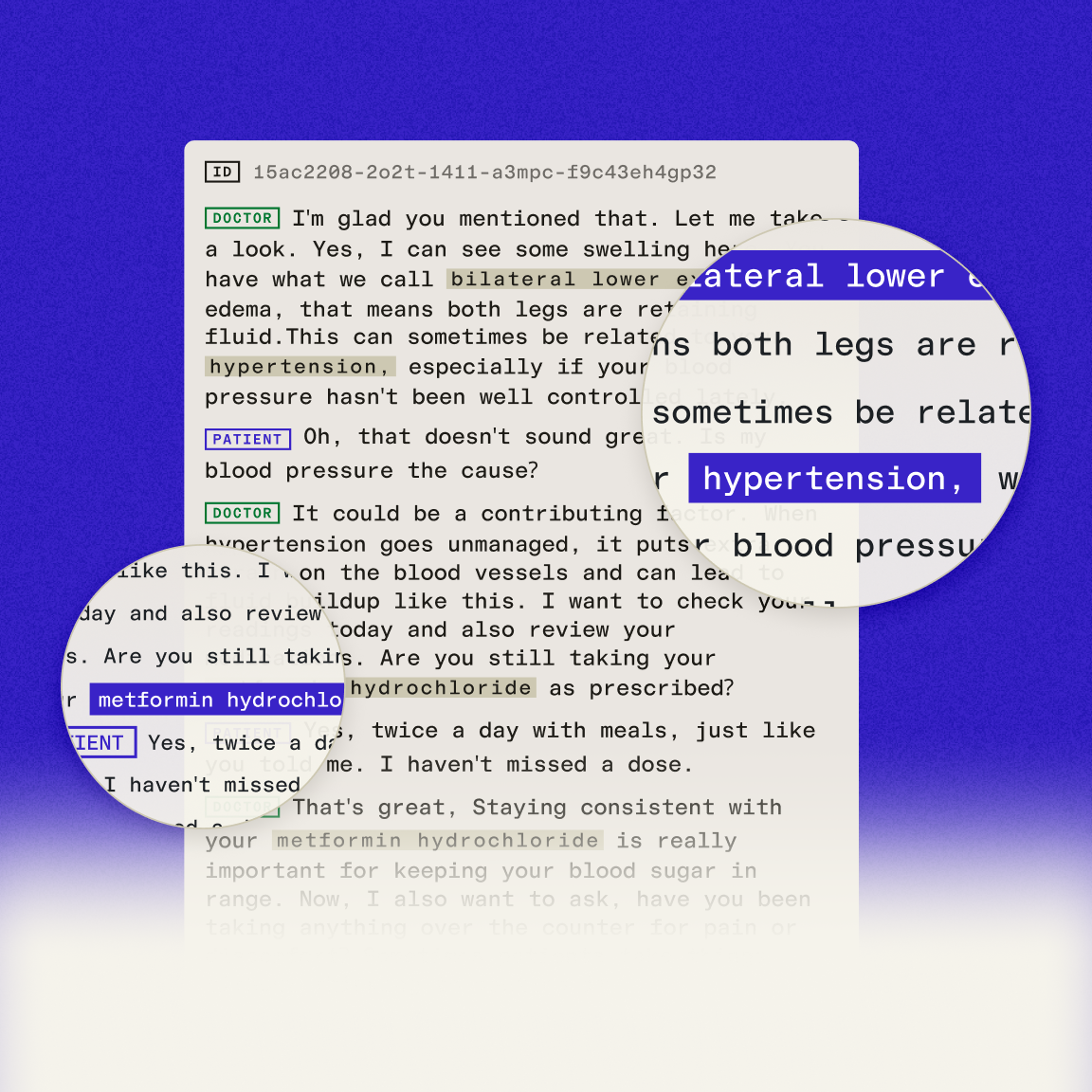

The terms that determine patient outcomes — medication names, dosages, and diagnoses — transcribed more accurately than ever.

Modern tools for superior intelligence

Get insights, industry trends, and breakthroughs on how Voice AI is powering today's provider and patient experiences.