Extract phone call insights with LLMs in Python

Learn how to automatically extract insights from customer calls with Large Language Models (LLMs) and Python.

Learn how to build a complete call analytics pipeline in Python using Large Language Models (LLMs) and AssemblyAI, a critical skill given that a recent industry survey found 76% of companies now embed conversation intelligence in over half of their customer interactions. This tutorial covers transcribing phone calls, separating speakers, and extracting structured insights—like summaries, action items, and contact information—using this sample customer call.

Here's an example of the structured output you'll generate:

SUMMARY:

- The caller is interested in getting an estimate for building a house on a property he is looking to purchase in Westchester.

ACTION ITEMS:

- Have someone call the customer back today to discuss building estimate.

- Set up time for builder to assess potential property site prior to purchase.

CONTACT INFORMATION:

Name: Lindstrom, Kenny

Phone number: 610-265-1715What is call analytics?

Call analytics is the automated process of transcribing phone conversations and applying AI models to extract structured data—summaries, sentiment scores, action items, and contact information—from raw audio. It replaces manual call review—a process where internal analysis shows managers can typically only review about 1-3% of all calls—with a pipeline that runs at scale: audio in, structured business intelligence out.

In practice, this means:

- Automatically identifying call reasons and customer sentiment

- Tracking agent script compliance and performance metrics

- Detecting product issues before they escalate

Types of call analytics

Call analytics generally falls into two categories:

This tutorial focuses on historical analytics using AssemblyAI's async API—ideal for generating comprehensive post-call summaries, extracting CRM data, and analyzing agent performance across thousands of calls.

How call analytics works

Here's the full pipeline, from raw audio to structured insight:

- Audio ingestion: The recorded call is submitted to a speech recognition model

- Transcription: The audio is converted to text using a model like Universal-3 Pro

- Speaker diarization: The transcript is labeled by speaker, distinguishing agent from customer

- LLM analysis: The transcript is sent to an LLM gateway with a structured prompt. This step is becoming standard practice, with recent research showing that over 85% of teams have integrated generative AI models for tasks like summarization and classification.

- Structured output: The LLM returns formatted data ready for your database or CRM

Every downstream insight is only as good as step 2. If the speech-to-text misreads a name or account number, the LLM responds to the wrong input—which is why AssemblyAI's Universal-3 Pro is the transcription model used throughout this tutorial.

Key metrics extracted by call analytics

When building a call analytics pipeline, you can configure your AI models to extract a wide variety of metrics depending on your business needs.

Call summaries and action items

Instead of relying on agents to manually type notes after every call, AI can instantly generate a concise summary of the conversation and a bulleted list of next steps. This saves wrap-up time per interaction, with related case study findings showing that similar AI tools can reduce documentation time by up to 90%.

Sentiment and tone

By analyzing the language used throughout the call, you can automatically flag conversations where the customer expressed frustration or dissatisfaction. This allows support managers to prioritize follow-ups and intervene when necessary.

Speaker and agent performance

Using speaker-labeled transcripts, call analytics can measure agent-specific metrics like script adherence, talk-to-listen ratios, and successful objection handling. This provides objective data for sales coaching.

Contact and entity information

AI models can accurately identify and extract specific entities mentioned during the call, such as names, phone numbers, email addresses, and account details. This ensures your CRM stays up to date with the correct information.

Getting started

Before you start, you'll need:

- Python installed on your machine

- An AssemblyAI API key — get one free here

- The full code is available in the project repository on GitHub

Setting up your environment

First, create a directory for the project and navigate into it. Then, create a virtual environment and activate it:

# Mac/Linux

python3 -m venv venv

. venv/bin/activate

# Windows

python -m venv venv

.\venv\Scripts\activate.batNow, install the AssemblyAI Python SDK and the requests library, which we'll use to interact with the LLM gateway:

pip install assemblyai requestsFinally, set your API key as an environment variable with the following command, where you replace <your_key> with your AssemblyAI API key copied from your dashboard:

# Mac/Linux

export ASSEMBLYAI_API_KEY=<your_key>

# Windows

set ASSEMBLYAI_API_KEY=<your_key>Transcribing the call with speech-to-text

The first step in any call analytics pipeline is converting audio to text. Create a file called main.py and add the following code to transcribe the sample call:

import assemblyai as aai

import requests

import os

# Get the API key from environment variables

api_key = os.getenv("ASSEMBLYAI_API_KEY")

if not api_key:

raise Exception("ASSEMBLYAI_API_KEY environment variable not set.")

# --- 1. Transcribe the audio file ---

config = aai.TranscriptionConfig(speech_models=["universal-3-pro"])

transcriber = aai.Transcriber()

transcript = transcriber.transcribe(

"https://storage.googleapis.com/aai-web-samples/Custom-Home-Builder.mp3",

config=config,

)

# Check for transcription errors

if transcript.status == aai.TranscriptStatus.error:

print(f"Transcription failed: {transcript.error}")

exit(1)

print(f"TRANSCRIPT:\n{transcript.text}\n")This script initializes the transcriber, submits the audio file URL for processing, and checks for any errors before printing the final text. The AssemblyAI SDK handles audio format detection automatically—you can pass URLs to MP3, WAV, M4A, and most other common formats.

Extracting call insights with the LLM gateway

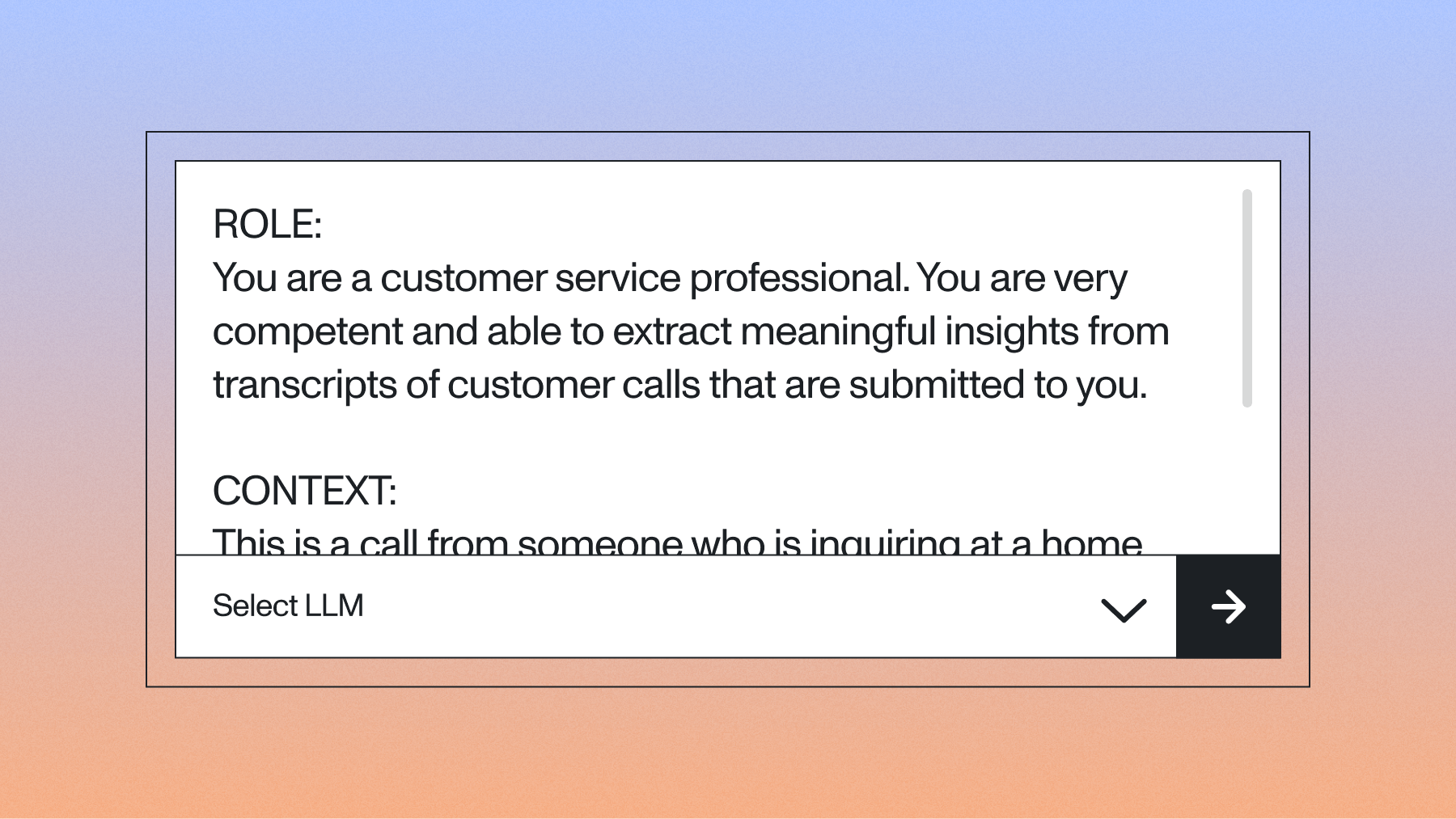

With the transcript ready, you can send it to the AssemblyAI LLM gateway for analysis. The key to accurate extraction is a well-structured prompt with clear sections for role, context, instruction, and output format.

Append the following code to your main.py file:

# --- 2. Define the prompt for the LLM Gateway ---

prompt = """

ROLE:

You are a customer service professional. You are very competent and able to extract meaningful insights from transcripts of customer calls that are submitted to you.

CONTEXT:

This is a call from someone who is inquiring at a home building company

INSTRUCTION:

Respond to the following command: "Provide a short summary of the phone call, and list any outstanding action items after the summary. Finally, provide the caller's contact information. Do not include a preamble."

FORMAT:

SUMMARY:

a one or two sentence summary

ACTION ITEMS:

a bulleted list of sufficiently detailed action items

CONTACT INFORMATION:

Name: Last name, first name

Phone number: The caller's phone number

""".strip()

# --- 3. Extract insights with the LLM Gateway ---

llm_gateway_data = {

"model": "claude-sonnet-4-6", # A fast and capable model

"messages": [

{

"role": "user",

"content": f"{prompt}\n\nTranscript: {transcript.text}"

}

],

"max_tokens": 1000

}

headers = {

"authorization": api_key

}

# Make the request to the LLM Gateway

response = requests.post(

"https://llm-gateway.assemblyai.com/v1/chat/completions",

headers=headers,

json=llm_gateway_data

)

# Check for errors

if response.status_code != 200:

print(f"LLM Gateway request failed: {response.text}")

exit(1)

# Print the result

result = response.json()

print("--- CALL INSIGHTS ---")

print(result["choices"][0]["message"]["content"].strip())Tip: For lower-cost processing, you can swap claude-sonnet-4-6 with claude-haiku-4-5-20251001, which offers near-instant responses at a lower price point.

Run the script from your terminal:

python main.pyAfter a few moments, you'll see the extracted insights:

SUMMARY:

- The caller is interested in getting an estimate for building a house on a property he is looking to purchase in Westchester.

ACTION ITEMS:

- Have someone call the customer back today to discuss building estimate.

- Set up time for builder to assess potential property site prior to purchase.

CONTACT INFORMATION:

Name: Lindstrom, Kenny

Phone number: 610-265-1715If you check the call transcript (or listen to the original call), you'll see that all of the information is accurate.

Enabling per-speaker analysis with speaker diarization

Call analytics without speaker separation treats the entire conversation as one voice. By enabling speaker diarization, you can dramatically improve the quality of agent performance analysis, sentiment breakdowns by speaker, and action item attribution.

To enable this feature, update your transcription configuration to set speaker_labels=True:

config = aai.TranscriptionConfig(speech_models=["universal-3-pro"], speaker_labels=True)

transcript = transcriber.transcribe(

"https://storage.googleapis.com/aai-web-samples/Custom-Home-Builder.mp3",

config=config

)

# Format the transcript with speaker labels for the LLM

utterances = [f"Speaker {u.speaker}: {u.text}" for u in transcript.utterances]

diarized_text = "\n".join(utterances)You can then pass this diarized_text to the LLM gateway instead of the raw transcript. This enables prompts like:

prompt = """

INSTRUCTION:

Analyze this customer service call and provide:

1. A summary of what the customer (Speaker B) requested

2. An evaluation of how well the agent (Speaker A) handled the request

3. Any commitments the agent made that require follow-up

"""Getting structured JSON output for downstream integration

Beyond text summaries, you can extract granular insights directly into structured JSON. This enables seamless integration with your database, CRM, or analytics dashboard.

To enforce JSON output, modify the FORMAT instruction in your prompt and use the response_format parameter:

prompt = """

ROLE:

You are a customer service analyst extracting structured data from calls.

CONTEXT:

This is a customer inquiry call.

INSTRUCTION:

Extract the following information from the transcript.

FORMAT:

Respond with a valid JSON object containing these keys:

- "summary": A one-sentence summary of the call

- "action_items": An array of action item strings

- "sentiment": One of "positive", "neutral", or "negative"

- "contact": An object with "name" and "phone" keys

""".strip()

llm_gateway_data = {

"model": "claude-sonnet-4-6",

"messages": [

{

"role": "user",

"content": f"{prompt}\n\nTranscript: {diarized_text}"

}

],

"response_format": {"type": "json_object"},

"max_tokens": 1000

}

The LLM gateway will return a properly formatted JSON string that you can parse directly:

import json

result = response.json()

insights = json.loads(result["choices"][0]["message"]["content"])

# Now you can access structured data

print(f"Sentiment: {insights['sentiment']}")

print(f"Action items: {len(insights['action_items'])}")See the API reference for more details on response formatting options.

Error handling and performance optimization

The AssemblyAI SDK raises aai.errors.APIError for authentication or network failures—but the API call can succeed while the transcription job itself fails, so you must always check transcript.status separately.

Here's a robust error handling pattern that covers both surfaces:

import requests

try:

transcript = transcriber.transcribe(AUDIO_URL)

if transcript.status == aai.TranscriptStatus.error:

raise Exception(f"Transcription failed: {transcript.error}")

# Assuming 'headers' and 'llm_gateway_data' are defined

response = requests.post(

"https://llm-gateway.assemblyai.com/v1/chat/completions",

headers=headers,

json=llm_gateway_data

)

response.raise_for_status() # Raises an HTTPError for bad responses (4xx or 5xx)

result = response.json()

# ... process result ...

except aai.errors.APIError as e:

# Handles SDK-specific API errors

print(f"AssemblyAI API Error: {e}")

except requests.exceptions.RequestException as e:

# Handles network errors for the LLM Gateway request

print(f"LLM Gateway Request Error: {e}")

except Exception as e:

# Handles other errors, including transcription job failures

print(f"An error occurred: {e}")Scaling call analytics for production

For high call volumes, avoid blocking on transcription by providing a webhook_url—AssemblyAI will POST to your endpoint when the job completes:

config = aai.TranscriptionConfig(

speaker_labels=True,

webhook_url="https://your-api.com/webhooks/assemblyai"

)

# transcribe_async returns immediately with a transcript ID

transcript = transcriber.transcribe_async(

"https://storage.googleapis.com/aai-web-samples/Custom-Home-Builder.mp3",

config=config

)

print(f"Submitted job: {transcript.id}")Here's the recommended pattern for a resilient, event-driven system:

You now have the fundamental building blocks to transcribe and understand phone calls at scale. Try our API for free to begin extracting insights from your own audio data, or test the LLM gateway without writing any code in our Playground.

Use cases for call analytics

The call analytics pipeline you built in this tutorial applies directly to several common production use cases:

- Lead intelligence: Automatically capture contact details, interest level, and next steps from sales calls

- Conversational data analysis: Identify trends across thousands of calls—common objections, frequently asked questions, product feedback

- Sales coaching: Use diarized transcripts to analyze talk ratios, objection handling, and script adherence

- Compliance monitoring: Verify required disclosures were made and flag calls that need review

- Customer support triage: Route insights to the right team and prioritize follow-ups based on sentiment. This is an impactful use case, as a 2025 survey found that 69% of companies reported improved customer service after implementing conversation intelligence.

To learn more about potential use cases for extracting call insights, check out this blog on AI-powered call analytics.

Frequently asked questions about call analytics in Python

How do I extract per-speaker insights from a call?

Set speaker_labels=True in your TranscriptionConfig to get utterances labeled by speaker, then format them as "Speaker A: {text}" before passing the transcript to the LLM gateway. From there, you can prompt the LLM to evaluate each speaker independently—agent performance, customer sentiment, or both.

How do I handle different audio formats?

The AssemblyAI Python SDK supports most audio and video formats automatically, with FLAC or 16-bit PCM WAV recommended for optimal accuracy.

What's the best way to process very long phone calls?

Voice conversations generate roughly 125-150 tokens per minute, so calls longer than an hour can exceed most LLM context windows—break transcripts into chunks by speaker turn or fixed time intervals before sending to the LLM gateway. According to technical guidance, chunking by speaker turn preserves the most conversational context.

How can I get structured JSON output for CRM or database integration?

You can guarantee JSON output by explicitly requesting it in your prompt's formatting instructions and setting the response_format parameter to {"type": "json_object"} in your LLM gateway API request. This ensures the response can be safely parsed using json.loads().

How can I improve the accuracy of extracted insights?

Structure your prompt with explicit ROLE, CONTEXT, and FORMAT sections, and use few-shot examples for complex extraction tasks. To reduce hallucinations, ground the LLM's responses in verified data using retrieval-augmented generation (RAG).

Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat. Duis aute irure dolor in reprehenderit in voluptate velit esse cillum dolore eu fugiat nulla pariatur.