OpenAI Whisper for developers: Choosing between API, local, or server-side transcription

In this 3-part blog series, we’ll explore three ways to integrate OpenAI’s Whisper into your JavaScript apps—API, browser-based, or self-hosted. We’ll also compare trade-offs in speed, privacy, and flexibility.

OpenAI's Whisper is an open-source, open-weight speech-to-text model released in September 2022. It has become popular in the developer community for two reasons: its accuracy rivals or exceeds that of many commercial providers, and its code and weights are freely available.

Earlier speech models such as wav2vec 2.0, HuBERT, and XLS-R typically require domain-specific fine-tuning. wav2vec 2.0 uses contrastive learning on audio segments, HuBERT clusters speech units using hidden representations, and XLS-R extends wav2vec 2.0 to multiple languages. In contrast, Whisper takes a fundamentally different approach.

OpenAI researchers trained Whisper using large-scale weak supervision on 680,000 hours of audio paired with transcripts collected from the internet. "Weak supervision" means the training data wasn't perfectly labeled or curated by humans but included diverse, real-world audio with corresponding text that might contain noise or inaccuracies.

The research paper describes this data only in general terms. Sources of this kind might include YouTube videos with user-generated captions, podcast episodes with transcripts, TED Talks and other conference presentations with professional transcriptions, audiobooks, and news broadcasts with captions.

The research team invested significant effort into data quality control, implementing sophisticated filtering mechanisms, including:

- Heuristic detection to remove machine-generated transcripts

- Language detection to ensure that the spoken language matches the transcript language

- Text analysis to verify natural language features (punctuation, capitalization)

- Fuzzy deduplication to reduce repetitive content

- Alignment verification to ensure transcripts match their audio
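To make the transcript heuristics above concrete, here is a minimal TypeScript sketch of how a filter for machine-generated text might look. It is not OpenAI's actual pipeline: the function name `looksMachineGenerated`, the 20-word threshold, and the ASCII-only letter matching are all illustrative assumptions.

```typescript
// Hypothetical helper: flags transcripts that look machine-generated.
// The rules and thresholds are illustrative assumptions, not values from the Whisper paper.
function looksMachineGenerated(transcript: string): boolean {
  // ASCII letters only, to keep the sketch simple; real data needs Unicode-aware checks.
  const letters = transcript.match(/[A-Za-z]/g) ?? [];
  if (letters.length === 0) {
    return true; // nothing to judge, so treat the transcript as unusable
  }

  const text = letters.join("");
  const allUpper = text === text.toUpperCase();
  const allLower = text === text.toLowerCase();

  // Human-written transcripts of any length usually contain some punctuation.
  const punctuationCount = (transcript.match(/[.,!?;:]/g) ?? []).length;
  const wordCount = transcript.trim().split(/\s+/).length;
  const longWithoutPunctuation = wordCount >= 20 && punctuationCount === 0;

  return allUpper || allLower || longWithoutPunctuation;
}
```

A transcript written entirely in one case, or a long one with no punctuation at all, is flagged, while ordinary mixed-case, punctuated text passes through.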

This careful filtering contributed significantly to Whisper's performance. Training on large-scale, weakly supervised data offers several advantages:

- Inherent robustness: Training on diverse real-world audio from varying environments, speakers, and recording conditions allowed Whisper to handle real-world audio variations better than models trained on clean, controlled datasets.

- No fine-tuning required: Unlike models that need additional training for specific domains, Whisper works well out-of-the-box across different scenarios and audio types, allowing users to start using the model without any knowledge of model fine-tuning.

- Multitask capabilities: The training process enabled Whisper to learn multiple related tasks simultaneously—transcription in different languages, translation, language detection, and more—all within a single model, making it a go-to tool for a wide variety of tasks.

These characteristics unlock capabilities previously out of reach for most projects. By providing a single model that works exceptionally well without domain-specific training, Whisper democratizes advanced speech recognition technology. For JavaScript developers, this means being able to integrate sophisticated audio understanding into web applications and Node.js services where the complexity or cost of commercial APIs was previously prohibitive, or where existing open-source solutions fell short. This opens the door to entirely new categories of speech-enabled applications—from accessibility tools to interactive voice interfaces—all with near commercial-grade accuracy in an open-source package that can be deployed across various JavaScript environments.

While Whisper democratizes speech recognition, production applications often require additional considerations—from handling edge cases and maintaining consistent accuracy across diverse audio conditions to ensuring proper formatting and reducing hallucinations. These nuanced challenges are where the difference between a proof-of-concept and a production-ready solution becomes apparent.

When implementing Whisper in JavaScript applications, developers have several integration options, each suited to different project requirements:

- OpenAI Whisper API. The most straightforward approach for JavaScript developers who prioritize simplicity. This REST API offers transcription and translation into English, and works with audio files up to 25 MB and real-time audio streams. It costs approximately $0.006 per minute of audio, and advanced capabilities include prompting the model with additional context. Note that as of May 2025, OpenAI does not offer a service-level agreement. When an SLA is a requirement, the Microsoft Azure offering provides both latency and availability SLAs for its Azure OpenAI API.

- Browser-based implementation. JavaScript developers can run Whisper directly in the browser using WebAssembly and TensorFlow.js, enabling offline functionality and preserving user privacy by processing audio locally.

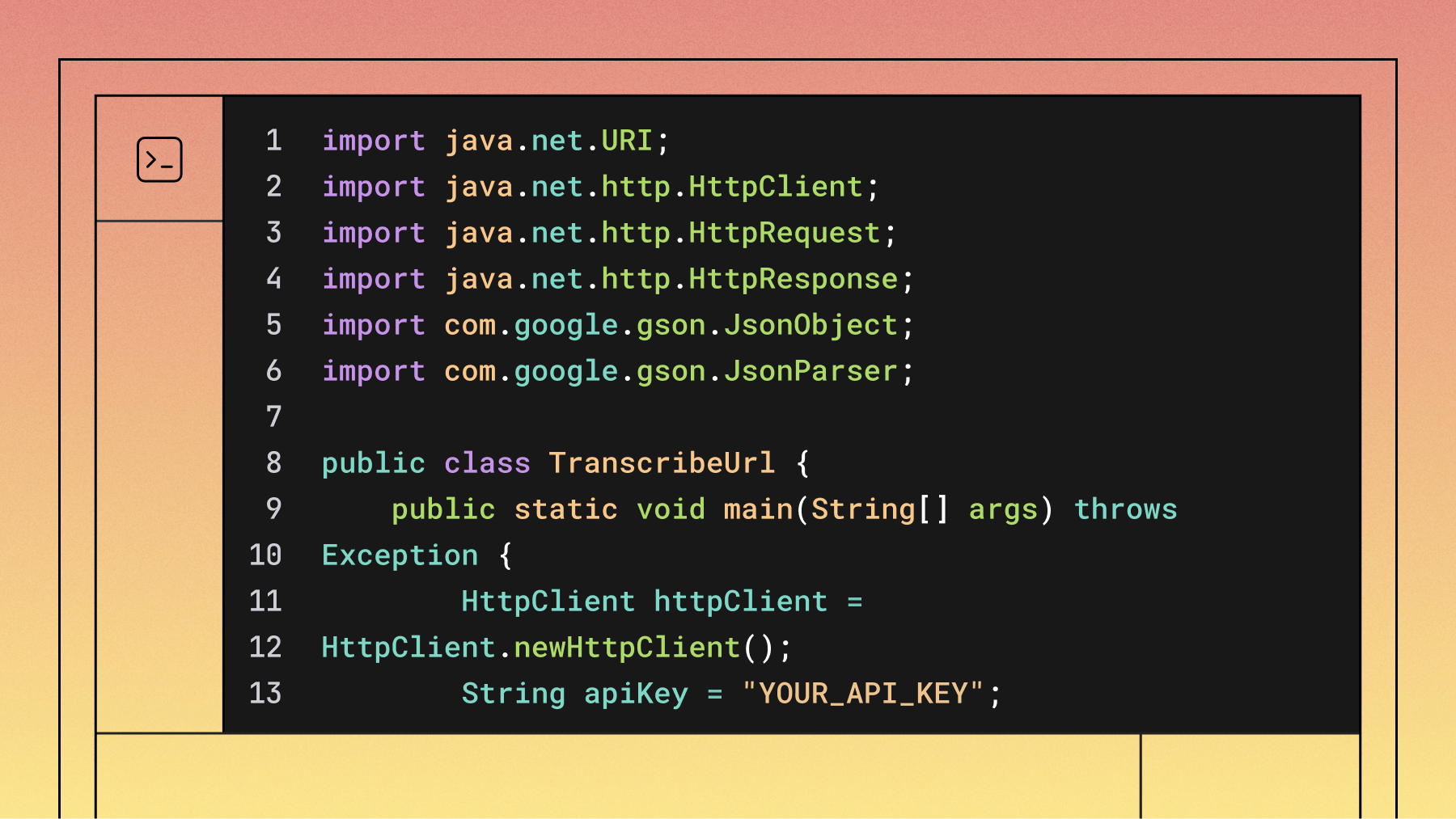

- Server-side integration. For more processing power and flexibility, Whisper can be self-hosted in a Node.js backend application using libraries like node-whisper or through custom integrations with the underlying Python model.

Each option has trade-offs between latency, privacy, cost, and infrastructure needs.
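As a rough illustration of the API option, the sketch below sends an audio file to OpenAI's transcription endpoint using `fetch` and `FormData`, which are built into browsers and Node.js 18+. The helper names (`fitsUploadLimit`, `estimateCostUsd`, `transcribe`) are hypothetical, the 25 MB limit is assumed to mean 25 × 1024 × 1024 bytes, and the price constant simply repeats the approximate figure quoted above, so verify both against OpenAI's current documentation.

```typescript
// Figures taken from the article text; treat them as assumptions that may change.
const MAX_UPLOAD_BYTES = 25 * 1024 * 1024; // 25 MB upload limit (binary megabytes assumed)
const USD_PER_MINUTE = 0.006; // approximate price per minute of audio

// Hypothetical helper: checks the file size before uploading.
function fitsUploadLimit(sizeInBytes: number): boolean {
  return sizeInBytes <= MAX_UPLOAD_BYTES;
}

// Hypothetical helper: rough cost estimate for a recording of the given length.
function estimateCostUsd(durationSeconds: number): number {
  return (durationSeconds / 60) * USD_PER_MINUTE;
}

// Sends the audio to the transcription endpoint and returns the transcript text.
async function transcribe(
  audio: Blob,
  fileName: string,
  apiKey: string,
  prompt?: string, // optional context, e.g. product names or jargon
): Promise<string> {
  if (!fitsUploadLimit(audio.size)) {
    throw new Error("Audio exceeds the 25 MB upload limit; split or compress it first.");
  }

  const form = new FormData();
  form.append("file", audio, fileName);
  form.append("model", "whisper-1");
  if (prompt) {
    form.append("prompt", prompt);
  }

  const response = await fetch("https://api.openai.com/v1/audio/transcriptions", {
    method: "POST",
    headers: { Authorization: `Bearer ${apiKey}` },
    body: form,
  });
  if (!response.ok) {
    throw new Error(`Transcription request failed with status ${response.status}`);
  }

  // With the default JSON response format, the transcript is in the `text` field.
  const result = (await response.json()) as { text: string };
  return result.text;
}
```

At the quoted rate, a 10-minute recording comes to roughly $0.06. In a real application the API key should stay on the server rather than being shipped to the browser.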

In our upcoming blog series, we’ll be offering practical guidance for implementing each approach, complete with code examples, performance considerations, and real-world application scenarios. We'll explore how to choose the right implementation strategy based on your specific requirements for latency, cost, and deployment constraints.

