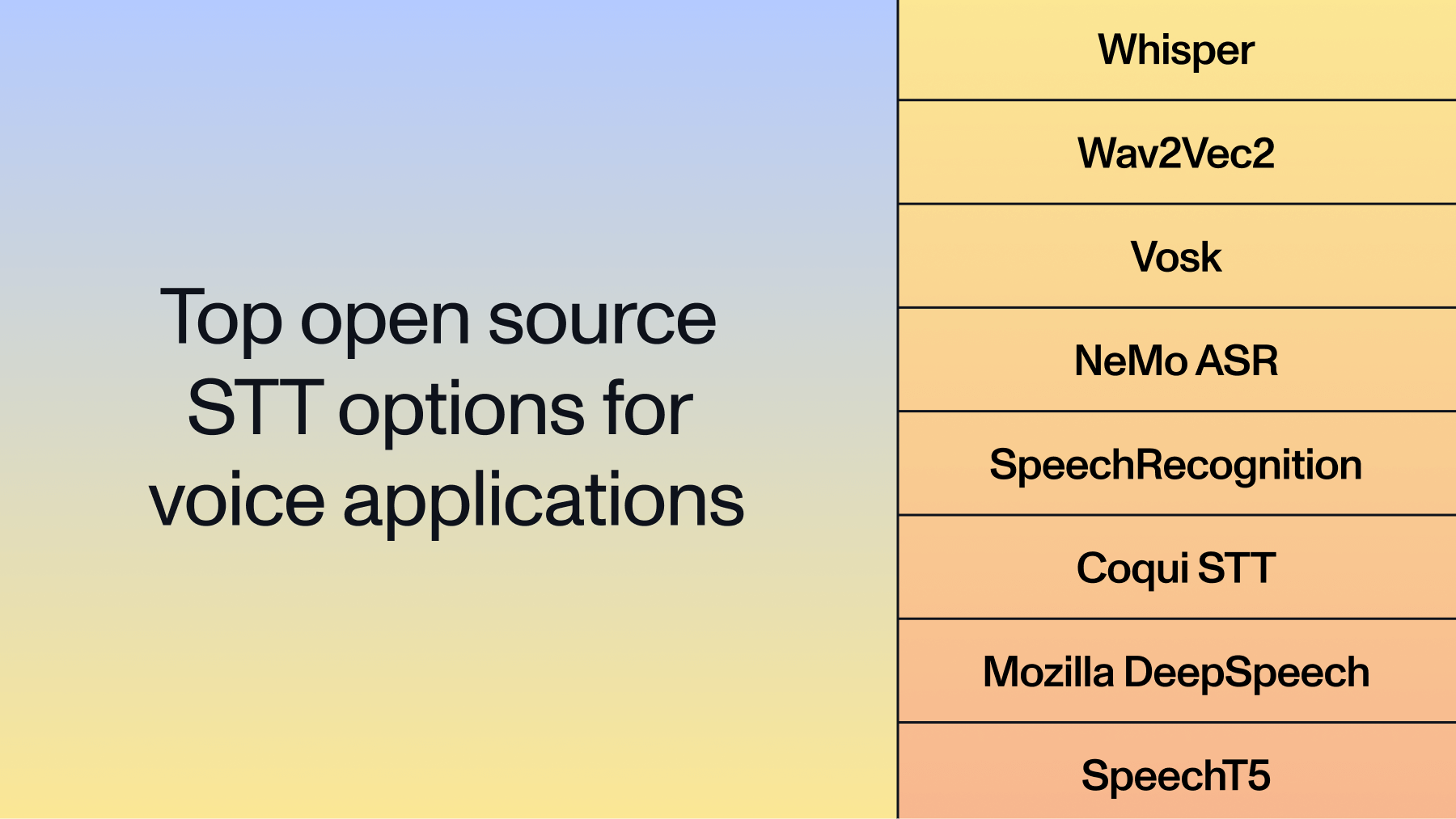

Top 8 open source STT options for voice applications in 2026

This comprehensive comparison examines eight open source STT solutions, analyzing their technical capabilities, implementation requirements, and ideal use cases to help you build voice applications from scratch.

Choosing an open source STT (speech-to-text) model today brings different trade-offs in accuracy, real-time performance, language support, and deployment complexity. According to AssemblyAI research on 455 voice agent builders, 52.5% cite accuracy and misunderstandings as their top building challenge—and real-world WER often runs two to three times worse than clean benchmark scores. Every option on this list will require extensive development—often weeks to months—before it is production-ready. Some excel at offline processing, others dominate streaming scenarios, and a few offer extensive customization for specific domains.

What is open source speech recognition

Open source speech recognition is a category of Voice AI models whose weights, architecture, and code are publicly available—meaning developers can download, run, and modify them without licensing fees or API dependencies. Instead of sending audio to a third-party service, you run inference directly on your own hardware, keeping full control over your data pipeline.

Because audio never leaves your infrastructure, this offline capability addresses major privacy and compliance hurdles for healthcare and financial applications, as recent research highlights. You also get the freedom to fine-tune models on your own domain data, something commercial APIs rarely allow.

But that control comes with real operational overhead. You're responsible for:

- Provisioning and managing GPU infrastructure

- Building the inference API layer

- Handling audio preprocessing and format conversion

- Managing model versioning and updates

- Scaling to meet traffic demands

How to evaluate open source speech recognition models

Evaluating Voice AI models requires more than benchmark scores on clean audio. You need to test against your actual audio data to understand real-world performance. Start by building a diverse test set that mirrors your production environment—then measure Word Error Rate (WER) across each condition to find where the model breaks down.

Your test set should include:

- Background noise from your target environment (office, call center, mobile)

- Overlapping speakers and crosstalk

- Heavy accents and regional dialects

- Domain-specific terminology relevant to your use case

- Varying audio quality levels, including compressed or low-bandwidth recordings

Key evaluation metrics

Word Error Rate (WER) measures the percentage of words transcribed incorrectly: the number of substitutions, deletions, and insertions divided by the number of words in the reference transcript. A WER of 10% means roughly one error for every ten reference words. Lower is better, but context matters: a model that scores 15% WER on challenging call center audio may be stronger than one that scores 10% on clean podcast recordings.
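To make the metric concrete, here is a minimal sketch of WER as a word-level edit distance. It assumes simple lowercasing and whitespace tokenization; real evaluations usually apply heavier text normalization (numbers, punctuation, casing) before scoring, so treat the function name and normalization here as illustrative.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")
    # prev[j] holds the edit distance between the reference words seen so far and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1       # reference word missing from the hypothesis
            insertion = curr[j - 1] + 1  # extra word in the hypothesis
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)


wer = word_error_rate(
    "please call me at nine tomorrow",
    "please call me at five tomorrow",
)
print(f"WER: {wer:.1%}")
```

Because insertions count as errors, WER can exceed 100% when a model hallucinates extra words, which is worth knowing when you read scores on noisy audio.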

Real-time factor (RTF) indicates whether a model can process audio faster than it's spoken. An RTF of 0.5 means the model transcribes audio twice as fast as real-time—critical for streaming applications. An RTF above 1.0 means the model can't keep up with live audio.
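RTF is just a ratio, so you can measure it for any model with a timer. The sketch below assumes a hypothetical `transcribe` callable that takes raw audio bytes; swap in whatever inference call your stack exposes.

```python
import time
from typing import Callable


def real_time_factor(processing_seconds: float, audio_seconds: float) -> float:
    """RTF = time spent transcribing / duration of the audio."""
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return processing_seconds / audio_seconds


def measure_rtf(transcribe: Callable[[bytes], str], audio: bytes, audio_seconds: float) -> float:
    """Time one call to a (hypothetical) transcribe function and return its RTF."""
    start = time.perf_counter()
    transcribe(audio)
    return real_time_factor(time.perf_counter() - start, audio_seconds)


# 30 seconds of compute for 60 seconds of audio
rtf = real_time_factor(processing_seconds=30.0, audio_seconds=60.0)
verdict = "keeps up with" if rtf < 1.0 else "falls behind"
print(f"RTF {rtf:.2f}: {verdict} live audio")
```

Measure RTF on the hardware you plan to deploy on, and over many clips rather than one, since a single run can be skewed by model warm-up.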

Latency measures the delay between audio input and text output. For real-time voice assistants, this is where most open source setups fail. AssemblyAI's analysis of production voice agents identifies a 300ms end-to-end response threshold as the breaking point above which conversations start to feel unnatural. Batch processing applications can tolerate much higher latency, but any conversational interface should design its STT layer around that budget from day one.
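Averages hide the slow responses users actually notice, so it helps to check percentiles against the budget. This is a rough sketch using a nearest-rank percentile; the latency samples are made up for illustration, and the 300ms figure is the end-to-end threshold cited above, of which STT is only one part.

```python
import math

LATENCY_BUDGET_MS = 300  # end-to-end threshold cited in the text above


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("need at least one latency sample")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical per-request latencies in milliseconds
latencies_ms = [120, 140, 160, 180, 200, 210, 250, 290, 310, 450]

p50 = percentile(latencies_ms, 50)
p95 = percentile(latencies_ms, 95)
print(f"p50={p50}ms p95={p95}ms within budget: {p95 <= LATENCY_BUDGET_MS}")
```

In this made-up sample the median looks comfortable while the 95th percentile blows the budget, which is the pattern to watch for in conversational interfaces.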

Testing methodology

Don't rely solely on published benchmarks. Create test audio that represents your actual use case:

- Record samples from your target environment (call center, meeting room, mobile app)

- Include edge cases like accented speech, technical jargon, and crosstalk

- Test with varying audio quality levels (compression artifacts, bandwidth limitations)

- Measure performance degradation as conditions worsen
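One way to see where a model breaks down is to tag each test clip with its recording condition and aggregate WER per tag. The sketch below assumes you have already counted word errors per clip; the condition names and counts are hypothetical.

```python
from collections import defaultdict


def wer_by_condition(results):
    """results: iterable of (condition, word_errors, reference_words), one tuple per clip."""
    errors = defaultdict(int)
    words = defaultdict(int)
    for condition, word_errors, reference_words in results:
        errors[condition] += word_errors
        words[condition] += reference_words
    # Pool errors over words so long clips are not underweighted
    return {c: errors[c] / words[c] for c in words if words[c] > 0}


# Hypothetical per-clip counts, for illustration only
results = [
    ("clean", 4, 100),
    ("clean", 6, 100),
    ("call_center", 18, 100),
    ("crosstalk", 27, 90),
]

report = wer_by_condition(results)
baseline = report["clean"]
for condition, wer in sorted(report.items(), key=lambda item: item[1]):
    print(f"{condition:12s} WER {wer:.1%} ({wer / baseline:.1f}x clean)")
```

Reporting each condition as a multiple of the clean baseline makes degradation easy to compare across models.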

Beyond accuracy, evaluate resource consumption before committing to a model. A solution that delivers excellent transcripts but requires massive GPU clusters may not be economically viable at scale.

Memory usage

How much RAM or VRAM does the model require at inference time?

CPU/GPU utilization

Can the model run on available hardware, or does it require expensive GPU provisioning?

Cost per audio hour

What does it actually cost to process audio at your expected volume, including infrastructure?
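A back-of-the-envelope estimate for cost per audio hour follows from RTF: one hour of audio consumes roughly RTF hours of GPU time, and idle capacity inflates that by your utilization. The sketch below assumes one stream per GPU with no batching, and the prices are hypothetical placeholders rather than quotes from any provider.

```python
def cost_per_audio_hour(gpu_hourly_cost: float, rtf: float, utilization: float = 1.0) -> float:
    """Estimated infrastructure cost to transcribe one hour of audio.

    gpu_hourly_cost: what you pay per GPU-hour
    rtf: real-time factor measured on that GPU
    utilization: fraction of paid GPU time spent doing useful work (0 < u <= 1)
    """
    if gpu_hourly_cost < 0 or rtf <= 0:
        raise ValueError("gpu_hourly_cost must be non-negative and rtf positive")
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return gpu_hourly_cost * rtf / utilization


# Hypothetical numbers: $2.00 per GPU-hour, RTF 0.1, GPUs busy half the time
estimate = cost_per_audio_hour(gpu_hourly_cost=2.00, rtf=0.1, utilization=0.5)
print(f"~${estimate:.2f} per audio hour (compute only)")
```

This covers compute only. Engineering time, storage, networking, and on-call effort sit on top of it.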

Understanding open source STT requirements

Modern voice applications demand more than basic transcription. They need systems that handle real-world audio conditions while maintaining acceptable performance across diverse hardware environments.

Accuracy under pressure

Real applications encounter background noise, overlapping speakers, varied accents, and technical terminology. The best open source solutions maintain performance despite these challenges—not just on clean benchmark audio. Pay particular attention to entity accuracy: how reliably the model transcribes names, email addresses, phone numbers, account numbers, and medical terms. These are the tokens that actually break downstream workflows—an LLM can recover from a misheard filler word, but it cannot recover from a wrong email address or credit card number.
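WER weights every word equally, so it is worth scoring entities separately. Here is a simplified sketch that checks whether each reference entity survives intact in the transcript. The normalization rules and function names are assumptions for illustration; production scoring typically needs format-aware matching (spoken digits, spelled-out emails, and so on).

```python
import re


def normalize(text: str) -> str:
    """Lowercase, keep letters, digits, '@' and '.', and drop sentence-final dots."""
    cleaned = re.sub(r"[^a-z0-9@.]+", " ", text.lower())
    tokens = [token.strip(".") for token in cleaned.split()]
    return " ".join(token for token in tokens if token)


def entity_accuracy(reference_entities: list[str], hypothesis: str) -> float:
    """Fraction of reference entities that appear intact in the hypothesis."""
    if not reference_entities:
        raise ValueError("need at least one reference entity")
    # Pad with spaces so 'ann' does not match inside 'joanna'
    padded = f" {normalize(hypothesis)} "
    hits = sum(f" {normalize(entity)} " in padded for entity in reference_entities)
    return hits / len(reference_entities)


# Hypothetical example: the email address is off by one letter
entities = ["jo@example.com", "555-0142", "Alvarez"]
transcript = "Email joe@example.com or call 555 0142 and ask for Alvarez."
score = entity_accuracy(entities, transcript)
print(f"Entity accuracy: {score:.0%}")
```

A single wrong character in the email address barely moves WER on this sentence, but it drops entity accuracy by a third, which matches how the error would hurt a downstream workflow.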

Resource efficiency

Some models demand high-end GPUs, others run efficiently on standard CPUs, and a few operate on edge devices with minimal resources. Your deployment environment will determine which trade-off is acceptable.

Customization capability

Healthcare applications need medical terminology accuracy. Customer service tools may require sentiment detection. The most valuable open source solutions support fine-tuning so you can optimize for your specific domain.

Technical comparison matrix

The following table compares key performance metrics across eight open source STT solutions. WER (Word Error Rate) indicates transcription errors—lower percentages mean better accuracy. Model size affects memory requirements and inference speed, while hardware requirements determine deployment flexibility.

Detailed solution analysis

1. OpenAI Whisper

Architecture

Transformer-based encoder-decoder with attention mechanisms

Training data

680,000 hours of multilingual audio from the web, as detailed in the original paper

Whisper's robustness comes from massive, diverse training data. The model handles accented speech, background noise, and technical terminology well; in fact, research findings show it outperforms other models in high-noise environments. Its multilingual capability works zero-shot—no additional training needed for new languages.

Strengths:

- Strong WER performance (10-30%) across challenging audio conditions

- Built-in punctuation, capitalization, and timestamp generation

- Multiple model sizes balancing accuracy vs. speed

- Strong performance on domain-specific terminology

Limitations:

- Whisper itself is batch-only—teams that need true streaming either invest significant engineering effort adapting it, switch to a natively streaming model like Vosk, or move to a managed API built on Universal-3 Pro like AssemblyAI.

- Larger models need substantial GPU memory.

- Batch processing introduces latency for interactive apps.

Best for: Applications prioritizing accuracy over real-time requirements.

2. Wav2Vec2

Architecture

Self-supervised transformer learning speech representations

Training approach

Unsupervised pre-training + supervised fine-tuning

Wav2Vec2's self-supervised approach learns from unlabeled audio, making it highly effective with limited labeled training data. This architecture excels at fine-tuning for specific domains or accents. For example, one notable study demonstrated that after pre-training, the model achieved strong results on a key benchmark with only ten minutes of labeled fine-tuning data.

Strengths:

- Good streaming performance (requires adaptations like wav2vec-S)

- Strong fine-tuning results with custom data

- Multiple pre-trained checkpoints for different use cases

- Efficient inference on modern GPUs

Limitations:

- Requires streaming adaptations for optimal real-time performance

- Setup complexity higher than plug-and-play solutions

- Requires GPU for optimal real-time performance

- Limited built-in language detection capabilities

Best for: Real-time applications requiring customization.

3. Vosk

Architecture

Kaldi-based DNN-HMM hybrid system optimized for efficiency

Focus

Lightweight deployment with reasonable accuracy

Vosk prioritizes practical deployment over cutting-edge accuracy. Its efficient implementation and compact model sizes make it viable for resource-constrained environments while maintaining acceptable transcription quality.

Strengths:

- Compact model sizes (50MB-1.5GB)

- CPU-only operation with good performance

- True offline capability without internet dependency

- Simple integration with multiple programming languages

Limitations:

- WER performance trails transformer-based models on challenging audio

- Limited advanced features like Speaker Diarization

- Fewer language options than larger frameworks

Best for: Mobile applications, embedded systems, and offline voice interfaces where resource efficiency matters more than perfect accuracy.

4. NVIDIA NeMo ASR

Architecture

Conformer and Transformer models with extensive optimization

Focus

Comprehensive tooling for enterprise deployment

NeMo offers extensive customization capabilities through complete pipelines from data preparation through model deployment.

Strengths:

- Good WER performance (6-20%) with optimized architectures; the Canary model achieves a published 6.67% WER. Note: the current #1 position on the Hugging Face Open ASR Leaderboard is held by AssemblyAI's Universal-3 Pro, a commercial model—NeMo Canary remains a top open source option but is not the absolute state of the art.

- Comprehensive training and deployment infrastructure

- Good streaming performance with batching support

- Good documentation and community support

- Models range from about 110M parameters (Parakeet-TDT-110M) to 1.1B+ parameters

Limitations:

- Steep learning curve requiring ML expertise

- GPU infrastructure essential for training and inference

- Complex setup may be overkill for simple applications

Best for: ML experts who want serious customization.

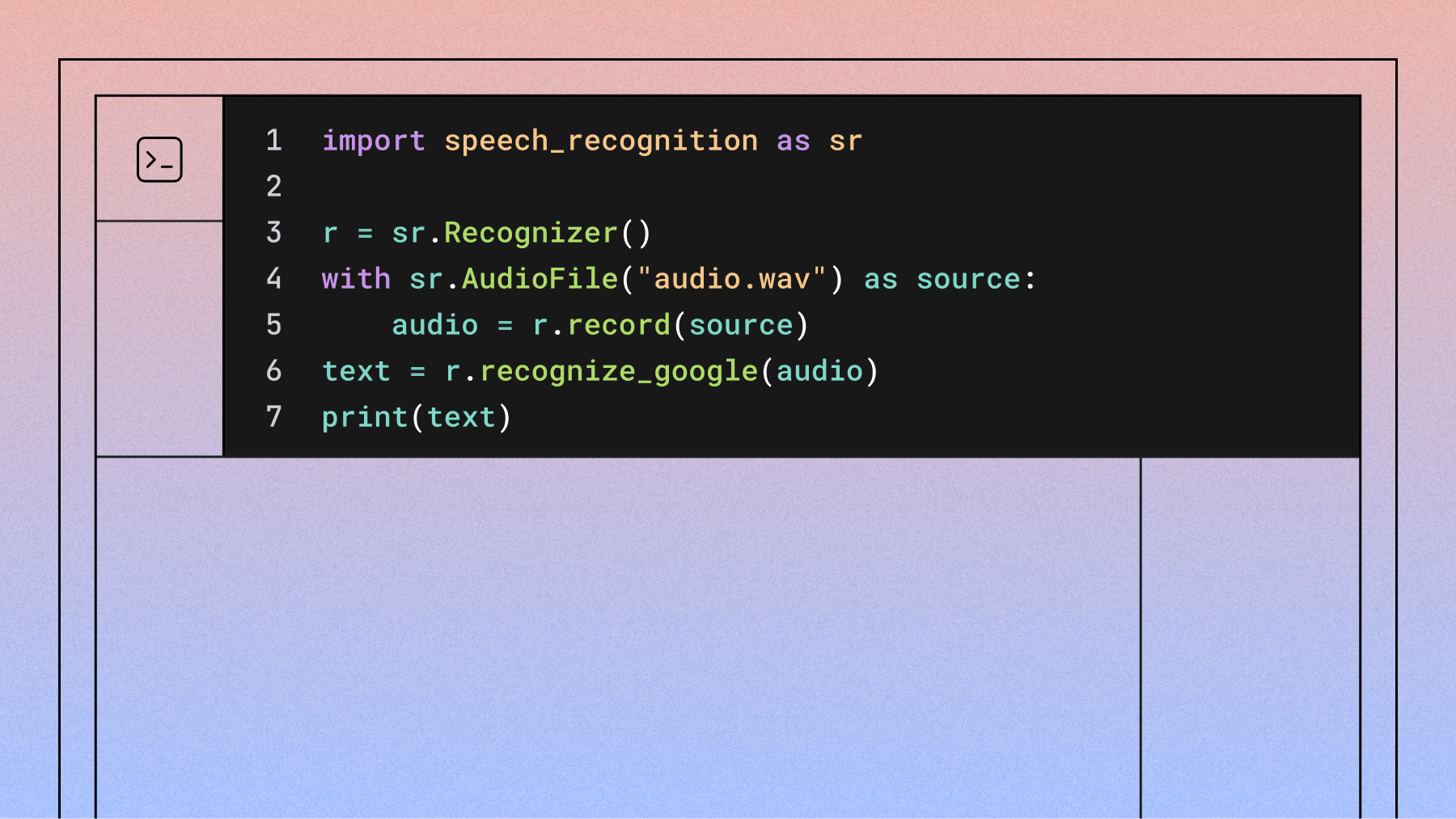

5. SpeechRecognition Library

Architecture

Unified interface to multiple recognition engines

Purpose

Rapid prototyping and educational use

The SpeechRecognition library abstracts different speech recognition services behind a simple API. While not the most accurate option, its simplicity makes it invaluable for quick experiments and learning.

Strengths:

- Extremely simple API requiring minimal code

- Multiple backend options (CMU Sphinx, Google, etc.)

- No GPU requirements or complex dependencies

- Perfect for educational projects and rapid testing

Limitations:

- WER significantly higher than modern deep learning models

- Limited customization and advanced features

- Dependence on external services for best performance

Best for: Learning projects, proof-of-concept development, and situations where perfect accuracy isn't critical.

6. Coqui STT

Architecture

Improved DeepSpeech with community enhancements

Development model

Community-driven and open; active development ended in late 2023

Originally a fork of Mozilla DeepSpeech, Coqui STT was developed by a community-driven team. However, Coqui ceased its cloud services and active development on its STT models in late 2023. While the open-source repositories remain available, they are no longer actively maintained or supported, making them a risky choice for new production systems.

Historical Strengths:

- Offered active community development with regular improvements.

- Provided better models and tooling than the original DeepSpeech.

- Documentation remains valuable for educational purposes.

- The training pipeline, though no longer maintained, is a useful reference for custom model training approaches.

Current Limitations:

- Project is no longer actively maintained or supported.

- WER lags significantly behind modern transformer models.

- Not recommended for new production use due to lack of updates and support.

- Smaller community compared to tech giant projects.

Best for: Historical reference or educational purposes. Not recommended for new projects.

7. Mozilla DeepSpeech

Architecture

RNN-based Deep Speech implementation

Status

Discontinued project

Mozilla formally discontinued DeepSpeech in November 2021, archiving the repository. The project is no longer maintained, though the code remains available for reference and educational purposes.

Historical Strengths:

- Complete local processing ensuring data privacy

- TensorFlow Lite support for mobile deployment

- Clear documentation valuable for learning

- Established training pipeline for custom models

Current Limitations:

- Project officially discontinued and archived

- No ongoing development or security updates

- WER significantly higher (worse) than modern approaches

- Limited language support and model updates

Best for: Educational projects studying older architectures, historical reference, or scenarios where the existing codebase meets specific legacy requirements (not recommended for new projects).

8. SpeechT5

Architecture

Unified transformer for speech-to-text and text-to-speech

Research focus

Experimental unified speech processing

Microsoft's SpeechT5 represents research into unified speech processing frameworks. While primarily academic, it demonstrates interesting capabilities for applications requiring both transcription and synthesis.

Strengths:

- Unified approach to multiple speech tasks

- Strong research foundation and documentation

- Interesting architectural innovations

- Good performance on clean, controlled audio

Limitations:

- High computational requirements limiting practical deployment

- Limited production tooling and support (primarily academic)

- Requires significant expertise for effective implementation

- Not optimized for real-time applications

Best for: Research applications, experimental development, and scenarios exploring unified speech processing approaches.

Implementation decision framework

Choosing the optimal open source STT solution requires balancing multiple factors against your specific requirements.

- Start with accuracy requirements

- Consider real-time needs carefully

- Evaluate resource constraints early

- Plan for customization needs

- Consider maintenance overhead

Production deployment considerations

Moving from evaluation to production requires attention to operational details that academic comparisons often overlook.

Model serving architecture affects scalability and costs. Some solutions integrate naturally with standard web frameworks, others benefit from specialized inference servers. Consider whether you need request batching, model caching, or load balancing for expected traffic patterns.

Audio preprocessing often determines real-world performance more than model choice. Proper noise reduction, volume normalization, and silence detection can dramatically improve WER regardless of your selected solution.
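As a rough illustration of two of these steps, the sketch below applies peak normalization and threshold-based silence trimming to a list of float samples in the range -1.0 to 1.0. The thresholds are arbitrary assumptions, and a real pipeline would more likely work on arrays with an audio library and use frame-level energy or a dedicated voice activity detector.

```python
def peak_normalize(samples: list[float], target_peak: float = 0.9) -> list[float]:
    """Scale samples so the loudest one reaches target_peak."""
    peak = max((abs(s) for s in samples), default=0.0)
    if peak == 0.0:
        return list(samples)  # pure silence: nothing to scale
    gain = target_peak / peak
    return [s * gain for s in samples]


def trim_silence(samples: list[float], threshold: float = 0.01) -> list[float]:
    """Drop leading and trailing samples whose amplitude is below threshold."""
    voiced = [i for i, s in enumerate(samples) if abs(s) >= threshold]
    if not voiced:
        return []
    return samples[voiced[0] : voiced[-1] + 1]


# Tiny hypothetical signal: quiet edges around two voiced samples
raw = [0.0, 0.001, 0.2, -0.3, 0.0]
prepared = peak_normalize(trim_silence(raw))
print(prepared)
```

Trimming before normalizing keeps a stray click in the silent region from setting the gain for the whole clip.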

Error handling strategies become critical in production environments. Plan for network interruptions, malformed audio input, and edge cases that can break transcription pipelines. Implement graceful degradation rather than hard failures.
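Graceful degradation can be as simple as retrying with backoff and then returning a flagged empty result instead of raising. The sketch below wraps a hypothetical `transcribe` callable; the return shape and retry policy are assumptions you would adapt to your own service.

```python
import time
from typing import Callable


def transcribe_with_fallback(
    transcribe: Callable[[bytes], str],
    audio: bytes,
    retries: int = 2,
    backoff_seconds: float = 0.5,
) -> dict:
    """Try transcription a few times, then degrade to an empty, flagged result."""
    if not audio:
        # Malformed or empty input: fail fast without touching the model
        return {"text": "", "ok": False, "error": "empty audio"}
    last_error = ""
    for attempt in range(retries + 1):
        try:
            return {"text": transcribe(audio), "ok": True, "error": ""}
        except Exception as exc:  # broad on purpose: any failure should degrade, not crash
            last_error = str(exc)
            if attempt < retries:
                # Exponential backoff between attempts
                time.sleep(backoff_seconds * (2 ** attempt))
    return {"text": "", "ok": False, "error": last_error}
```

Callers can then check `ok` and decide what degraded behavior means for them, such as asking the user to repeat or queueing the audio for later processing.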

Performance monitoring helps maintain service quality over time. Track WER metrics, processing latency, and resource utilization to identify issues before they affect end users. Consider implementing A/B testing frameworks for model updates.

Data pipeline optimization impacts both accuracy and costs. Efficient audio format handling, proper sampling rate management, and smart chunking strategies can reduce processing costs while improving results.

When open source might not be enough

Open source STT solutions excel in many scenarios, but certain requirements push teams toward a managed commercial model. The most common trigger is entity accuracy in production—when an error on a name, email, phone number, account number, or medical term has a direct business consequence, the additional development time and infrastructure costs of self-hosting rarely justify the savings.

Commercial services typically provide higher accuracy through access to larger, more diverse training datasets, and foundational research confirms that word error rate can be halved for every 16x increase in training data. AssemblyAI's Universal-3 Pro currently holds the #1 position on the Hugging Face Open ASR Leaderboard and is specifically tuned for the entity-heavy tokens—email addresses, phone numbers, proper nouns—that determine whether a downstream LLM responds to the right input. Managed APIs also ship advanced Speech Understanding features (Speaker Diarization, Sentiment Analysis, PII Redaction) out of the box, along with an LLM Gateway for summarization and question answering without managing another stack.

For applications where transcription quality determines user experience—accessibility services, customer support analytics, clinical workflows, voice agents—commercial speech recognition delivers reliability across real-world conditions that open source alternatives struggle to match consistently. Companies like Spotify and CallRail rely on AssemblyAI to power their products without managing complex infrastructure, and customers building voice agents on the AssemblyAI Voice Agent API get STT, LLM, and TTS from a single WebSocket instead of stitching together three providers.

If you want to skip the infrastructure complexity while maintaining high accuracy, try AssemblyAI's API for free to see how Universal-3 Pro compares to your open source evaluations on your own audio.

Final recommendations

Today's open source STT landscape provides genuine alternatives to commercial services for most voice application needs. The key lies in matching solution capabilities to your specific requirements rather than defaulting to the most popular option.

For maximum accuracy: Choose Whisper when transcription quality matters more than real-time performance. Its robustness across languages and audio conditions justifies the batch processing limitation for many use cases.

For real-time applications: Wav2Vec2 (with proper streaming adaptations) offers the best balance of accuracy and streaming performance, especially when fine-tuned for your specific domain. NeMo ASR provides even better accuracy but requires more infrastructure investment.

For resource-efficient deployment: Vosk delivers surprisingly good results for its computational requirements. It's the clear choice for mobile, embedded, or high-volume applications where efficiency trumps perfect accuracy.

For rapid development: SpeechRecognition gets basic functionality working immediately, making it perfect for prototyping and proof-of-concept development.

Speech recognition represents just one component of an effective voice application. Consider your broader architecture, user experience requirements, and team expertise when selecting solutions—the best technical choice means nothing if your team can't implement and maintain it.

Frequently asked questions about open source speech recognition

How do accuracy metrics translate to real-world performance?

Word Error Rate (WER) on academic benchmarks rarely reflects production performance—a model that scores well on clean audio can degrade significantly with background noise, accents, or crosstalk. Always test against your own audio data before committing to a model.

What are the prerequisites for implementing open source Voice AI models?

You need machine learning engineering expertise to deploy these models effectively—your team must understand GPU infrastructure, inference APIs, audio preprocessing, and model versioning. Expect weeks to months of development time before reaching production readiness.

When do commercial solutions make more sense than open source?

Commercial APIs are usually the better choice when your team lacks ML expertise, you need advanced features like Speaker Diarization out of the box, or you're working under tight deadlines. The total cost of ownership for open source—infrastructure, engineering time, and ongoing maintenance—often exceeds API costs once you factor in everything.

Can I fine-tune open source models for my specific domain?

Yes—Wav2Vec2 and NeMo ASR offer the most robust fine-tuning support, while Whisper's fine-tuning capabilities are more limited. You'll need labeled audio data from your domain, GPU resources for training, and familiarity with transfer learning.

How do I handle streaming transcription with models that only support batch processing?

Common workarounds include chunking audio into small segments for sequential processing, using third-party streaming wrappers, or switching to a model like Vosk that natively supports streaming. Each approach carries latency and accuracy trade-offs worth testing against your specific requirements.
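The chunking workaround can be sketched as computing overlapping windows over the sample stream, so words that straddle a boundary appear whole in at least one chunk. The window and overlap sizes below are illustrative assumptions, and merging the overlapping transcripts afterwards is a separate problem not shown here.

```python
def chunk_bounds(total_samples: int, chunk_samples: int, overlap_samples: int) -> list[tuple[int, int]]:
    """Return (start, end) sample indices for overlapping chunks covering the audio."""
    if chunk_samples <= 0:
        raise ValueError("chunk_samples must be positive")
    if not 0 <= overlap_samples < chunk_samples:
        raise ValueError("overlap_samples must be in [0, chunk_samples)")
    step = chunk_samples - overlap_samples
    bounds = []
    start = 0
    while start < total_samples:
        end = min(start + chunk_samples, total_samples)
        bounds.append((start, end))
        if end == total_samples:
            break  # the final chunk already reaches the end of the audio
        start += step
    return bounds


# 65 seconds of 16 kHz audio, 30-second chunks with 5 seconds of overlap
SAMPLE_RATE = 16_000
for start, end in chunk_bounds(65 * SAMPLE_RATE, 30 * SAMPLE_RATE, 5 * SAMPLE_RATE):
    print(f"{start / SAMPLE_RATE:5.1f}s to {end / SAMPLE_RATE:5.1f}s")
```

Shorter chunks lower latency but give the model less context, so accuracy usually drops. Test a few sizes against your own audio before settling on one.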

When should I switch from self-hosted Whisper to a managed API?

Three triggers most commonly drive the switch: (1) entity accuracy problems in production—emails, phone numbers, account numbers, or medical terms coming back wrong in ways that break downstream workflows; (2) the need for real-time streaming, where Whisper's batch-only design forces expensive adaptations; and (3) total cost of ownership—GPU infrastructure, ML engineering time, and on-call burden—exceeding what a per-hour managed API would charge. If any of these apply, benchmark a managed API built on a state-of-the-art model (such as AssemblyAI's Universal-3 Pro) against your own audio before committing further engineering to the self-hosted path.
