What is speaker diarization and how does it work? (Complete 2026 Guide)

In this blog post, we'll take a closer look at how speaker diarization works, why it's useful, some of its current limitations, and how to easily use it on audio/video files.

Speaker diarization powers the technology that automatically identifies "who spoke when" in conversations. As a recent market survey reveals, 76% of companies now embed conversation intelligence in more than half of their customer interactions, making it essential to understand this technology—whether you're analyzing customer calls, transcribing meetings, or building voice AI applications. This comprehensive guide explores how speaker diarization works, why it's essential for modern voice applications, and how to implement it effectively.

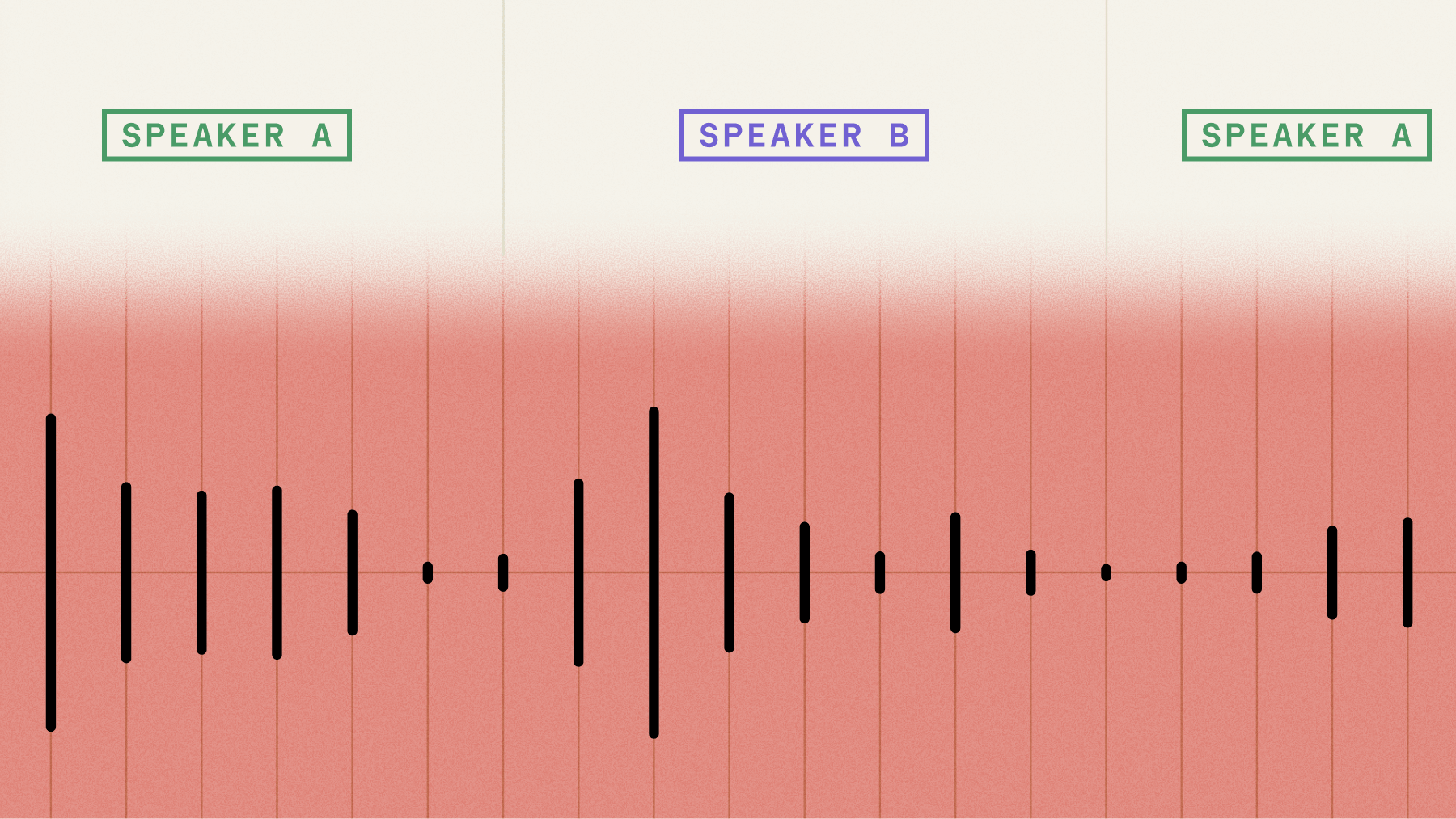

What is speaker diarization?

Speaker diarization is an AI process that automatically identifies who spoke when in audio recordings containing multiple speakers. It assigns speaker labels like "Speaker A" and "Speaker B" to each word or segment, transforming unstructured conversations into organized, speaker-attributed transcripts.

In Automatic Speech Recognition (ASR), this involves two key functions: detecting the number of unique speakers and attributing each word to its speaker.

Speaker diarization performs two key functions:

- Speaker Detection: Identifying the number of distinct speakers in an audio file.

- Speaker Attribution: Assigning segments of speech to the correct speaker.

The result is a transcript where each segment of speech is tagged with a speaker label (e.g., "Speaker A," "Speaker B"), making it easy to distinguish between different voices.

Beyond generic labels, you can also use Speaker Identification to replace labels like "Speaker A" with meaningful identifiers like actual names ("John Smith") or roles ("Customer," "Agent"). This feature analyzes the conversational context to intelligently assign the correct identity to each speaker, transforming a simple diarized transcript into a fully attributed conversation.

Why is speaker diarization useful?

Speaker diarization transforms unreadable transcript walls into meaningful conversations. Key benefits include:

- Improved readability: Clear speaker attribution eliminates confusion about who said what

- Time savings: No manual effort required to assign speaker labels

- Mental clarity: Reduces cognitive load when processing conversation data

For example, let's look at the before and after transcripts below with and without speaker diarization:

Without:

But how did you guys first meet and how do you guys know each other? I actually met her not too long ago. I met her, I think last year in December, during pre season, we were both practicing at Carson a lot. And then we kind of met through other players. And then I saw her a few her last few torments this year, and we would just practice together sometimes, and she's really, really nice. I obviously already knew who she was because she was so good. Right. So. And I looked up to and I met her. I already knew who she was, but that was cool for me. And then I watch her play her last few events, and then I'm actually doing an exhibition for her charity next month. I think super cool. Yeah. I'm excited to be a part of that. Yeah. Well, we'll definitely highly promote that. Vania and I are both together on the Diversity and Inclusion committee for the USDA, so I'm sure she'll tell me all about that. And we're really excited to have you as a part of that tournament. So thank you so much. And you have had an exciting year so far. My goodness. Within your first WTI 1000 doubles tournament, the Italian Open.Congrats to that. That's huge. Thank you.

With:

Speaker A: But how did you guys first meet and how do you guys know each other?

Speaker B: I actually met her not too long ago. I met her, I think last year in December, during pre season, we were both practicing at Carson a lot. And then we kind of met through other players. And then I saw her a few her last few torments this year, and we would just practice together sometimes, and she's really, really nice. I obviously already knew who she was because she was so good.

Speaker A: Right. So.

Speaker B: And I looked up to and I met her. I already knew who she was, but that was cool for me. And then I watch her play her last few events, and then I'm actually doing an exhibition for her charity next month.

Speaker A: I think super cool.

Speaker B: Yeah. I'm excited to be a part of that.

Speaker A: Yeah. Well, we'll definitely highly promote that. Vania and I are both together on the Diversity and Inclusion committee for the USDA. So I'm sure she'll tell me all about that. And we're really excited to have you as a part of that tournament. So thank you so much. And you have had an exciting year so far. My goodness. Within your first WTI 1000 doubles tournament, the Italian Open. Congrats to that. That's huge.

Speaker B: Thank you.

See how much easier the transcription is to read with speaker diarization?

Speaker diarization enables powerful conversation analytics. Industry research shows that analytics and intelligence are now the most common use cases for speech understanding.

Analytics capabilities include:

- Individual speaker behavior analysis

- Pattern recognition across conversations

- Predictive insights from conversation data

Real-world examples:

- A call center might analyze agent messages versus customer requests, or complaints, to identify trends that could help facilitate better communication. This provides a significant advantage over manual methods; recent analysis shows that traditional, manual call sampling captures less than 2 percent of all customer interactions.

- A podcast service might use speaker labels to identify the host and guest, making transcriptions more readable for end users.

- A telemedicine platform might identify doctor and patient to create an accurate transcript for an EHR system. This is a critical function, as research shows an accurate record of the conversation is essential, with patient history contributing to 76% of initial diagnoses.

How does speaker diarization work?

Speaker diarization applies speaker labels ("Speaker A," "Speaker B") to each transcribed word. Modern AI models execute this through four core steps:

- Step 1: Audio Segmentation

- Step 2: Speaker Embedding Generation

- Step 3: Speaker Count Estimation

- Step 4: Clustering and Assignment

Step 1: Audio segmentation

Audio segmentation divides recordings into utterances of 0.5-10 seconds each. AI models need sufficient audio data to identify speakers accurately.

Example utterances:

- Utterance 1: "Hello my name is Cindy"

- Utterance 2: "I like dogs and live in San Francisco"

Each utterance gets assigned to a speaker label during the clustering process.

There are many ways to break up an audio/video file in a set of utterances, with one common way being to use silence and punctuation markers. As internal research shows, there is a measurable drop-off in the ability to correctly assign an utterance to a speaker when utterances are less than one second.

Step 2: Speaker embedding generation

Each utterance passes through an AI model that generates speaker embeddings. As academic research shows, these embeddings—high-dimensional numerical representations of unique speaker characteristics—became a standard approach after i-vectors found great success in speaker recognition.

The visualization below shows how embeddings capture speaker features:

We do a similar process to convert not words, but segments of audio, into embeddings as well.

Step 3: Speaker count estimation

Next, we need to make a choice about how many speakers are present in the audio file—this is a key feature of a modern speaker diarization model. Legacy Diarization systems required knowing how many speakers were in an audio/video file ahead of time, but a major benefit of modern models is that they can accurately predict this number.

Our first goal here is to overestimate the number of speakers. Through clustering methods, we want to estimate the highest number of speakers that is reasonably possible. Why overestimate? It's much easier to combine speakers' utterances if the model breaks them up into different speaker labels than it is to disentangle two speakers being combined into one.

After this initial step, we go back and combine speakers, or disentangle speakers, as needed to get an accurate number.

Step 4: Clustering and assignment

Finally, speaker diarization models take the embeddings (produced above), and cluster them into as many clusters as there are speakers. For example, if a diarization model predicts there are four speakers in an audio file, the embeddings will be forced into four groups based on the "similarity" of the embeddings.

For example, in the below image, let's assume each dot is an utterance. The utterances get clustered together based on their similarity—with the idea being that each cluster is a unique speaker.

There are many ways to determine similarity of embeddings, and this is a core component of accurately predicting speaker labels with a Speaker Diarization model. Recent architectural advances in speaker embedding models have improved clustering accuracy, particularly for short utterances and challenging acoustic conditions.

After this step, you now have a transcription complete with accurate speaker labels!

By default, AssemblyAI's models are optimized to identify up to 10 distinct speakers. For use cases with a known number of participants outside this range, you can use the speaker_options parameter to specify a minimum and maximum number of expected speakers.

Main approaches to speaker diarization

Speaker diarization systems use two fundamentally different technical approaches to solve the "who spoke when" problem. Understanding these methodologies is crucial for selecting the right solution and setting realistic performance expectations.

Pipeline-based (clustering) systems

The traditional approach treats speaker diarization as a multi-stage pipeline, where each component handles a specific task in sequence:

- Voice Activity Detection (VAD): First identifies which parts of the audio contain speech versus silence or background noise

- Segmentation: Divides the speech regions into uniform chunks for processing

- Embedding Extraction: Generates numerical representations (embeddings) that capture unique voice characteristics

- Clustering: Groups similar embeddings together, with each cluster representing a unique speaker

Pipeline-based systems offer key advantages and trade-offs:

- Advantages: Transparent processing, stage-specific optimization, easier debugging

- Disadvantages: Error propagation—mistakes in early stages cascade through the entire pipeline

End-to-end neural systems

Modern end-to-end systems use a single neural network to perform speaker diarization directly. These systems, often built on transformer architectures, learn to map raw audio to speaker-labeled segments without explicit intermediate stages.

Rather than separate models for each task, one unified model learns the entire process. This approach captures complex patterns that pipeline systems might miss.

Recent architectural advances in end-to-end models better handle challenging scenarios:

- Subtle voice changes between speakers

- Overlapping speech patterns

- Brief utterances that challenged older systems

Trade-off: Less interpretability makes debugging more difficult when errors occur.

Hybrid approaches

Some systems combine elements of both approaches. They might use neural networks for embedding extraction but traditional clustering for speaker grouping, or employ end-to-end models for initial predictions followed by rule-based post-processing for refinement.

The choice between approaches often depends on your specific requirements. Pipeline systems work well when you need to understand and control each processing stage. End-to-end systems excel when you prioritize accuracy and have diverse, challenging audio conditions.

Evaluating speaker diarization performance

Diarization Error Rate (DER) measures accuracy as the percentage of incorrectly attributed speech time. This metric is part of a widely used evaluation scheme that has been a staple in diarization studies since the 2006 Rich Transcription (RT) evaluation.

DER calculation includes three error types:

- Speaker confusion: The duration of speech that is assigned to the wrong speaker.

- False alarm speech: The duration where non-speech audio (like silence or background noise) is incorrectly labeled as speech.

- Missed detection: The duration of speech that the system fails to detect entirely.

The total error duration is then divided by the total duration of the audio file to get the final DER percentage. A lower DER indicates higher accuracy. Understanding this metric is key to benchmarking different models and choosing a solution that meets your application's accuracy requirements.

When benchmarking diarization systems, you'll also encounter the Speaker Count Error Rate metric, which measures accuracy in determining the correct number of speakers. AssemblyAI's models achieve a low speaker count error rate, providing reliable speaker identification even in challenging acoustic conditions.

Comparing speaker diarization solutions

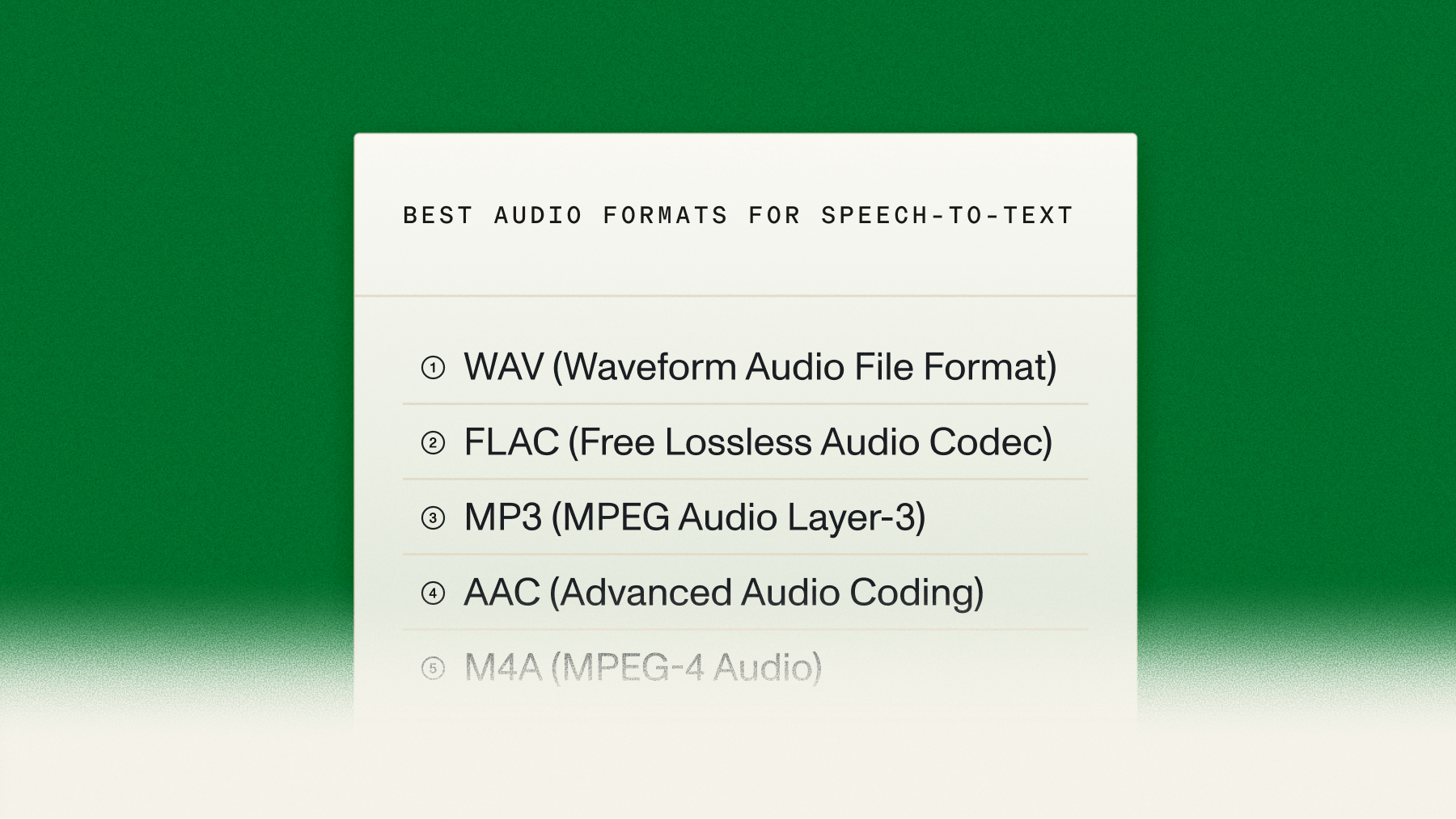

Speaker diarization implementation involves choosing between two primary paths: open-source libraries or commercial APIs. This decision impacts development time, accuracy, maintenance requirements, and total cost of ownership.

Open-source libraries

Open-source diarization tools provide complete control over the implementation. Libraries like pyannote.audio and NVIDIA NeMo offer state-of-the-art models you can customize for specific use cases.

Benefits of open-source:

- Full customization of model architectures and parameters

- No per-usage costs for processing

- Complete data privacy—audio never leaves your infrastructure

- Ability to fine-tune models on domain-specific data

Challenges to consider:

- Significant engineering effort for deployment and maintenance

- Infrastructure costs for GPU servers and scaling

- Ongoing work to incorporate latest research improvements

- Complex optimization for production-level accuracy

Commercial Voice AI APIs

API-based solutions like AssemblyAI provide production-ready speaker diarization through simple API calls. These services handle the complexity of model optimization, infrastructure scaling, and continuous improvements.

Benefits of commercial APIs:

- Immediate access to state-of-the-art models

- No infrastructure or maintenance overhead

- Automatic improvements as new models are released

- Enterprise-grade reliability and support

Considerations for APIs:

- Usage-based pricing model

- Less customization flexibility

- Audio data processed on provider's infrastructure, which is a key consideration, as a recent industry survey found that over 30% of product leaders cite data privacy and security as a significant challenge.

Making the right choice

Decision framework: Use these criteria to evaluate which approach matches your specific requirements:

Many successful products started with APIs to validate their use case, then evaluated building in-house once they understood their specific requirements and scale. This approach minimizes initial investment while maximizing learning.

Industry applications

These applications are already delivering measurable results for organizations across industries. For example, hiring intelligence platform Screenloop uses AI-powered transcription and speaker diarization to help its customers realize a 90% reduction in time spent on manual hiring and interview tasks, 20% reduced time to hire, and improved training effectiveness while reducing hiring bias.

New for speaker diarization

Speaker diarization faces several technical limitations:

Real-time processing: Speaker diarization is fully supported in real-time on single-channel audio streams. By setting the speaker_labels: true parameter in your WebSocket connection, you can receive speaker-labeled transcripts with low latency, making it ideal for live captioning, voice agents, and real-time meeting analysis. This capability is available for all of AssemblyAI's streaming models.

Future outlook: Recent survey data shows 80% of product leaders predict real-time capabilities will be the most transformative development in speech understanding.

There are also several constraints that limit the accuracy of modern models:

- Speaker talk time

- Conversational pace

Minimum speaker requirements:

- 15+ seconds: Unreliable detection, may assign as unknown

- 30+ seconds: Reliable speaker identification

- <15 seconds: Often merged with dominant speakers

Conversational pace significantly impacts diarization accuracy. Well-structured conversations work best for accurate speaker identification:

- Optimal conditions: Clear turn-taking, minimal interruptions, low background noise (like podcast interviews)

- Challenging conditions: Overlapping speech, frequent interruptions, significant background noise

Crosstalk challenges: Overlapping speech significantly reduces accuracy compared to structured dialogues.

- Error rates: Research confirms error rates can exceed 50% in conversational scenarios

- System responses: Less advanced systems may merge speakers, miss overlapping speech, or create imaginary speakers

Different providers have varying speaker limits. AssemblyAI supports up to 10 speakers by default, but you can use the speaker_options parameter to specify a different range for your specific use case, though accuracy may decrease with a higher number of speakers. Research findings confirm this, showing word diarization error rates can jump from 2.68% in two-speaker scenarios to 11.65% with three speakers.

Recent improvements have reduced errors when speakers have similar voices.

Recent improvements: Modern Speech-to-Text APIs are overcoming traditional limitations through advanced research.

Performance gains include:

- 30% accuracy improvement in noisy environments

- Documented improvements across challenging audio conditions

AssemblyAI's new speaker diarization model delivers significant improvements in real-world audio conditions:

- 20.4% error rate in noisy, far-field scenarios (down from 29.1%) - a 30% improvement for challenging acoustic environments where traditional systems fail

- Accurate speaker identification for 250ms segments - enabling tracking of single words and brief acknowledgments

- 57% improvement in mid-length reverberant audio - better performance in conference rooms and large spaces

- Automatic deployment - All customers benefit immediately with no code changes required

These improvements specifically target the challenging scenarios that break existing systems: conference room recordings with ambient noise, multi-speaker discussions with overlapping voices, and remote meetings with poor audio quality. Learn more about implementation options.

Getting started with speaker diarization

Speaker diarization transforms unstructured audio into organized, analyzable data. By accurately identifying who said what, you can power more intelligent features in your applications, from meeting summaries to call center analytics.

The best way to understand the impact of diarization is to test it on your own audio files. You can start building for free with our API to see how our models perform on the real-world audio your application will handle. Try our API for free to get your API key and run your first diarization request in minutes.

Frequently asked questions about speaker diarization

What's the difference between speaker diarization and speaker recognition?

Speaker diarization answers "who spoke when?" by assigning generic labels like "Speaker A" and "Speaker B". Speaker recognition is a broader category of identifying a person from their voice, often requiring pre-enrolled voice profiles. AssemblyAI offers a feature called Speaker Identification that builds on diarization. It uses conversational context to intelligently assign meaningful names or roles (e.g., "John Smith" or "Agent") to the generic speaker labels without needing pre-enrolled voiceprints.

How many speakers can speaker diarization detect?

Most production systems handle 2-10 speakers reliably, with some supporting up to 30+ speakers depending on audio quality and provider capabilities.

Which languages support speaker diarization?

AssemblyAI's Universal model supports speaker diarization across 99+ languages. For the highest accuracy in English, Spanish, Portuguese, French, German, and Italian, our Universal-3-Pro model is recommended.

Can speaker diarization work in real-time?

Yes, real-time speaker diarization is fully supported. Using AssemblyAI's streaming models, you can enable speaker labels for live audio streams to identify who is speaking as the conversation happens. While asynchronous processing can offer the highest possible accuracy by analyzing the entire file, real-time diarization provides excellent performance for live applications.

How accurate is speaker diarization?

Accuracy is measured by Diarization Error Rate (DER), where lower percentages indicate better performance. Leading systems achieve low DERs with 30% relative improvements in challenging conditions.

Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat. Duis aute irure dolor in reprehenderit in voluptate velit esse cillum dolore eu fugiat nulla pariatur.

.png)