Top APIs and models for real-time speech recognition and transcription in 2026

Compare the best real-time speech recognition APIs and models for 2026. Evaluate latency, accuracy, and integration complexity across cloud APIs and open-source solutions.

Real-time speech recognition is a key technology in a voice tech market that market data projects will reach nearly $50 billion by 2029. This guide compares the leading real-time speech recognition APIs and open-source models available in 2026, evaluating latency, accuracy, language support, and integration complexity so you can make a confident architecture decision before writing a line of code.

Real-time speech recognition converts live audio to text with sub-second latency through persistent WebSocket connections. Developers choose between cloud APIs (300–500ms response times, minimal ops overhead) and open-source models (more control, significantly more engineering effort). We cover both.

What is real-time speech recognition?

A real-time speech recognition API converts live audio into text with sub-second latency by streaming audio chunks over a persistent WebSocket connection—processing speech as it's captured rather than waiting for a complete audio file. This makes it the foundational component for any application that requires immediate transcription: voice agents, live captions, voice commands, and real-time analytics.

For a voice agent to hold a natural conversation, it can't wait for the user to finish speaking before it starts processing—real-time speech recognition is what makes that fluid back-and-forth possible.

How real-time speech recognition works

Real-time speech recognition uses a persistent WebSocket connection between your application and the transcription server.

Your application streams audio chunks over this connection as they are captured, and the server sends back a stream of Turn Events containing both partial and final transcripts:

- Partial transcripts: These are running segments of the transcript. They are more stable than the word-by-word partials of older models, but they can still be revised in the final transcript as the model gains more context.

- Final transcripts: When the model detects an endpoint (like a pause after a complete sentence), it emits a final, immutable transcript for that turn.

Efficient endpointing—detecting when a speaker is genuinely done versus pausing to think—is what minimizes perceived latency and determines how quickly the system can trigger a downstream response.
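To make endpointing concrete, here is a minimal sketch of the naive baseline: a silence-threshold endpointer that declares end of turn once the RMS energy of 16-bit PCM frames stays below a threshold for a fixed duration. The `SilenceEndpointer` class, the 500 RMS threshold, and the 700ms pause length are hypothetical choices. This is not any provider's actual turn-detection method; it illustrates the simple approach whose premature cutoffs (a speaker pausing to think) better endpointing models are designed to avoid.

```python
import math
import struct


class SilenceEndpointer:
    """Hypothetical silence-threshold endpointer for 16-bit PCM mono frames."""

    def __init__(self, sample_rate=16000, rms_threshold=500.0, silence_ms=700):
        self.sample_rate = sample_rate
        self.rms_threshold = rms_threshold  # assumed energy floor that counts as speech
        self.silence_ms = silence_ms        # assumed pause length that ends a turn
        self.silent_ms = 0.0
        self.in_speech = False

    @staticmethod
    def rms(frame: bytes) -> float:
        # Unpack little-endian signed 16-bit samples and compute root-mean-square energy.
        count = len(frame) // 2
        if count == 0:
            return 0.0
        samples = struct.unpack(f"<{count}h", frame[: count * 2])
        return math.sqrt(sum(s * s for s in samples) / count)

    def process(self, frame: bytes) -> bool:
        """Feed one frame; return True when an endpoint (end of turn) is detected."""
        frame_ms = (len(frame) // 2) * 1000 / self.sample_rate
        if self.rms(frame) >= self.rms_threshold:
            self.in_speech = True   # speech resets the silence timer
            self.silent_ms = 0.0
            return False
        if not self.in_speech:
            return False            # still waiting for speech to start
        self.silent_ms += frame_ms
        if self.silent_ms >= self.silence_ms:
            self.in_speech = False  # turn is over; wait for the next one
            self.silent_ms = 0.0
            return True
        return False
```

A fixed silence window is exactly what makes this approach brittle: set it short and thoughtful pauses get cut off, set it long and every response is delayed by the full window.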

Core challenges in real-time speech recognition

Real-time speech recognition has four core technical challenges that should directly shape how you evaluate providers:

- Background Noise: Models must distinguish speech from ambient noise, which can be difficult in real-world environments like call centers or public spaces; in fact, an industry report identifies transcription quality in noisy environments as a primary pain point for teams.

- Accents and Dialects: Performance can vary significantly across different accents, dialects, and languages. Thorough testing with representative audio is crucial.

- Speaker Diarization: Identifying who said what in a multi-speaker conversation is complex in a streaming context, as the model has limited information to differentiate voices.

- Cost Management: Streaming transcription is often priced by the second, so managing WebSocket connections efficiently (for example, closing idle sessions) is important to control costs, especially at scale. Cost analyses also show that total cost of ownership includes licensing, implementation, and support, not just usage fees.

Real-world use cases

Real-time speech recognition powers applications where immediate feedback determines user experience:

AI voice agents

Natural conversation requires sub-500ms initial response times. Companies build on AssemblyAI's streaming transcription specifically because latency at this threshold is what separates a voice agent that feels natural from one that feels robotic.

AssemblyAI's Voice Agent API handles the full STT → LLM → TTS pipeline in a single WebSocket connection at $4.50/hr—so you're not managing three separate providers to hit that latency target.

Live captioning

Captions can tolerate 1–3 second delays, but lower latency directly improves accessibility in meetings, broadcasts, and live events.

Voice commands

Interactive control systems need responses under one second. Beyond that, the interface feels broken.

Key criteria for selecting a real-time speech recognition API

Choosing the right solution depends on your specific application requirements. Here's what actually matters when evaluating these services:

Latency requirements drive everything

Real-time applications demand different latency thresholds, and this drives your entire architecture. Voice agent applications targeting natural conversation need sub-500ms initial response times to maintain conversational flow. Live captioning can tolerate 1-3 second delays, though users notice anything beyond that.

The 800ms end-to-end budget is the practical ceiling for natural voice-to-voice interaction—that's speech recognition, LLM processing, and TTS synthesis combined. Research shows 95% of people have been frustrated with voice agents, and latency is a primary driver.
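The budget arithmetic is easy to encode as a pre-flight check. The sketch below sums per-stage latencies against the 800ms ceiling described above; the `StageLatency` and `check_budget` names and the per-stage millisecond figures are hypothetical placeholders, not measurements of any provider.

```python
from dataclasses import dataclass

VOICE_TO_VOICE_BUDGET_MS = 800  # practical ceiling for natural interaction, per the text above


@dataclass
class StageLatency:
    name: str
    ms: float


def check_budget(stages, budget_ms=VOICE_TO_VOICE_BUDGET_MS):
    """Return (total_ms, headroom_ms, within_budget) for a pipeline of stages."""
    total = sum(stage.ms for stage in stages)
    headroom = budget_ms - total
    return total, headroom, headroom >= 0


# Hypothetical per-stage numbers; replace them with your own measurements.
pipeline = [
    StageLatency("stt_final_transcript", 300),
    StageLatency("llm_first_token", 350),
    StageLatency("tts_first_audio", 200),
]
total, headroom, ok = check_budget(pipeline)
print(f"total={total}ms headroom={headroom}ms within_budget={ok}")
# prints: total=850ms headroom=-50ms within_budget=False
```

With these placeholder numbers the pipeline overshoots by 50ms, which tells you to shave time from one stage rather than tuning all three.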

A 95% accurate system that responds in 300ms often delivers a better user experience than a 98% accurate system that takes 2 seconds: one analysis of voice agents identifies a 300ms response threshold as the point beyond which conversations start to feel unnatural. Optimize for the latency threshold your use case requires, then maximize accuracy within it.

Accuracy vs. speed trade-offs are real

Independent benchmarks show AssemblyAI and AWS Transcribe lead on real-time accuracy when formatting requirements are relaxed. Applications requiring proper punctuation and capitalization see different rankings—test with formatted output enabled.
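Because formatting changes the rankings, it helps to compute word error rate (WER) both on raw output and on normalized text. A minimal sketch, assuming a simple normalization (lowercasing and stripping punctuation) that may differ from what any particular benchmark uses; `normalize` and `wer` are hypothetical helper names:

```python
import re


def normalize(text: str) -> str:
    # Assumed normalization: lowercase, replace punctuation with spaces, collapse whitespace.
    text = re.sub(r"[^\w\s']", " ", text.lower())
    return " ".join(text.split())


def wer(reference: str, hypothesis: str, relax_formatting: bool = False) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    if relax_formatting:
        reference, hypothesis = normalize(reference), normalize(hypothesis)
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # Word-level Levenshtein distance, keeping only the previous DP row.
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr[j] = min(prev[j] + 1,         # deletion
                          curr[j - 1] + 1,     # insertion
                          prev[j - 1] + cost)  # substitution or match
        prev = curr
    return prev[-1] / len(ref)


ref = "Call me at 5 PM, please."
hyp = "call me at 5 pm please"
print(wer(ref, hyp), wer(ref, hyp, relax_formatting=True))
```

Here the strict score counts three formatting-only differences ("Call", "PM,", "please.") as errors, while the relaxed score treats the transcripts as identical. Running both tells you how much of a provider's error rate comes from formatting.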

Language support complexity

Real-time multilingual support remains technically challenging, especially when handling code-switching (flipping between languages mid-sentence), which research shows is incredibly common in multilingual communities. Most solutions excel in English but show degraded performance in other languages. Marketing claims about "100+ languages supported" rarely translate to production-ready performance across all those languages.

If you're building for global users, test your target languages extensively. Accuracy can drop significantly for non-English languages, particularly with technical vocabulary or regional accents.

Integration complexity matters more than you think

Cloud APIs offer faster time-to-market but introduce external dependencies. Open-source models provide control but require significant engineering resources. Most teams underestimate the engineering effort required to get open-source solutions production-ready, and industry research highlights drawbacks like extensive customization needs, lack of dedicated support, and the burden of managing security and scalability.

Consider your team's expertise and infrastructure constraints when evaluating options. A slightly less accurate cloud API that your team can integrate in days often beats a more accurate open-source model that takes months to deploy reliably.

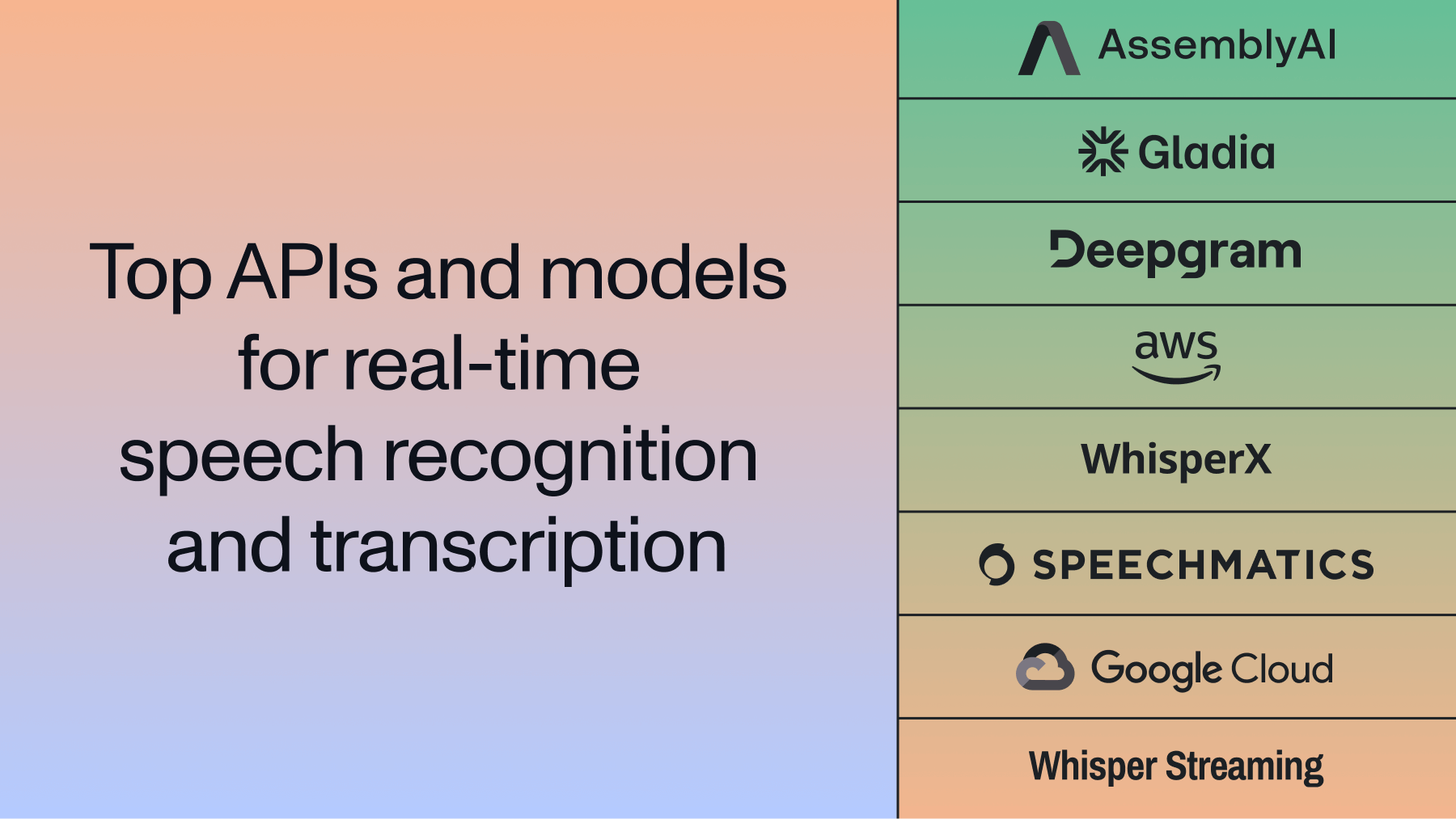

Quick comparison: top real-time speech recognition APIs and models

Here's how the leading solutions stack up across key performance criteria:

How to connect and stream audio to a real-time speech recognition API

Three things break most streaming integrations: wrong audio format, mishandled transcript events, and no reconnection logic. Here's how to get all three right.

Configure your audio stream

Real-time speech recognition models expect raw, uncompressed audio. Send MP3 or AAC over a WebSocket and you'll get errors or garbled transcripts. For Universal-3 Pro Streaming, use these specifications: 16-bit PCM (PCM16) encoding, a 16 kHz sample rate, and a single (mono) channel.

When capturing audio from a browser using getUserMedia, you'll typically need to downsample the audio from the browser's native rate (often 44.1kHz or 48kHz) to 16kHz before sending it through the WebSocket.
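If you capture audio in Python instead, or want to see what the conversion involves, here is a minimal sketch that converts float samples in [-1.0, 1.0] at the capture rate into 16 kHz little-endian PCM16 bytes using linear interpolation. The `to_pcm16_16k` name is hypothetical, and plain linear interpolation skips the low-pass (anti-aliasing) filter a production resampler would apply, so treat it as illustrative only.

```python
import struct


def to_pcm16_16k(samples, source_rate, target_rate=16000):
    """Resample float samples in [-1.0, 1.0] by linear interpolation; return PCM16 LE bytes.

    Illustrative only: no anti-aliasing low-pass filter is applied before downsampling.
    """
    out_len = len(samples) * target_rate // source_rate
    out = []
    for n in range(out_len):
        # Position of output sample n in the source signal, split into index and fraction.
        i, rem = divmod(n * source_rate, target_rate)
        frac = rem / target_rate
        nxt = samples[i + 1] if i + 1 < len(samples) else samples[i]
        value = samples[i] + (nxt - samples[i]) * frac
        value = max(-1.0, min(1.0, value))  # clip to the valid range before scaling
        out.append(round(value * 32767))
    return struct.pack(f"<{len(out)}h", *out)
```

For 48 kHz capture the ratio is exactly 3, so one second of audio (48,000 samples) becomes 16,000 samples, or 32,000 bytes of PCM16.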

Handle partial and final transcripts

Streaming APIs emit two event types: partial transcripts (the model's running best guess, may change) and final transcripts (immutable—endpoint detected, text locked). Here's a minimal Python implementation using the AssemblyAI SDK:

```python
import assemblyai as aai

aai.settings.api_key = "YOUR_API_KEY"

def on_open(session_opened: aai.RealtimeSessionOpened):
    # Called once the WebSocket session is established
    print("WebSocket connection opened")

def on_data(transcript: aai.RealtimeTranscript):
    # Skip empty events (for example, during silence)
    if not transcript.text:
        return
    if isinstance(transcript, aai.RealtimeFinalTranscript):
        # Final transcripts are immutable: print them on their own line
        print(f"Final: {transcript.text}")
    else:
        # Partials may still change: overwrite the current line in place
        print(f"Partial: {transcript.text}", end="\r")

def on_error(error: aai.RealtimeError):
    print(f"Error: {error}")

def on_close():
    print("WebSocket connection closed")

transcriber = aai.RealtimeTranscriber(
    sample_rate=16000,  # must match the audio you stream (16 kHz PCM16)
    on_data=on_data,
    on_error=on_error,
    on_open=on_open,
    on_close=on_close,
)

transcriber.connect()

# Stream your PCM16 audio data here
# transcriber.stream(audio_data)
# transcriber.close()
```

Handle errors and reconnections

WebSocket connections drop in production—implement exponential backoff to reconnect without losing conversation context:

```python
import time

def reconnect_with_backoff(transcriber, max_retries=5):
    """Try to reconnect, doubling the wait after each failed attempt."""
    retry_delay = 1  # seconds
    for attempt in range(max_retries):
        try:
            transcriber.connect()
            return True
        except Exception as e:
            print(f"Reconnection attempt {attempt + 1} failed: {e}")
            # No need to sleep after the final failed attempt
            if attempt < max_retries - 1:
                time.sleep(retry_delay)
                retry_delay *= 2  # exponential backoff: 1s, 2s, 4s, ...
    return False
```

AssemblyAI's Voice Agent API preserves session context for up to 30 seconds on reconnect. Because the full STT → LLM → TTS pipeline runs over a single WebSocket at $4.50/hr, there's only one connection to recover.
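Reconnection logic recovers the socket, but audio captured while the socket is down is lost unless you hold onto it. A minimal sketch, assuming you want to replay at most a few seconds of recent PCM16 audio after reconnecting; the `ReconnectBuffer` class and the 5-second cap are hypothetical choices, and whether replaying delayed audio is acceptable depends on your provider and latency goals.

```python
from collections import deque


class ReconnectBuffer:
    """Hypothetical bounded buffer for PCM16 mono chunks captured while disconnected."""

    def __init__(self, sample_rate=16000, max_seconds=5.0):
        bytes_per_second = sample_rate * 2  # 16-bit mono = 2 bytes per sample
        self.max_bytes = int(bytes_per_second * max_seconds)
        self.chunks = deque()
        self.size = 0

    def add(self, chunk: bytes) -> None:
        self.chunks.append(chunk)
        self.size += len(chunk)
        # Drop the oldest audio once over the cap, keeping the most recent speech.
        while self.size > self.max_bytes and self.chunks:
            self.size -= len(self.chunks.popleft())

    def drain(self):
        """Yield buffered chunks oldest-first, emptying the buffer."""
        while self.chunks:
            chunk = self.chunks.popleft()
            self.size -= len(chunk)
            yield chunk
```

While disconnected, call `add()` for each captured chunk; once reconnected, iterate over `drain()` and send each chunk (for example with `transcriber.stream(chunk)`) before resuming live audio.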

Cloud API solutions

AssemblyAI Universal-3 Pro Streaming

Universal-3 Pro Streaming is AssemblyAI's latest real-time transcription model, purpose-built for voice agent deployments. It delivers ~150ms P50 latency after VAD endpoint detection and achieves a 6.3% mean word error rate across English domains—the lowest among major providers in independent benchmarks.

Consider a support call that starts with account verification and shifts into technical troubleshooting: Universal-3 Pro Streaming can keep transcription accurate at every phase without restarting the session, because it accepts natural-language prompts and domain-specific key terms that can be updated turn-by-turn over the same WebSocket connection.

Key capabilities:

- Real-time prompting (beta): Guide transcription behavior with natural-language prompts mid-session—control disfluency output, speaker role labels, formatting, and code-switching between English, Spanish, German, French, Portuguese, and Italian

- Dynamic key term prompting: Boost recognition of up to 100 domain terms (product names, medications, policy IDs) updated on every turn, not just at session start

- Streaming speaker diarization: Track and label speakers inline as audio arrives—no post-processing pipeline required

- Entity accuracy: 16.7% average missed entity rate, outperforming Deepgram Nova-3 (25.2%), OpenAI GPT-4o Transcribe (23.3%), and Microsoft Azure (25.1%) on names, emails, phone numbers, and credit card numbers

- Unlimited concurrency: No rate limits or upfront commitments—scale from one call to millions on pay-as-you-go pricing

- One-line integrations: Native support for LiveKit, Pipecat, Twilio, and Daily

The model also addresses a persistent production pain point: turn detection. Rather than relying solely on silence thresholds, Universal-3 Pro Streaming uses audio-contextual signals—tonality, pacing, and speech patterns—to determine when a speaker is done. This reduces premature cutoffs and hallucinated words that plague other streaming models.

Pricing: $0.45/hr base rate. Add-ons include streaming diarization (+$0.12/hr), keyterm prompting (+$0.04/hr), and real-time prompting (beta, +$0.05/hr).

Best for: Production voice agents that need accurate entity recognition, real-time speaker attribution, and the ability to adapt transcription behavior mid-conversation.

If you're already using AssemblyAI's streaming transcription, upgrading is a one-line change: update the speech_model parameter to u3-rt-pro.
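Because add-ons are billed per hour on top of the base rate, the effective rate is a simple sum. A minimal sketch using the rates listed above (check current pricing before relying on them); `hourly_rate`, `monthly_cost`, and the add-on keys are hypothetical names:

```python
from decimal import Decimal

BASE_RATE = Decimal("0.45")  # $/hr, from the pricing listed above
ADD_ONS = {                   # $/hr surcharges, from the pricing listed above
    "diarization": Decimal("0.12"),
    "keyterms": Decimal("0.04"),
    "prompting": Decimal("0.05"),  # beta
}


def hourly_rate(add_ons=()):
    """Effective $/hr for the base model plus the selected add-ons."""
    return BASE_RATE + sum((ADD_ONS[name] for name in add_ons), Decimal("0"))


def monthly_cost(hours, add_ons=()):
    """Cost for a month of streamed audio; Decimal avoids float rounding on money."""
    return (hourly_rate(add_ons) * Decimal(str(hours))).quantize(Decimal("0.01"))


print(monthly_cost(1000, ["diarization", "keyterms"]))  # prints 610.00 (1,000 hrs at $0.61/hr)
```

To compare against per-minute pricing such as AWS Transcribe's $0.024/min, multiply by 60: that works out to $1.44/hr.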

Gladia STT API

Gladia provides a WebSocket-based real-time speech recognition API optimized for low-latency (sub-100 ms for partials) streaming applications. It supports multilingual recognition (100+ languages), with code-switching and configurable endpointing for production voice agents.

Best for: Real-time streaming and multilingual environments with code-switching handling, ensuring accurate speaker separation even when languages shift mid-sentence.

Deepgram Nova-2

Deepgram's Nova-2 model offers real-time capabilities. The platform reports improvements in word error rates and offers some customization options for domain-specific vocabulary.

Best for: Applications requiring specialized domain adaptation where English-only solutions won't work.

OpenAI Realtime API

OpenAI's Realtime API is a multimodal model that handles audio alongside text, images, and video—it's not purpose-built for speech workflows. That means turn detection, entity recognition, and accent handling aren't optimized specifically for conversation quality.

Best for: Teams already in the OpenAI ecosystem who want a single provider and can accept higher cost and lower speech-specific accuracy.

AWS Transcribe

Amazon's Transcribe service provides solid real-time performance within the AWS ecosystem. Pricing starts at $0.024 per minute with extensive language support (100+ languages) and strong enterprise features.

Perfect for applications already using AWS infrastructure or requiring extensive language support. It's not the best at anything specific, but it works reliably and integrates well with other AWS services.

Google Cloud Speech-to-Text

Google's Speech-to-Text API offers broad language support (125+ languages) but consistently ranks last in independent benchmarks for real-time accuracy. The service works adequately for basic transcription needs but struggles with challenging audio conditions.

Choose this for legacy applications or projects requiring Google Cloud integration where accuracy isn't critical. We wouldn't recommend it for new projects unless you're already locked into Google's ecosystem.

Microsoft Azure Speech Services

Azure's Speech Services provides moderate performance with strong integration into Microsoft's ecosystem. The service offers reasonable accuracy and latency for most applications but doesn't excel in any particular area.

Best for organizations heavily invested in Microsoft technologies or requiring specific compliance features. It's a middle-of-the-road option that works without being remarkable.

Open-source models

WhisperX

WhisperX extends OpenAI's Whisper with faster inference, achieving a 4x speed improvement over the base model. It adds word-level timestamps and speaker diarization while maintaining Whisper's accuracy levels.

Advantages: 4x speed improvement over base Whisper, word-level timestamps and speaker diarization, 99+ language support, and full control over deployment and data.

Challenges: Significant engineering effort for production deployment, variable latency (380-520ms in optimized setups), and limited real-time streaming capabilities without additional engineering. Don't underestimate the engineering effort required, as recent analysis suggests most open-source options require weeks to months of development to become production-ready.

Whisper Streaming

Whisper Streaming variants attempt to create real-time versions of OpenAI's Whisper model, which, according to the original research, was trained on 680,000 hours of web audio. While promising for research applications, production deployment faces significant challenges.

Advantages: Proven Whisper architecture, extensive language support (99+ languages), no API costs beyond infrastructure, and complete control over model and data.

Challenges: 1-5s latency in many implementations, requires extensive engineering work for production optimization, and performance highly dependent on hardware configuration. We wouldn't recommend it for production applications unless you have a dedicated ML engineering team.
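One approach open-source Whisper streaming wrappers use to turn repeated transcriptions of a growing audio window into stable output is a local-agreement policy: re-transcribe the buffer as audio arrives, and only commit the words on which two consecutive hypotheses agree. The sketch below shows just that commit rule with a hypothetical `LocalAgreement` class. It assumes word-level hypotheses that each cover all audio so far and keep the already-committed words as their prefix, and it omits the buffer trimming and timestamp alignment a real implementation needs.

```python
def longest_common_prefix(a, b):
    """Longest shared leading run of two word lists."""
    n = 0
    while n < len(a) and n < len(b) and a[n] == b[n]:
        n += 1
    return a[:n]


class LocalAgreement:
    """Commit words only once two consecutive hypotheses agree on them."""

    def __init__(self):
        self.committed = []  # stable words, never revised
        self.previous = []   # last full hypothesis

    def update(self, hypothesis_words):
        """Feed the newest hypothesis for all audio so far; return newly committed words."""
        start = len(self.committed)
        # Compare only the uncommitted tails of the previous and current hypotheses.
        agreed = longest_common_prefix(self.previous[start:], hypothesis_words[start:])
        self.committed.extend(agreed)
        self.previous = list(hypothesis_words)
        return agreed
```

The trade-off is visible in the design: a word is only committed after it survives one extra re-transcription, which is part of why these variants land in the 1-5s latency range.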

Which real-time speech recognition API should you choose?

Here's the honest breakdown by use case:

For full voice agent builds: AssemblyAI's Voice Agent API replaces separate STT, LLM, and TTS providers with a single WebSocket at $4.50/hr. It's built on Universal-3 Pro—lowest word error rate among major providers, #1 on the Hugging Face Open ASR Leaderboard—with turn detection, interruption handling, and entity recognition included.

For streaming transcription only: Universal-3 Pro Streaming delivers ~150ms P50 latency and a 16.7% missed entity rate (lowest among major providers). Unlimited concurrency, no upfront commits, and a 99.95% uptime SLA make it suitable for scaling from pilot to production.

For AWS-integrated applications: AWS Transcribe provides solid performance within the AWS ecosystem, particularly for applications already using other AWS services. It's not the best at anything, but it integrates well.

For self-hosted deployment with engineering resources: WhisperX offers a solid open-source option, providing control over deployment and data while maintaining reasonable accuracy levels. Consider this alongside other free speech recognition options if budget constraints are a primary concern.

For multilingual real-time voice applications: Gladia offers a competitive real-time streaming API with sub-300ms latency and 100+ language support. It's particularly well-suited for meeting assistants and customer support agents handling multiple languages.

Proof-of-concept testing methodology

Before committing to a solution, test with your specific use case. Most developers skip this step and regret it later:

- Evaluate with representative audio samples that match your application's conditions

- Test latency under expected load to ensure performance scales

- Measure accuracy with domain-specific terminology relevant to your application

- Assess integration complexity with your existing technology stack

- Validate pricing models against projected usage patterns

Don't trust benchmarks alone. Test with your actual use case. Performance varies significantly based on audio quality, speaker characteristics, and domain-specific terminology.
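For the latency step, report percentiles rather than averages, since tail latency is what users notice. A minimal sketch, assuming you have already collected per-utterance latencies in milliseconds from a load test (for example, time from end of speech to final transcript); `latency_report` is a hypothetical helper and the sample values are placeholders:

```python
import statistics


def latency_report(latencies_ms):
    """Return P50/P95/P99 latency for a list of millisecond samples."""
    if len(latencies_ms) < 2:
        raise ValueError("need at least two samples")
    # quantiles(n=100) returns the 99 cut points for percentiles 1..99.
    cuts = statistics.quantiles(latencies_ms, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "p99": cuts[98]}


samples = [float(ms) for ms in range(100, 1100, 10)]  # placeholder: 100 samples, 100..1090 ms
print(latency_report(samples))
```

Compare P95 and P99 against your use case's threshold, not just P50: a provider with a good median can still produce a noticeable share of slow turns under load.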

Ready to implement real-time speech recognition? Explore these step-by-step voice agent examples or try AssemblyAI's real-time transcription API free.

Frequently asked questions about real-time speech recognition APIs

What audio format does a real-time speech recognition API expect, and how do I configure it correctly?

Real-time speech recognition APIs expect 16-bit PCM audio at 16 kHz mono—compressed formats like MP3 or AAC will cause errors. If you're capturing from a browser, downsample from the native rate (44.1 kHz or 48 kHz) to 16 kHz before streaming.
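If you're testing with recorded files, you can check that a WAV file already matches this format before streaming it. A minimal sketch using Python's standard `wave` module; the `is_streaming_ready` name is hypothetical:

```python
import io
import wave


def is_streaming_ready(wav_source, sample_rate=16000):
    """Return True if a WAV file is uncompressed 16-bit PCM, mono, at the expected rate."""
    with wave.open(wav_source, "rb") as wav:
        return (
            wav.getsampwidth() == 2             # 16-bit samples
            and wav.getnchannels() == 1         # mono
            and wav.getframerate() == sample_rate
            and wav.getcomptype() == "NONE"     # uncompressed PCM
        )


# Build a one-second silent 16 kHz mono PCM16 file in memory to demonstrate.
buffer = io.BytesIO()
with wave.open(buffer, "wb") as out:
    out.setnchannels(1)
    out.setsampwidth(2)
    out.setframerate(16000)
    out.writeframes(b"\x00\x00" * 16000)
buffer.seek(0)
print(is_streaming_ready(buffer))  # prints True
```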

How do I handle WebSocket reconnections without losing transcript context?

Implement exponential backoff for automatic reconnection attempts. AssemblyAI preserves session context for up to 30 seconds—reconnect within that window and the conversation continues without the user repeating themselves.
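To make sure retries all land inside a fixed preservation window, such as the 30 seconds described above, you can plan the delays up front. A minimal sketch that generates exponential backoff delays with full jitter and stops before the cumulative wait would exceed the window; `backoff_schedule`, the 0.5s base delay, and the 8s cap are hypothetical choices:

```python
import random


def backoff_schedule(window_s=30.0, base_s=0.5, cap_s=8.0, max_attempts=20, rng=None):
    """Jittered exponential backoff delays whose total stays within window_s seconds."""
    rng = rng or random.Random()
    delays, elapsed = [], 0.0
    for attempt in range(max_attempts):
        ceiling = min(cap_s, base_s * 2 ** attempt)
        delay = rng.uniform(0, ceiling)  # "full jitter": random wait up to the ceiling
        if elapsed + delay > window_s:
            break  # the next wait would overshoot the preservation window
        delays.append(delay)
        elapsed += delay
    return delays
```

Jitter spreads reconnect attempts from many clients so they don't hit the server in lockstep after an outage. Note that the schedule only counts sleep time; time spent inside each connect attempt also eats into the window, so leave some margin.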

How do I manage partial transcripts to avoid displaying unstable text in my UI?

Keep two separate text buffers: one for committed final transcripts (immutable, appended in order) and one for the active partial (overwritten on every interim event). When a final transcript arrives, move it to the committed buffer and clear the active one.
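The two-buffer approach is small enough to show in full. A minimal sketch, assuming your event handler can tell partial from final transcripts (as the `on_data` callback earlier does); the `TranscriptView` class is hypothetical:

```python
class TranscriptView:
    """Keeps immutable final text separate from the current, revisable partial."""

    def __init__(self):
        self.committed = []  # final transcripts, appended in order
        self.active = ""     # latest partial, overwritten on every interim event

    def on_partial(self, text: str) -> None:
        self.active = text

    def on_final(self, text: str) -> None:
        self.committed.append(text)
        self.active = ""     # the partial has been superseded by the final

    def render(self) -> str:
        """Text to display: committed finals followed by the in-progress partial."""
        return " ".join(self.committed + ([self.active] if self.active else []))


view = TranscriptView()
view.on_partial("hello wor")
view.on_final("Hello world.")
view.on_partial("how are")
print(view.render())
```

In a UI you might style the active segment differently (for example, greyed out) so users can see which text may still change.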

How do I reduce end-to-end latency in a voice agent pipeline?

The fastest path is using a unified pipeline like AssemblyAI's Voice Agent API, which eliminates inter-provider hops with a single WebSocket at ~1 second end-to-end. If building your own stack, reduce audio chunk sizes to 100–250ms, use edge servers, and stream LLM output directly into your TTS provider.
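For mono PCM16, chunk duration maps directly to byte count: bytes = sample_rate × 2 × duration. A minimal sketch that splits a PCM16 buffer into fixed-duration chunks; `chunk_bytes` and `chunk_pcm16` are hypothetical helpers:

```python
def chunk_bytes(duration_ms, sample_rate=16000, bytes_per_sample=2):
    """Bytes in one chunk of mono PCM audio, rounded down to whole samples."""
    samples = sample_rate * duration_ms // 1000
    return samples * bytes_per_sample


def chunk_pcm16(pcm: bytes, duration_ms=100, sample_rate=16000):
    """Yield consecutive chunks of duration_ms (the last chunk may be shorter)."""
    size = chunk_bytes(duration_ms, sample_rate)
    for start in range(0, len(pcm), size):
        yield pcm[start:start + size]
```

At 16 kHz, 100ms is 3,200 bytes and 250ms is 8,000 bytes. Smaller chunks reduce buffering delay at the cost of sending more WebSocket messages.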

How do I evaluate transcription accuracy before committing to a provider?

Record 10–15 minutes of audio that matches your actual production conditions—background noise, accents, and domain-specific terms—then run it through each candidate API and calculate Word Error Rate (WER). Pay particular attention to entity accuracy (names, phone numbers, account numbers), since WER alone won't surface those failures.
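WER treats every word equally, so a single wrong digit in a phone number barely moves it. A minimal sketch of an entity check, assuming you have listed the entities you expect in each reference recording. The normalization (lowercase, letters and digits only) and the `missed_entity_rate` definition are simplified assumptions, not the methodology behind any published benchmark figure.

```python
import re


def _squash(text: str) -> str:
    # Lowercase and keep only letters and digits, so "555-0123" matches "555 0123".
    return re.sub(r"[\W_]+", "", text.lower())


def missed_entity_rate(expected_entities, hypothesis: str) -> float:
    """Fraction of expected entities that do not appear in the hypothesis transcript."""
    if not expected_entities:
        return 0.0
    haystack = _squash(hypothesis)
    missed = [e for e in expected_entities if _squash(e) not in haystack]
    return len(missed) / len(expected_entities)


hyp = "Sure, John Smith, I have your number as 555 0123 and email js at example dot com."
print(missed_entity_rate(["John Smith", "555-0123", "js@example.com"], hyp))
```

In this example the email address counts as missed because it was transcribed in spoken form ("js at example dot com"), a failure that matters for downstream systems but costs almost nothing in WER.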
