Build voice AI apps with LLM Gateway

Learn how LLM Gateway connects speech recognition with LLMs to build intelligent applications. Explore real-world use cases, pricing, and implementation strategies.

The explosion of audio content has created an unprecedented opportunity for businesses. The global voice and speech recognition market is projected to reach $53.67 billion by 2030, yet most organizations still struggle to extract meaningful intelligence from their conversations, meetings, and calls.

Here's the challenge: traditional speech-to-text gives you transcripts, but transcripts alone don't drive business decisions. You need intelligence—summaries that capture key points, answers to specific questions, and structured data that integrates with your existing systems.

This is where Large Language Models (LLMs) transform speech applications from simple transcription tools into intelligent business platforms. And for real-time use cases, voice agents are enabling entirely new categories of human-AI interaction over voice.

In this guide, we'll explore how Voice AI apps are revolutionizing business operations, examine the categories driving real ROI, and show you how to build production-ready applications using AssemblyAI's Speech-to-Text API, LLM Gateway, and Voice Agent API.

Voice AI applications transforming business operations

Voice AI apps are software applications that combine speech recognition, Large Language Models, and speech synthesis to understand conversations, extract business insights, and automate workflows. Some operate asynchronously—processing recorded audio to generate meeting summaries or analyze sales calls. Others operate in real time as voice agents, conducting live conversations with customers, scheduling appointments, or qualifying leads.

The transformation is happening across every industry—76% of teams now embed conversation intelligence in more than half of their customer interactions. Healthcare organizations like PatientNotes.app are automating clinical documentation. Legal firms including JusticeText are revolutionizing how they process depositions and court proceedings.

Voice AI apps deliver measurable business impact through automation. Organizations report 40-60% productivity improvements in audio-intensive workflows, with ROI typically achieved within 2-3 months.

Key business transformations include:

- Real-time intelligence: Customer service teams spot issues before escalation

- Data-driven coaching: Sales managers coach based on actual conversation patterns

- Scalable feedback analysis: Product teams understand user feedback at enterprise scale

- Automated voice interactions: Voice agents handle routine calls—appointment scheduling, lead qualification, and tier-1 support—around the clock

Categories of Voice AI apps driving ROI

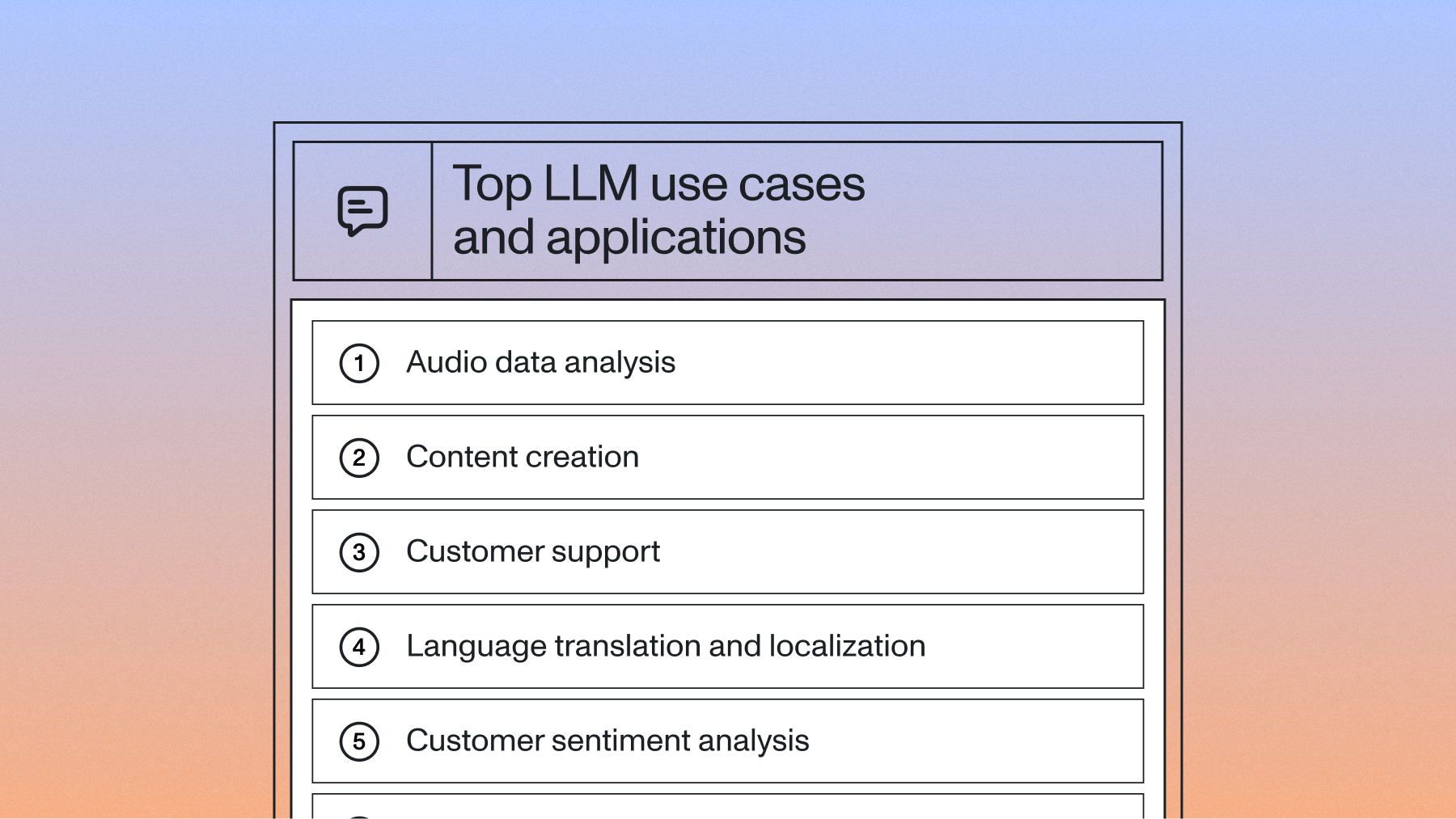

While the possibilities for Voice AI are vast, the most successful implementations fall into several key categories, each addressing specific business challenges with measurable impact.

Meeting intelligence platforms

Companies like Circleback AI and Supernormal are transforming meeting workflows, with a 65% reduction in follow-up time and a 30% increase in action item completion rates.

Business impact includes:

- Improved accountability: Automatic action item tracking and assignment

- Faster decision-making: Instantly searchable discussion points

- Enhanced knowledge transfer: Organizational memory of all meetings

Sales intelligence solutions

Sales intelligence represents one of the fastest-growing Voice AI categories. Platforms built by companies like Clari analyze every customer interaction to provide coaching insights, track deal progression, and identify at-risk opportunities.

These applications excel at pattern recognition. They identify which talk tracks lead to successful outcomes, which objections frequently derail deals, and which competitive mentions require immediate attention. Sales leaders gain visibility that was previously impossible—understanding not just what deals are closing, but why.

Customer service automation

Contact centers are experiencing a Voice AI revolution. Companies like CallSource and Observe.ai are deploying Voice AI to automatically categorize support tickets, identify customer sentiment, and surface quality assurance insights.

The transformation goes beyond operational efficiency. Voice AI enables proactive service—identifying frustrated customers before they churn, spotting product issues before they become widespread, and ensuring consistent service quality across thousands of agents.

Healthcare documentation systems

Healthcare providers face enormous documentation burdens. Voice AI applications from companies like PatientNotes.app, along with other clinical documentation platforms, are automating the creation of SOAP notes, extracting diagnostic codes, and ensuring comprehensive record-keeping without sacrificing patient interaction time.

Real-time voice agents

A newer category is emerging: real-time voice agents that conduct live, two-way conversations over voice. These aren't the robotic IVR menus of the past—they're AI-powered agents that listen, reason, and respond naturally.

Use cases gaining traction include:

- Customer service agents: Handle inbound support calls 24/7, resolving common issues without human intervention

- Appointment scheduling: Book, reschedule, and confirm appointments through natural conversation

- Lead qualification: Engage inbound leads in real time, ask qualifying questions, and route high-intent prospects to sales reps

- Technical support: Walk callers through troubleshooting steps, collect diagnostic information, and escalate when needed

What separates this wave from earlier voice automation is the accuracy of the underlying speech recognition and the reasoning capability of LLMs. In a survey of 450+ voice agent builders, 76% cited speech-to-text accuracy as a non-negotiable requirement—ahead of cost, latency, and every other factor. When a voice agent can correctly hear a caller's account number, understand a nuanced request, and respond in context, the experience feels genuinely conversational—not scripted.

How Voice AI apps work: architecture and components

Voice AI applications follow two primary architecture patterns, each suited to different use cases. Understanding these patterns helps you choose the right approach and avoid over-engineering your solution.

Cascade architecture: STT → LLM → TTS

The cascade architecture chains three discrete components in sequence: a speech-to-text model converts audio to text, an LLM processes that text and generates a response, and a text-to-speech model converts the response back to audio.

This is the dominant pattern for both asynchronous and real-time Voice AI apps. For asynchronous workflows—like post-call analytics or meeting summarization—you only need the first two stages (STT → LLM). For real-time voice agents, you add the TTS stage to speak the response back to the user.

The cascade approach gives you granular control. You can swap individual components, inspect the transcript between stages, and engineer prompts based on exactly what was said. The tradeoff is latency—each stage adds time, and for real-time agents, the total pipeline latency determines how natural the conversation feels.
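As a rough illustration of the cascade pattern, the sketch below wires the stages together as plain Python callables. The stage functions (`fake_stt`, `fake_llm`, `fake_tts`) are hypothetical stand-ins rather than real AssemblyAI calls; in practice each would wrap a network request. Leaving `tts` unset gives the two-stage asynchronous variant.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class CascadeResult:
    transcript: str               # text produced by the STT stage
    reply_text: str               # text produced by the LLM stage
    reply_audio: Optional[bytes]  # None when the TTS stage is skipped


def run_cascade(
    audio: bytes,
    stt: Callable[[bytes], str],
    llm: Callable[[str], str],
    tts: Optional[Callable[[str], bytes]] = None,
) -> CascadeResult:
    """Run STT -> LLM, and optionally -> TTS, keeping every intermediate result."""
    transcript = stt(audio)
    reply_text = llm(transcript)
    reply_audio = tts(reply_text) if tts is not None else None
    return CascadeResult(transcript, reply_text, reply_audio)


# Hypothetical stand-in stages so the sketch runs without any network access.
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_llm(transcript: str) -> str:
    return f"Summary: {transcript.upper()}"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")


async_result = run_cascade(b"hello team", fake_stt, fake_llm)            # STT -> LLM
agent_result = run_cascade(b"hello team", fake_stt, fake_llm, fake_tts)  # STT -> LLM -> TTS
```

Because the transcript and the reply text are both returned, each stage can be logged or swapped independently, which is the control the cascade approach is meant to provide.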

Speech-to-speech architecture

Speech-to-speech models take a different approach: a single multimodal model processes audio input and generates audio output directly, without an intermediate text stage. OpenAI's GPT-4o in voice mode is the most prominent example.

The potential advantages are lower latency and the ability to capture vocal nuances (tone, emphasis, hesitation) that text transcription loses. In practice, these models come with significant tradeoffs: less control over the pipeline, limited ability to inspect or log the intermediate reasoning, and accuracy limitations for precise tasks like capturing names, numbers, and domain-specific terminology.

Choosing the right architecture

For most production Voice AI apps today, the cascade architecture is the practical choice. It gives you better speech accuracy (critical for anything involving names, account numbers, or medical terms), full observability into what the model heard and how it responded, and the flexibility to choose best-in-class components at each stage.

Speech-to-speech models are worth watching, but they're still early for production use cases where accuracy and control matter more than raw latency.

AssemblyAI's platform supports the cascade approach across both asynchronous and real-time use cases—Speech-to-Text API and LLM Gateway for async workflows, and Voice Agent API for real-time voice agents.

Building Voice AI apps with AssemblyAI

AssemblyAI provides three core products that cover the full spectrum of Voice AI use cases, from batch audio processing to live conversational agents.

Speech-to-Text API

The foundation of any Voice AI app is accurate transcription. AssemblyAI's Speech-to-Text API offers both pre-recorded and streaming transcription built on Universal-3 Pro—the most accurate speech-to-text model available. Features like speaker diarization, entity detection, and sentiment analysis are built directly into the transcription pipeline, so you don't need separate NLP services downstream.
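A minimal sketch of what enabling those features might look like in a transcription request body. The field names (`audio_url`, `speaker_labels`, `entity_detection`, `sentiment_analysis`) and the response shape read by `count_sentiments` are assumptions based on AssemblyAI's public API and should be checked against the current API reference. The audio URL and the sample response are made up for illustration.

```python
from collections import Counter


def build_transcript_payload(audio_url: str) -> dict:
    """Request body that turns on the built-in analysis features (assumed field names)."""
    return {
        "audio_url": audio_url,
        "speaker_labels": True,      # speaker diarization
        "entity_detection": True,    # names, organizations, and similar entities
        "sentiment_analysis": True,  # per-sentence sentiment labels
    }


def count_sentiments(transcript_response: dict) -> Counter:
    """Tally sentiment labels from a completed transcript (assumed response shape)."""
    results = transcript_response.get("sentiment_analysis_results") or []
    return Counter(item["sentiment"] for item in results)


payload = build_transcript_payload("https://example.com/call.mp3")

# Illustrative response fragment, not real API output.
sample_response = {
    "sentiment_analysis_results": [
        {"text": "Thanks for the quick fix.", "sentiment": "POSITIVE"},
        {"text": "The invoice was wrong again.", "sentiment": "NEGATIVE"},
        {"text": "I will call back tomorrow.", "sentiment": "NEUTRAL"},
        {"text": "This is the third time.", "sentiment": "NEGATIVE"},
    ]
}
sentiment_counts = count_sentiments(sample_response)
```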

LLM Gateway: applying LLMs to spoken data

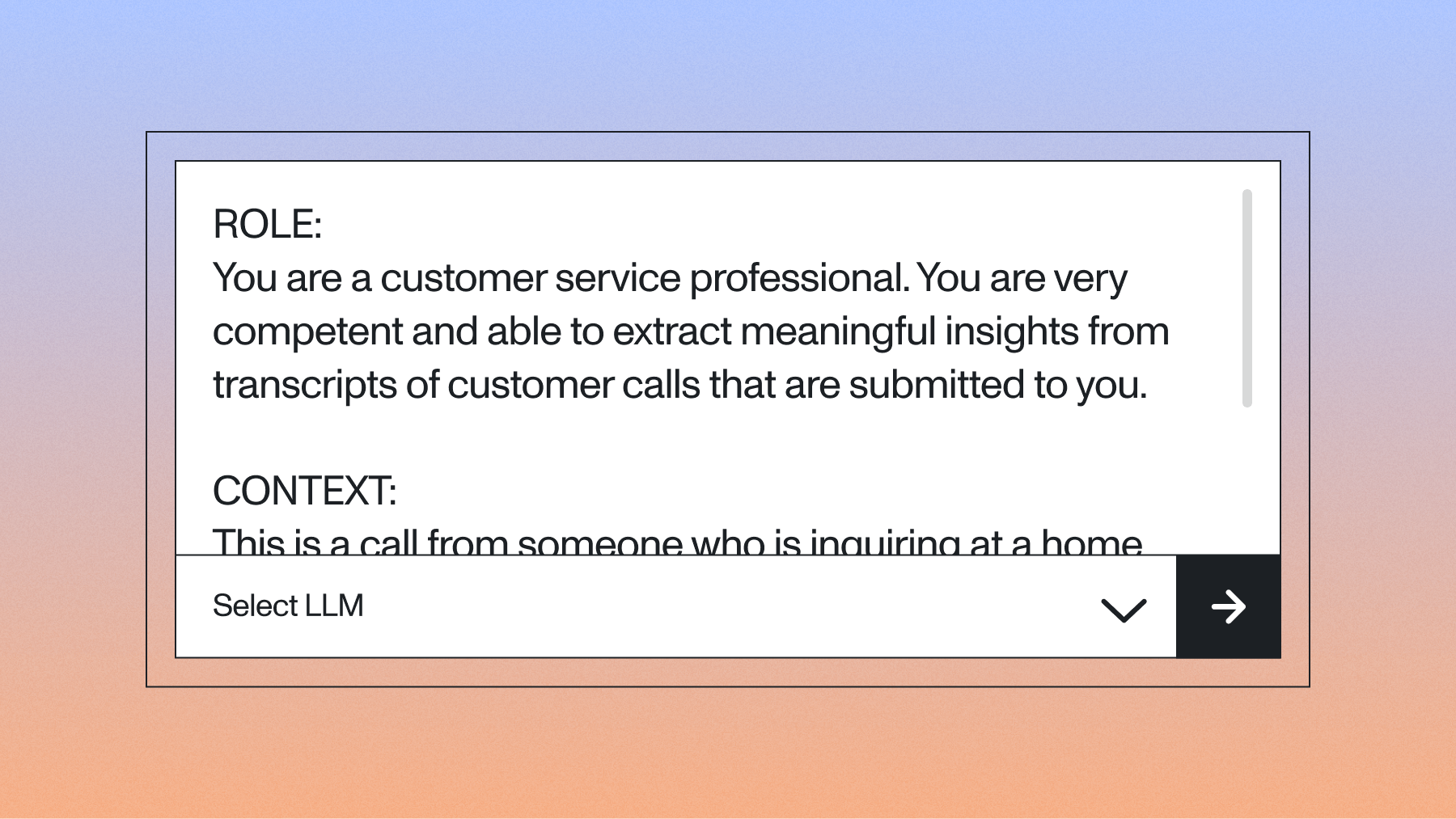

AssemblyAI's LLM Gateway is a framework for applying Large Language Models to spoken data. It provides a unified API to access 25+ models from providers like Anthropic, OpenAI, and Google, reducing the complexity of building intelligent Voice AI applications.

The typical workflow involves two main steps: first, transcribing your audio to get an accurate text transcript, and second, using that transcript with the LLM Gateway to generate insights.

Application architecture flow:

Audio Input → Speech-to-Text API (/v2/transcript) → Transcript Text → LLM Gateway (/v1/chat/completions) → Structured Output

What flows through each stage: Raw Audio → Accurate Transcript → Your Prompt → LLM Analysis → Your Application

This modular approach gives you full control over the process. You can preprocess the transcript, inject custom context, and engineer prompts precisely for your use case before sending the data to your chosen LLM. This lets your team focus on building features that differentiate your product rather than managing multiple complex LLM integrations.
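The two-step workflow can be sketched as a pair of HTTP requests. This is a sketch under stated assumptions, not a reference client: the host names, the `authorization` header carrying the API key, and the OpenAI-style chat body and response shape are assumptions to verify against AssemblyAI's documentation, and `MODEL_ID` is a placeholder rather than a real model name. The requests are only constructed here, not sent.

```python
import json
import urllib.request

# Endpoint paths come from the flow above; the host names are assumptions.
STT_URL = "https://api.assemblyai.com/v2/transcript"
GATEWAY_URL = "https://llm-gateway.assemblyai.com/v1/chat/completions"


def _json_post(url: str, api_key: str, body: dict) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request."""
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": api_key, "Content-Type": "application/json"},
        method="POST",
    )


def build_transcript_request(api_key: str, audio_url: str) -> urllib.request.Request:
    """Step 1: ask the Speech-to-Text API to transcribe a hosted audio file."""
    return _json_post(STT_URL, api_key, {"audio_url": audio_url})


def build_gateway_request(
    api_key: str, model: str, transcript_text: str, instruction: str
) -> urllib.request.Request:
    """Step 2: send the transcript plus your own prompt to the LLM Gateway."""
    body = {
        "model": model,
        "messages": [
            {"role": "user", "content": f"{instruction}\n\nTranscript:\n{transcript_text}"}
        ],
    }
    return _json_post(GATEWAY_URL, api_key, body)


def extract_reply(gateway_response: dict) -> str:
    """Pull the generated text out of an OpenAI-style response (assumed shape)."""
    return gateway_response["choices"][0]["message"]["content"]


gateway_request = build_gateway_request(
    "YOUR_API_KEY", "MODEL_ID", "Hello there.", "Summarize the call."
)
```

In a real application each request would be sent with `urllib.request.urlopen`, and the transcript would typically be polled until it finishes before its text is passed to the second step.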

What makes LLM Gateway particularly powerful is its flexibility. You can apply sophisticated reasoning to transcripts of any length by managing the context you provide to the model, enabling you to extract business-relevant insights from even the longest and most complex conversations.
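One common way to manage context for long recordings is to split the transcript into chunks, analyze each chunk, and then combine the partial results. The sketch below covers only the splitting step. The 8,000-character default is an arbitrary assumption for illustration, not a documented LLM Gateway limit.

```python
import re


def chunk_transcript(text: str, max_chars: int = 8000) -> list[str]:
    """Split a transcript into chunks of at most max_chars, breaking on sentence ends.

    A single sentence longer than max_chars is kept whole as its own oversized chunk.
    """
    chunks: list[str] = []
    current = ""
    for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
        if not sentence:
            continue
        candidate = f"{current} {sentence}".strip()
        if len(candidate) <= max_chars or not current:
            current = candidate
        else:
            chunks.append(current)
            current = sentence
    if current:
        chunks.append(current)
    return chunks


demo_chunks = chunk_transcript("One. Two. Three. Four.", max_chars=10)
```

Each chunk can then be sent to the model with the same prompt, and the per-chunk answers merged in a final request.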

Voice Agent API: real-time voice interactions

For real-time use cases, the Voice Agent API provides a single WebSocket connection that handles the full STT → LLM → TTS pipeline. Instead of stitching together three separate providers—each with its own authentication, billing, and debugging surface—you open one WebSocket, stream audio in, and get audio back.

The API is built on Universal-3 Pro Streaming for speech recognition, which means the voice agent correctly captures names, account numbers, email addresses, and domain-specific terminology that other models frequently get wrong. When the STT is accurate, the LLM responds to what the caller actually said—not a garbled approximation.

Key capabilities developers get out of the box:

- Turn detection and interruption handling: Configurable VAD settings so the agent knows when a caller is pausing versus done speaking, and handles barge-in naturally

- Tool calling: Register custom tools via JSON Schema so the agent can look up order status, book appointments, or query your backend during a live conversation

- Live configuration updates: Change the system prompt, tools, or settings mid-conversation without reconnecting

- Flat-rate pricing: $4.50/hour covers STT, LLM, and TTS—one bill instead of three

The developer experience is intentionally simple: a standard JSON API with no SDK required. Most developers have a working voice agent running within an afternoon. Drop-in plugins for LiveKit and Pipecat are available for teams already using those frameworks.
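To make the tool-calling idea concrete, here is a hedged sketch of a tool described with JSON Schema and a local dispatcher that runs it. The tool name `lookup_order_status`, the in-memory order table, and the shape of the `TOOLS` dictionary are all hypothetical. The actual message format the Voice Agent API uses to register tools and deliver tool calls is not shown in this article and is not reproduced here.

```python
import json

# Hypothetical tool catalog; "parameters" is ordinary JSON Schema.
TOOLS = {
    "lookup_order_status": {
        "description": "Look up the shipping status of an order.",
        "parameters": {
            "type": "object",
            "properties": {
                "order_id": {
                    "type": "string",
                    "description": "Order identifier read out by the caller",
                },
            },
            "required": ["order_id"],
        },
    },
}


def lookup_order_status(order_id: str) -> dict:
    """Stand-in for a real backend query."""
    fake_orders = {"A1001": "shipped", "A1002": "processing"}
    return {"order_id": order_id, "status": fake_orders.get(order_id, "not_found")}


HANDLERS = {"lookup_order_status": lookup_order_status}


def handle_tool_call(name: str, arguments_json: str) -> str:
    """Check required arguments, run the matching handler, return a JSON string."""
    if name not in HANDLERS:
        return json.dumps({"error": f"unknown tool: {name}"})
    arguments = json.loads(arguments_json)
    required = TOOLS[name]["parameters"]["required"]
    missing = [key for key in required if key not in arguments]
    if missing:
        return json.dumps({"error": f"missing arguments: {missing}"})
    return json.dumps(HANDLERS[name](**arguments))


tool_result = handle_tool_call("lookup_order_status", '{"order_id": "A1001"}')
```

Returning errors as JSON rather than raising keeps the conversation alive, so the agent can ask the caller to repeat a missing detail.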

Core Voice AI capabilities

AssemblyAI provides a suite of capabilities that address the most common speech intelligence use cases. These are available through the Speech-to-Text API and LLM Gateway, offering a flexible way to solve business problems across industries.

Summarization

The Summarization feature, part of the speech understanding models, transforms lengthy audio content into concise, actionable insights. Unlike generic text summarization, it's designed to understand conversation dynamics. You can enable it with a single parameter in your transcription request.

A 90-minute board meeting becomes a structured summary highlighting decisions made, action items assigned, and key discussion points—formatted specifically for executive review. Sales teams use this to quickly understand prospect calls without listening to entire recordings.

The business impact is immediate: executives report saving hours weekly on meeting follow-ups, while sales teams can review significantly more prospect interactions in the same timeframe.

Question and answer

LLM Gateway lets you ask specific questions about your audio transcripts and receive precise, contextual answers. This goes far beyond keyword search. After transcribing your audio, you pass the transcript text to the LLM Gateway with a question.

Customer success teams use this to quickly identify why clients are churning by asking questions like "What concerns did the customer raise about our pricing?" or "How did the customer respond to our retention offer?" The model analyzes the conversation context to provide accurate, actionable answers.

Legal teams use Q&A to review depositions and client meetings, asking targeted questions about specific topics without manually reviewing hours of recordings.
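A small sketch of how a question might be packaged with a speaker-labeled transcript. It assumes the transcript is available as a list of utterances with `speaker` and `text` keys and that the model accepts chat-style `messages`; both are assumptions to confirm against the API reference, and the sample utterances are invented.

```python
def format_utterances(utterances: list[dict]) -> str:
    """Render diarized utterances as 'Speaker X: text' lines."""
    return "\n".join(f"Speaker {u['speaker']}: {u['text']}" for u in utterances)


def build_qa_messages(utterances: list[dict], question: str) -> list[dict]:
    """Build chat messages that ask one question about one conversation."""
    transcript = format_utterances(utterances)
    return [
        {
            "role": "system",
            "content": (
                "Answer using only the transcript provided. "
                "If the transcript does not contain the answer, say so."
            ),
        },
        {
            "role": "user",
            "content": f"Transcript:\n{transcript}\n\nQuestion: {question}",
        },
    ]


# Invented sample conversation for illustration.
sample_utterances = [
    {"speaker": "A", "text": "How do you feel about the renewal quote?"},
    {"speaker": "B", "text": "Honestly, the per-seat price is too high for us."},
]
qa_messages = build_qa_messages(
    sample_utterances, "What concerns did the customer raise about our pricing?"
)
```

Keeping speaker labels in the prompt lets the model attribute statements to the right party, which matters for questions about what the customer said versus what the rep said.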

Custom prompts and data extraction

Custom prompts with LLM Gateway provide the ultimate flexibility to extract any specific information from your audio content. This lets you program the LLM to understand your unique business context and return precisely formatted results, such as JSON.

Healthcare organizations use custom prompts to extract structured medical information from patient consultations for electronic health records. Financial services firms extract compliance-related information from advisor-client meetings, ensuring regulatory requirements are consistently met. This capability also enables powerful call data extraction that integrates directly with business systems like CRMs or project management tools.

The key advantage is customization without complexity—you define what you need in natural language, and LLM Gateway handles the implementation.
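When a prompt asks for JSON, the reply still needs to be parsed and checked before it reaches a CRM or records system. The following is a minimal sketch of that step. The field names (`customer_name`, `issue`, `follow_up_required`) are hypothetical, and the fence-stripping handles one common model habit rather than every possible reply format.

```python
import json

FENCE = "`" * 3  # a Markdown code fence, which models sometimes wrap around JSON


def parse_json_reply(reply: str, required_keys: list[str]) -> dict:
    """Parse a model reply as a JSON object and verify that required keys exist."""
    text = reply.strip()
    if text.startswith(FENCE):
        lines = text.splitlines()
        # Drop the opening fence line and, if present, the closing fence line.
        inner = lines[1:-1] if lines[-1].strip() == FENCE else lines[1:]
        text = "\n".join(inner)
    data = json.loads(text)  # raises json.JSONDecodeError (a ValueError) on bad JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = [key for key in required_keys if key not in data]
    if missing:
        raise ValueError(f"missing keys: {missing}")
    return data


REQUIRED = ["customer_name", "issue", "follow_up_required"]  # hypothetical schema
raw_reply = (
    FENCE + "json\n"
    '{"customer_name": "Dana", "issue": "billing error", "follow_up_required": true}\n'
    + FENCE
)
record = parse_json_reply(raw_reply, REQUIRED)
```

Rejecting incomplete replies at this boundary means a retry or a human review can happen before bad data lands in a downstream system.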

Enterprise implementations with proven results

The combination of these capabilities creates powerful applications across multiple industries. Leading organizations are implementing Voice AI solutions that deliver measurable business value.

Meeting intelligence applications

Meeting intelligence platforms like Otter.ai and Fireflies.ai demonstrate the market demand for Voice AI applications. These platforms process millions of meetings monthly, providing summarization, action item tracking, and searchable conversation archives. Companies including Mem and Circleback AI are building on similar technology to deliver enhanced meeting intelligence.

Organizations implementing meeting intelligence solutions report substantial productivity gains: dramatic reduction in meeting follow-up time, improved project coordination, and increased action item completion rates.

The key success factor is customization—generic meeting summaries provide limited value, but summaries formatted for specific business processes drive measurable outcomes.

Sales intelligence applications

Sales intelligence platforms represent one of the fastest-growing applications of Voice AI technology. Platforms like Gong and Chorus.ai have demonstrated how conversation analysis can dramatically improve sales performance.

AssemblyAI's APIs enable similar capabilities for organizations building internal sales intelligence tools:

- Deal analysis: Automatically extract competitor mentions, pricing discussions, and decision-making criteria from sales calls

- Coaching insights: Identify successful conversation patterns and areas for improvement across sales teams

- Pipeline intelligence: Track deal progression signals and risk factors mentioned during prospect conversations

Sales teams using conversation intelligence report higher close rates and shorter sales cycles. The technology delivers rapid return on investment through improved deal conversion alone. Companies like Salesroom and UpdateAI are using these capabilities to transform sales performance.

Customer service solutions

Customer service represents the largest opportunity for Voice AI applications, with contact centers processing billions of interactions annually. LLM Gateway enables several high-impact use cases:

Call center implementations use LLM Gateway to automatically categorize support tickets, identify escalation triggers, and extract customer satisfaction indicators. This reduces manual quality assurance workload substantially while improving consistency in issue identification.

Support automation examples include automatically routing calls based on conversation content, generating follow-up summaries for customer records, and identifying training opportunities for support agents.

The business case is compelling: contact centers can achieve significant cost savings through improved efficiency and reduced quality assurance costs. Organizations like Observe.ai and CallSource demonstrate the transformative potential of Voice AI in customer service.

Implementation strategies and best practices

Successfully launching a Voice AI app requires strategic planning beyond technical implementation. Organizations that achieve the best results follow proven patterns that balance ambition with practical constraints.

Start with high-impact use cases

The most successful Voice AI implementations begin with narrowly defined, high-value problems. Instead of attempting to revolutionize entire workflows immediately, focus on specific pain points where automation delivers immediate value.

Consider starting with use cases that have clear success metrics: reducing time spent on meeting notes, improving sales call review efficiency, or automating specific types of customer inquiries. These focused implementations build organizational confidence and provide learnings for broader deployment.

Design for your workflow

Voice AI apps deliver maximum value when integrated seamlessly into existing workflows. This means understanding not just what information to extract, but where it needs to go and how it will be used.

Map your current processes before implementing Voice AI. Identify where manual transcription or review creates bottlenecks. Design your application to deliver insights directly into the tools your teams already use—whether that's Salesforce, Slack, or specialized industry platforms.

Iterate based on user feedback

Voice AI applications improve dramatically through iteration. Start with baseline functionality, gather user feedback, and refine your prompts and processing based on real-world usage.

Pay particular attention to edge cases that emerge during deployment. A sales intelligence app might initially miss certain objection patterns. Build feedback loops that capture these insights and drive continuous improvement.

Scale gradually

Successful Voice AI deployment follows a deliberate scaling pattern:

- Pilot phase: Test with a small group of power users who can provide detailed feedback

- Department rollout: Expand to a full team or department to validate at moderate scale

- Cross-functional deployment: Extend to related teams that can benefit from similar functionality

- Enterprise scale: Full deployment with established best practices and support structures

This graduated approach ensures technical infrastructure scales appropriately while giving teams time to adapt to new workflows and capabilities.

Build production Voice AI apps with AssemblyAI

Speech recognition combined with Large Language Models transforms how organizations extract value from audio content. AssemblyAI's platform—Speech-to-Text API, LLM Gateway, and Voice Agent API—eliminates many of the traditional barriers to building Voice AI applications: technical complexity, multi-vendor integration headaches, and ongoing maintenance requirements.

The business case is clear: organizations implementing Voice AI solutions report substantial improvements in productivity for audio-intensive workflows, with measurable returns typically achieved within months. More importantly, these applications enable new business capabilities that weren't previously feasible—from real-time voice agents that handle customer calls around the clock to intelligent analytics that surface insights from every conversation.

Whether you're building meeting intelligence tools, sales analytics platforms, customer service automation, or real-time voice agents, AssemblyAI provides the foundation for transforming speech data into competitive advantage.

Leading companies across industries trust AssemblyAI for their Voice AI implementations. From healthcare innovators like PatientNotes.app to sales intelligence leaders like Clari, organizations choose AssemblyAI for industry-leading accuracy, scalable infrastructure, and comprehensive developer support.

Frequently asked questions about Voice AI apps

What is a Voice AI app and how does it work?

A Voice AI app combines speech recognition, Large Language Models, and sometimes speech synthesis to extract intelligence from audio or conduct real-time voice conversations. Asynchronous apps transcribe audio and apply LLMs to generate summaries, answers, or structured data; real-time voice agents handle live two-way conversations by chaining STT, LLM reasoning, and TTS in a continuous loop.

How do I build a real-time voice AI agent?

You can build a real-time voice agent by connecting a speech-to-text model, an LLM, and a text-to-speech model in a streaming pipeline—or use AssemblyAI's Voice Agent API, which provides a single WebSocket connection that handles all three stages. The Voice Agent API lets you define a system prompt, register tools, and stream audio in and out without managing multiple providers.

What's the difference between LLM Gateway and Voice Agent API?

LLM Gateway is for asynchronous workflows—you transcribe audio first, then send the transcript text to an LLM for analysis, summarization, or data extraction. Voice Agent API is for real-time use cases where a live caller is speaking to an AI agent; it handles streaming STT, LLM reasoning, and TTS in a single connection with sub-second latency.

Which industries benefit most from Voice AI applications?

Healthcare (clinical documentation, ambient scribes), sales (conversation intelligence, deal analytics), customer service (contact center automation, quality assurance), and legal (deposition analysis, case preparation) see the most immediate ROI. Any industry with high volumes of spoken interactions is a strong candidate.

How do you measure ROI for Voice AI implementations?

Track metrics specific to your use case: time saved on manual transcription or review, improvement in action item completion rates, agent productivity gains, close rate changes for sales teams, or reduction in quality assurance costs. Most organizations see measurable returns within 2-3 months of deployment.
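As a worked example of the arithmetic, the sketch below estimates a payback period from time saved. Every input value is a made-up assumption for illustration; substitute your own measurements.

```python
from typing import Optional


def payback_months(
    upfront_cost: float,
    hours_saved_per_month: float,
    hourly_labor_cost: float,
    monthly_running_cost: float,
) -> Optional[float]:
    """Months until cumulative net savings cover the upfront cost.

    Returns None when running costs meet or exceed the monthly savings,
    since the investment would never pay back.
    """
    monthly_savings = hours_saved_per_month * hourly_labor_cost
    net_monthly_benefit = monthly_savings - monthly_running_cost
    if net_monthly_benefit <= 0:
        return None
    return upfront_cost / net_monthly_benefit


# Hypothetical figures: 200 hours saved per month at $40/hour,
# $2,000/month in running costs, $12,000 spent on the initial build.
example_payback = payback_months(12_000, 200, 40.0, 2_000)
```

With these invented inputs the net benefit is $6,000 per month, so the $12,000 build cost is recovered in two months.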
