Real-time vs batch transcription: What's the difference?


When building Voice AI applications, you'll face a fundamental choice between real-time and batch transcription, two distinct approaches that serve different needs. The field is expanding quickly: market data shows the Speech Recognition market is expected to grow at a CAGR of 16.3% from 2023 to 2030. Real-time transcription converts speech to text as audio streams in, enabling live interactions like voice assistants and meeting captions. Batch transcription processes complete audio files after recording, prioritizing accuracy over speed for archived content and detailed analysis.

Understanding when to use each approach directly impacts your application's user experience and backend architecture. This guide covers how each method works, the infrastructure required to scale them, the use cases each serves best, and a decision framework for choosing between them—or combining both.

What is real-time transcription?

Real-time transcription is the conversion of live audio into text as speech occurs, delivering results within a few hundred milliseconds rather than waiting for a recording to finish. The system processes audio in continuous chunks, returning partial and then final transcriptions as each segment completes. Unlike batch transcription, it works without access to future context, which means it trades some accuracy for speed.

You'll encounter real-time transcription in voice assistants, live captions during video calls, and meeting platforms that show notes as participants speak. The system analyzes audio in tiny chunks—usually lasting just a fraction of a second—and returns text immediately.

Modern real-time systems have evolved dramatically from early voice recognition technology. Where older systems required careful pronunciation and struggled with natural speech, current AI models handle conversational patterns, multiple speakers, and even interruptions.

Key capabilities include:

- WebSocket streaming: Persistent connections for instant audio and text transmission

- Voice Activity Detection: Automatic identification of when speech starts and stops

- Speaker separation: Diarization via multichannel audio, with a separate streaming session per speaker channel

- Progressive refinement: Partial results that improve as more context arrives
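Progressive refinement, the last capability in the list above, can be sketched as a small accumulator. This is a minimal illustration rather than any provider's actual message format: the `TranscriptView` class and the `is_final` flag are hypothetical names chosen for this example.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TranscriptView:
    """Holds committed text plus the latest partial hypothesis."""
    finals: List[str] = field(default_factory=list)
    partial: str = ""

    def on_message(self, text: str, is_final: bool) -> None:
        # Hypothetical message shape: each update carries text and a finality flag.
        if is_final:
            self.finals.append(text)  # commit the finished segment
            self.partial = ""         # the partial has been superseded
        else:
            self.partial = text       # partials overwrite each other, never append

    def render(self) -> str:
        parts = self.finals + ([self.partial] if self.partial else [])
        return " ".join(parts)


view = TranscriptView()
view.on_message("I want to", is_final=False)
view.on_message("I want two tickets", is_final=False)  # earlier guess revised
view.on_message("I want two tickets.", is_final=True)
view.on_message("For Friday", is_final=False)
print(view.render())
```

The key design point is that a partial result replaces the previous partial instead of being appended, so a revised guess never leaves stale text on screen.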

The technology has become essential for accessibility, enabling deaf and hard-of-hearing individuals to participate in live events.

What is batch transcription?

Batch transcription processes complete audio files after recording, analyzing entire conversations before generating final transcripts. This means the system waits until you upload a finished recording, then takes time—anywhere from seconds to hours—to produce results.

The workflow is straightforward: upload your audio file, wait in a processing queue, then receive a complete transcript. The system analyzes your entire recording with full context, making multiple passes to refine understanding.

Batch processing excels at handling challenging audio that confuses real-time systems. It distinguishes between similar-sounding words by understanding complete sentence structure, accurately identifies speakers even during interruptions, and applies advanced formatting like proper punctuation.

The approach prioritizes accuracy over speed. Since the system has access to your complete conversation, it can resolve ambiguities that would trip up real-time processing. If someone says "there" early in a sentence, batch processing can determine whether they meant "there," "their," or "they're" by analyzing the complete context.
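To make the "there" example concrete, here is a deliberately tiny sketch of why future context helps. The `FOLLOWERS` table and `pick_spelling` function are invented for illustration; real speech models use learned language context, not a lookup table.

```python
from typing import Optional

# Toy lookup invented for this example: words that tend to follow each spelling.
FOLLOWERS = {
    "their": {"car", "team", "house"},
    "they're": {"going", "late", "running"},
    "there": {"is", "are", "was"},
}


def pick_spelling(next_word: Optional[str]) -> str:
    """Choose among there/their/they're given the following word, if known."""
    if next_word is not None:
        for spelling, followers in FOLLOWERS.items():
            if next_word.lower() in followers:
                return spelling
    return "there"  # default guess when no future context is available


streaming_guess = pick_spelling(None)   # the next word has not been heard yet
batch_guess = pick_spelling("going")    # the full sentence is available
print(streaming_guess, batch_guess)
```

With no lookahead the function can only fall back to a default, which mirrors the position a streaming system is in at the moment the ambiguous word is spoken.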

Benefits include:

- Maximum accuracy: Full context analysis for optimal word recognition

- Advanced features: Automatic chapters, summaries, and topic detection

- Format flexibility: Support for dozens of audio formats and codecs

- Cost efficiency: Lower per-minute processing costs for large volumes

Real-time vs batch transcription: Key differences

Real-time transcription

Processes audio as it streams in, returning text within a few hundred milliseconds. Optimizes for speed and interactivity at the cost of full contextual access.

Batch transcription

Processes a complete audio file after recording, returning a single final transcript. Optimizes for accuracy using full bidirectional context.

The differences extend beyond timing. Each approach makes a fundamental trade-off: real-time systems make immediate decisions with incomplete information, while batch systems analyze the entire conversation before committing to any interpretation.

Technical requirements differ significantly too. Real-time transcription needs persistent WebSocket connections and streaming infrastructure to handle concurrent sessions. Batch transcription uses simple request-response patterns that work with standard REST APIs.

The choice often comes down to user expectations. If people interact with your system live, they expect immediate responses even if occasionally imperfect. If they're reviewing content later, they prefer maximum accuracy regardless of processing time.

How does real-time transcription work?

Real-time transcription follows a continuous pipeline that starts the moment audio enters your microphone or streaming platform. The system captures raw audio, converts it to digital format, and immediately begins processing without waiting for silence or conversation breaks.

Audio streams through persistent connections—think of them as always-open channels between your device and the transcription service. The most common protocol is WebSockets, which allows simultaneous audio upload and text download.

The speech recognition model processes each audio segment while maintaining memory of previous segments. This streaming approach means the model makes predictions with incomplete information, occasionally updating its output as new audio provides clearer context.

Streaming protocols and audio processing

WebSocket connections form the backbone of real-time transcription, providing full-duplex communication that handles audio going up and text coming down simultaneously. Your audio gets divided into chunks lasting 100-250 milliseconds—small enough for low delay but large enough to capture meaningful speech patterns.
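As a rough sketch of the chunking step, the following splits raw audio into fixed-duration chunks. The sample rate and sample width are assumptions made for the example (16 kHz, 16-bit mono PCM); the source does not specify an audio format, and `chunk_size_bytes` and `iter_chunks` are hypothetical helper names.

```python
from typing import Iterator

SAMPLE_RATE_HZ = 16_000  # assumed sample rate
BYTES_PER_SAMPLE = 2     # assumed 16-bit mono PCM


def chunk_size_bytes(chunk_ms: int) -> int:
    """Number of bytes that hold chunk_ms milliseconds of audio."""
    return SAMPLE_RATE_HZ * BYTES_PER_SAMPLE * chunk_ms // 1000


def iter_chunks(pcm: bytes, chunk_ms: int = 100) -> Iterator[bytes]:
    """Yield consecutive chunks; the last one may be shorter."""
    size = chunk_size_bytes(chunk_ms)
    for start in range(0, len(pcm), size):
        yield pcm[start:start + size]


one_second = bytes(SAMPLE_RATE_HZ * BYTES_PER_SAMPLE)  # 1 s of silence
chunks = list(iter_chunks(one_second, chunk_ms=250))
print(chunk_size_bytes(100), len(chunks))
```

Under these assumptions a 100 ms chunk is 3,200 bytes and a 250 ms chunk is 8,000 bytes, so one second of audio yields four 250 ms chunks.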

Each chunk passes through Voice Activity Detection to separate actual speech from silence or background noise. This preprocessing step prevents the system from trying to transcribe air conditioning hums or keyboard clicks.
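The simplest possible form of this gate is an energy threshold, sketched below. This is an assumption-laden simplification: production Voice Activity Detection is typically model-based, and a plain energy threshold like this one would still let a loud keyboard click through. The 16-bit PCM format, the `threshold` value, and the function names are all chosen for illustration.

```python
import math
import struct


def rms(chunk: bytes) -> float:
    """Root-mean-square amplitude of 16-bit little-endian mono PCM."""
    count = len(chunk) // 2
    if count == 0:
        return 0.0
    samples = struct.unpack(f"<{count}h", chunk[:count * 2])
    return math.sqrt(sum(s * s for s in samples) / count)


def is_speech(chunk: bytes, threshold: float = 500.0) -> bool:
    """Treat a chunk as speech when its energy reaches the threshold."""
    return rms(chunk) >= threshold


def tone(amplitude: int, n: int = 1600) -> bytes:
    """Synthetic 220 Hz tone at 16 kHz, used here as stand-in audio."""
    samples = [int(amplitude * math.sin(2 * math.pi * 220 * i / 16_000))
               for i in range(n)]
    return struct.pack(f"<{n}h", *samples)


quiet = tone(50)    # stands in for low-level background hum
loud = tone(8000)   # stands in for a nearby speaker
print(is_speech(quiet), is_speech(loud))
```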

Real-time noise reduction runs continuously, filtering ambient sounds before the speech recognition model processes the audio. This filtering is crucial for maintaining accuracy in challenging environments like busy offices or outdoor locations.

The system maintains audio buffers to handle network variations. If your internet connection stutters momentarily, the buffer prevents gaps in transcription while the connection stabilizes.
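One way to picture such a buffer on the sending side is a bounded queue that holds chunks while the connection is down and flushes them once it recovers. `SendBuffer` is a hypothetical class for this sketch, and the capacity is an arbitrary choice.

```python
from collections import deque
from typing import Deque, List


class SendBuffer:
    """Bounded queue of audio chunks awaiting transmission."""

    def __init__(self, max_chunks: int = 50) -> None:
        # With 100 ms chunks, 50 chunks covers about 5 seconds of outage.
        self._queue: Deque[bytes] = deque(maxlen=max_chunks)

    def push(self, chunk: bytes) -> None:
        self._queue.append(chunk)  # when full, the oldest chunk is dropped

    def drain(self) -> List[bytes]:
        """Return all buffered chunks in order and empty the buffer."""
        chunks = list(self._queue)
        self._queue.clear()
        return chunks


buf = SendBuffer(max_chunks=3)
for label in (b"a", b"b", b"c", b"d"):
    buf.push(label)
flushed = buf.drain()
print(flushed)
```

Bounding the buffer is a trade-off: a short stutter loses nothing, while an outage longer than the buffer's capacity drops the oldest audio instead of letting memory grow without limit.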

Latency and accuracy considerations

Latency in real-time transcription comes from multiple sources, each adding milliseconds to the total delay. Some industry guides report typical latencies between 200 and 500 milliseconds. Network transmission typically adds 50-200ms depending on your distance from the processing servers.

The speech recognition model itself requires 100-300ms for processing. More sophisticated models trade slightly higher latency for better accuracy—a worthwhile exchange for most applications.
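The two ranges quoted above can be added up as a quick latency budget. This sketch uses only the network and model figures from this section; it leaves out other contributors, such as the time spent filling each audio chunk before it is sent, so treat the result as a rough estimate.

```python
from typing import Tuple

Range = Tuple[int, int]  # (best case, worst case) in milliseconds


def total_latency(*components: Range) -> Range:
    """Sum best cases and worst cases across independent components."""
    best = sum(low for low, _ in components)
    worst = sum(high for _, high in components)
    return (best, worst)


network_ms = (50, 200)   # network transmission, from the text above
model_ms = (100, 300)    # model processing, from the text above
budget = total_latency(network_ms, model_ms)
print(budget)
```

The combined range of 150 to 500 ms is in line with the 200-500 ms figure that industry guides report.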

Modern streaming models achieve impressive accuracy on clear audio, approaching the quality of batch transcription. However, challenging conditions like heavy accents or significant background noise can reduce accuracy since the system lacks future context to resolve ambiguous phrases.

How does batch transcription work?

Batch transcription relies on standard REST APIs and asynchronous processing—no persistent connections required. Here's what happens from file submission to final transcript:

- File submission: You upload an audio or video file directly, or provide a publicly accessible URL via REST API call.

- Audio normalization: The system converts your file into a standardized format optimized for the speech recognition model—applying advanced noise reduction and audio leveling without real-time constraints.

- Full-context transcription: The AI models process the entire file at once, using bidirectional context to resolve ambiguous words and phrases. This is why batch models like Universal-3 Pro achieve higher accuracy than streaming models on challenging audio.

- Speech understanding: With the full transcript available, additional models run speaker diarization, generate summaries, detect entities, and apply proper punctuation and casing.

- Webhook delivery: The completed transcript is delivered as JSON, typically signalled by a webhook callback that your application listens for.
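The submission step in the list above can be sketched as a single HTTP request. Everything specific here is an assumption made for illustration: the endpoint URL is a placeholder, and the `audio_url` and `webhook_url` field names and the `Authorization` header are hypothetical, so check your provider's API reference for the real ones. The request is built but not sent, so the sketch runs offline.

```python
import json
import urllib.request

API_URL = "https://api.example.com/v2/transcript"  # placeholder endpoint


def build_submit_request(audio_url: str, webhook_url: str,
                         api_key: str) -> urllib.request.Request:
    """Build (but do not send) a POST request that submits a file by URL."""
    body = json.dumps({
        "audio_url": audio_url,      # hypothetical field name
        "webhook_url": webhook_url,  # hypothetical field name
    }).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        method="POST",
        headers={"Authorization": api_key, "Content-Type": "application/json"},
    )


req = build_submit_request(
    audio_url="https://files.example.com/call-recording.mp3",
    webhook_url="https://app.example.com/hooks/transcripts",
    api_key="YOUR_API_KEY",
)
# urllib.request.urlopen(req) would send it; omitted to keep the sketch offline.
print(req.get_method(), req.full_url)
```

Because the call is an ordinary request-response exchange, nothing needs to stay connected while the file is processed; the result arrives later through the webhook.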

The core advantage of batch processing is bidirectional context: when the AI models encounter an ambiguous word, they can look at both what came before and what follows to determine the correct transcription. The benefit is most visible with complex terminology or heavy accents. For broad language needs, Universal-2 provides reliable transcription across 99 languages, while Universal-3 Pro delivers state-of-the-art accuracy for English and other supported languages. The streaming model never has access to future audio; the batch model always does.

Infrastructure and scaling considerations

Your choice between real-time and batch transcription fundamentally shapes your backend infrastructure. Scaling these two approaches requires entirely different engineering strategies.

Scaling real-time infrastructure

Real-time transcription requires maintaining persistent WebSocket connections. If you're building voice agents or live captioning tools, your infrastructure must handle concurrent, long-lived connections without dropping audio packets.

This means implementing robust connection management, handling network jitter, and managing state across distributed systems. Load balancing WebSockets is inherently more complex than balancing standard HTTP requests because connections are stateful. If a server goes down, the client must immediately reconnect and re-establish the audio stream.
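A common pattern for the reconnect logic is to retry immediately at first and then back off exponentially if the server stays unreachable. The sketch below computes such a schedule; the function name, base delay, and cap are illustrative choices, not values from any particular SDK.

```python
import random
from typing import List, Optional


def backoff_delays(attempts: int, base_s: float = 0.25, cap_s: float = 8.0,
                   rng: Optional[random.Random] = None) -> List[float]:
    """Delay before each reconnect attempt, doubling up to a cap.

    If rng is given, each delay is drawn uniformly from [0, ceiling]
    ("full jitter") so that many clients do not reconnect in lockstep.
    """
    delays = []
    for attempt in range(attempts):
        ceiling = min(cap_s, base_s * (2 ** attempt))
        delays.append(rng.uniform(0, ceiling) if rng else ceiling)
    return delays


schedule = backoff_delays(7)
jittered = backoff_delays(7, rng=random.Random(0))
print(schedule)
```

Jitter matters most when a server fails with many live sessions attached, since every client would otherwise retry at the same instants.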

For voice agents, you also need to orchestrate the full pipeline—speech recognition, LLM reasoning, and voice generation—while keeping end-to-end latency under one second. AssemblyAI's Voice Agent API replaces that multi-provider complexity with a single WebSocket connection: one API, one bill, one set of logs, built on Universal-3 Pro Streaming for industry-leading speech accuracy and low latency. It's invisible infrastructure—your users feel like you built the whole thing yourself.

Scaling batch infrastructure

Batch transcription infrastructure is comparatively straightforward. It relies on stateless REST APIs and asynchronous webhooks. When you need to process thousands of hours of audio, you simply submit the files and wait for a webhook callback when the processing is complete.

Scaling batch workloads is primarily about managing concurrency limits and handling webhook payloads, not dropped packets or millisecond-level latency. Your architecture focuses on reliable file storage, database updates upon webhook receipt, and retry logic for failed uploads. That simplicity makes batch transcription highly resilient for high-volume, asynchronous processing.
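The webhook side of that architecture can be sketched as a small state update. The payload fields (`job_id`, `status`), the `Job` record, and the returned action names are all hypothetical; an in-memory dict stands in for the database.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Job:
    status: str = "queued"
    retries: int = 0


def handle_webhook(payload: Dict[str, str], jobs: Dict[str, Job],
                   max_retries: int = 3) -> str:
    """Update job state from a webhook and return the next action to take."""
    job = jobs[payload["job_id"]]
    if job.status == "completed":
        return "ignore"               # duplicate delivery, already handled
    if payload["status"] == "completed":
        job.status = "completed"
        return "fetch_transcript"
    if job.retries < max_retries:     # any other status is treated as failure
        job.retries += 1
        job.status = "queued"
        return "resubmit"
    job.status = "failed"
    return "give_up"


jobs = {"job-1": Job()}
first = handle_webhook({"job_id": "job-1", "status": "error"}, jobs)
second = handle_webhook({"job_id": "job-1", "status": "completed"}, jobs)
third = handle_webhook({"job_id": "job-1", "status": "completed"}, jobs)
print(first, second, third)
```

Checking for an already-completed job first makes the handler idempotent, which is useful because webhook senders commonly retry deliveries.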

When to use real-time vs batch transcription

The right choice comes down to one core question: do your users need a response during the conversation, or is the transcript consumed after the audio is recorded?

- Choose real-time transcription when your application requires immediate feedback—voice agents, live captions, or in-call coaching tools where a delayed transcript is useless.

- Choose batch transcription when your application processes completed recordings—post-call analytics, media transcription, legal documentation, or research where accuracy outweighs speed.

- Consider a hybrid approach when you need both: real-time for live interaction, batch for the archival record.

Real-time transcription use cases

Voice agents and conversational AI require sub-second response times to maintain natural conversation flow. When you're building customer service bots or interactive voice systems, real-time transcription enables the immediate understanding necessary for contextual responses.

The slight accuracy trade-off becomes acceptable because users can clarify misunderstandings through continued conversation. A voice agent that responds quickly but occasionally mishears a word provides a better experience than one that's perfectly accurate but slow.

Live captioning for accessibility serves audiences during video calls, broadcasts, and live events. Immediate caption display—even if occasionally imperfect—provides far more value than delayed but perfect transcriptions.

Modern streaming models achieve sufficient accuracy for viewers to follow conversations naturally. The key is getting captions on screen fast enough to match speech rhythm.

Real-time collaboration transforms how teams work together. Meeting participants see transcribed notes appear instantly, can search previous discussion points during conversations, and receive AI-generated action items before meetings end.

Sales teams particularly benefit from real-time transcription for live coaching, with managers providing guidance during customer calls based on conversation flow. This use case highlights a major trend, as a 2025 industry survey found that over 80% of respondents predict real-time capabilities will be the most transformative in conversation intelligence.

Batch transcription use cases

Content creation and archival benefit from batch transcription's superior accuracy. Podcast producers, video creators, and media companies need precise transcripts for SEO, accessibility compliance, and content repurposing.

The extra processing time becomes irrelevant since transcripts are prepared before publication. Perfect accuracy matters more than speed for content that will be searched, quoted, and redistributed.

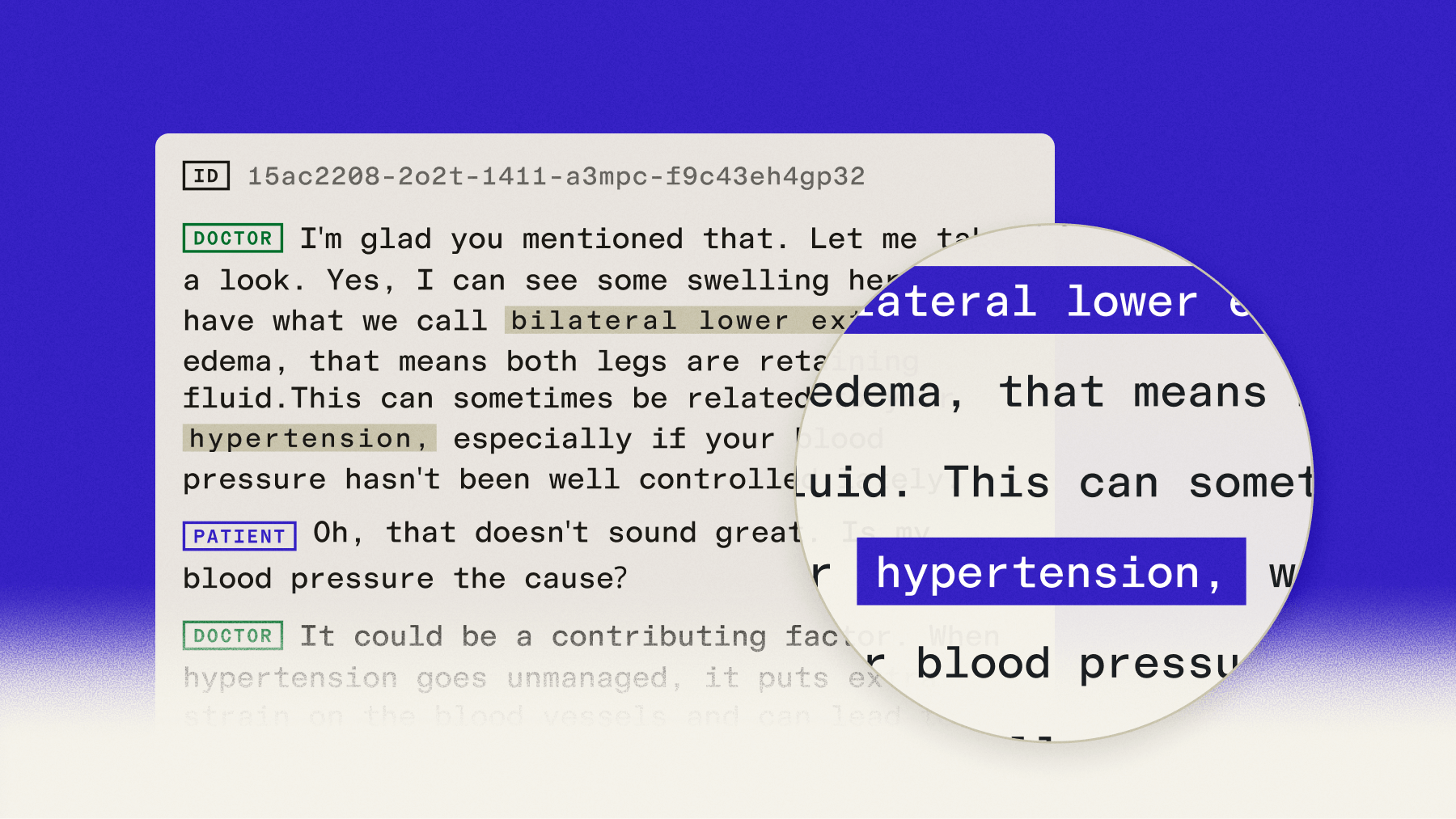

Legal and medical documentation demands the highest possible accuracy, as errors could have serious consequences. For this reason, as an industry guide confirms, most ambient AI scribes use batch transcription to prioritize accuracy, which is essential when medical jargon is involved. Court reporters, medical transcriptionists, and compliance officers rely on batch processing to ensure every word gets captured correctly.

Research and analysis applications process interview recordings, focus groups, and qualitative research data. Researchers need accurate transcripts they can code, analyze, and cite in publications.

Batch transcription's ability to generate formatted documents with timestamps and speaker labels streamlines research workflows. The system can identify themes, extract quotes, and organize content automatically.

Choose the right transcription approach for your application

Start by evaluating your core requirements. If users need immediate responses or real-time feedback, streaming transcription becomes essential regardless of other factors.

For processing recorded content later, batch transcription's accuracy advantages usually outweigh longer processing times. The decision framework becomes clearer when you consider user expectations and technical constraints.

Decision criteria:

- Need results under 2 seconds: Choose real-time transcription

- Require maximum accuracy: Choose batch transcription

- Users interact live: Choose real-time transcription

- Processing recorded content: Choose batch transcription

- High volume, cost-sensitive: Evaluate both based on specific pricing
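The criteria above can be condensed into a small helper. This is one possible encoding, not a definitive rule: the function name and parameters are hypothetical, the 2-second cutoff comes from the first criterion, and cost is left out because the list says to evaluate it against specific pricing.

```python
def choose_mode(users_interact_live: bool, max_wait_s: float,
                needs_max_accuracy: bool) -> str:
    """Map the decision criteria to 'real-time', 'batch', or 'hybrid'."""
    needs_live = users_interact_live or max_wait_s < 2.0
    if needs_live and needs_max_accuracy:
        return "hybrid"      # live results now, accurate record later
    if needs_live:
        return "real-time"
    return "batch"


voice_agent = choose_mode(True, max_wait_s=0.5, needs_max_accuracy=False)
podcast_archive = choose_mode(False, max_wait_s=3600, needs_max_accuracy=True)
meeting_tool = choose_mode(True, max_wait_s=1.0, needs_max_accuracy=True)
print(voice_agent, podcast_archive, meeting_tool)
```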

Consider hybrid approaches for complex applications. Many platforms use real-time transcription during live sessions for immediate functionality, then run batch transcription afterward for archival accuracy.

This combination provides optimal user experience—instant interactivity plus maximum accuracy for permanent records. You get the best of both worlds without forcing users to choose between speed and quality.
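A hybrid setup can be modelled as one record per session that holds the live text and, once it exists, the batch transcript. `SessionTranscript` and its methods are hypothetical names for this sketch.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class SessionTranscript:
    live_segments: List[str] = field(default_factory=list)
    archival_text: Optional[str] = None

    def add_live(self, text: str) -> None:
        """Append a finalized segment from the streaming session."""
        self.live_segments.append(text)

    def attach_batch(self, text: str) -> None:
        """Store the batch transcript produced after the recording ends."""
        self.archival_text = text

    def best(self) -> str:
        """Prefer the batch transcript; fall back to the live text."""
        if self.archival_text is not None:
            return self.archival_text
        return " ".join(self.live_segments)


session = SessionTranscript()
session.add_live("lets meet at to")
during_call = session.best()
session.attach_batch("Let's meet at two.")
after_call = session.best()
print(during_call, "|", after_call)
```

Keeping both versions on the same record means downstream features such as search or summaries can switch to the more accurate text as soon as the batch job completes.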

Modern Voice AI platforms offer both streaming and batch APIs with consistent interfaces. You can implement both approaches using similar code structures, making it easier to choose the right method for each use case.

Final words

Real-time transcription enables immediate interactive experiences—voice agents, live captioning, real-time collaboration—while batch transcription maximizes accuracy for recorded content, detailed analysis, and archival purposes. The right choice depends on whether users need instant results or whether you can wait for complete-file accuracy.

AssemblyAI provides both approaches through a unified API. For real-time use cases, Universal-3 Pro Streaming delivers sub-second responses and high accuracy. For batch transcription, Universal-3 Pro offers state-of-the-art accuracy on supported languages by analyzing full-file context, while Universal-2 provides reliable transcription across 99 languages. Try our API for free and test both modes against your audio.

Frequently asked questions about real-time vs batch transcription

How much accuracy do I sacrifice with real-time transcription?

On clear audio, a streaming model like Universal-3 Pro Streaming can achieve accuracy close to its batch counterpart, Universal-3 Pro. However, in challenging conditions—heavy background noise, thick accents, overlapping speech—batch transcription still performs better because it uses the full recording context to resolve ambiguity. This reflects a known issue, as industry analysis shows that transcription quality in noisy environments is a primary pain point for teams implementing the technology.

Can I use both approaches in the same application?

Yes. A common hybrid architecture uses real-time transcription to power live interactions—like displaying captions during a meeting—and then runs batch transcription on the recorded audio afterward to generate a highly accurate, permanent transcript with advanced speech understanding features.

What is the latency difference between the two methods?

Real-time transcription returns text within a few hundred milliseconds of the words being spoken, typically 300-800ms end to end. Batch transcription processes complete files after they are recorded, taking anywhere from a few seconds to several minutes depending on the file length and server load.

Is real-time transcription more expensive to implement?

While the per-minute API costs are often similar, real-time transcription requires more engineering resources to implement and maintain. As technical guides note, the core challenge of real-time involves managing persistent WebSocket connections, handling network instability, and orchestrating low-latency pipelines, which generally results in higher infrastructure and development costs compared to simple REST API file uploads.
