Text Summarization for NLP: 5 Best APIs, AI Models, and AI Summarizers in 2026

In this article, we’ll discuss what exactly text summarization is, how it works, and a few of the best Text Summarization APIs, AI models, and AI summarizers.

Text summarization has become essential for processing the overwhelming amount of text data generated daily; industry data indicates that the use of virtual meetings alone increased from 48% to 77% between 2020 and 2022. From research papers and legal documents to customer conversations and meeting transcripts, Natural Language Processing (NLP) makes it possible to automatically condense lengthy texts into their most important points, transforming hours of reading into minutes of insight.

In Natural Language Processing, Text Summarization models automatically shorten documents, papers, podcasts, videos, and more into their most important soundbites. These models are powered by advanced AI research that continues to push the boundaries of what's possible with automated text processing.

Product teams are integrating Text Summarization APIs and AI Summarization models into their AI-powered platforms to build tools, sometimes called AI summarizers, that automatically summarize calls, interviews, legal documents, and more.

This article provides a comprehensive overview of text summarization for NLP, covering the fundamental concepts, different approaches and evaluation methods, practical implementation options through APIs, and real-world applications. Whether you're a developer looking to implement summarization or a technical leader evaluating solutions, you'll learn how to choose and apply the right text summarization approach for your needs.

What is text summarization for NLP?

Text summarization for NLP automatically converts long documents, conversations, and media into concise summaries using AI models. These systems extract key information from sources such as research papers and meeting transcripts and, when combined with speech-to-text APIs, from audio and video content, delivering actionable insights in seconds.

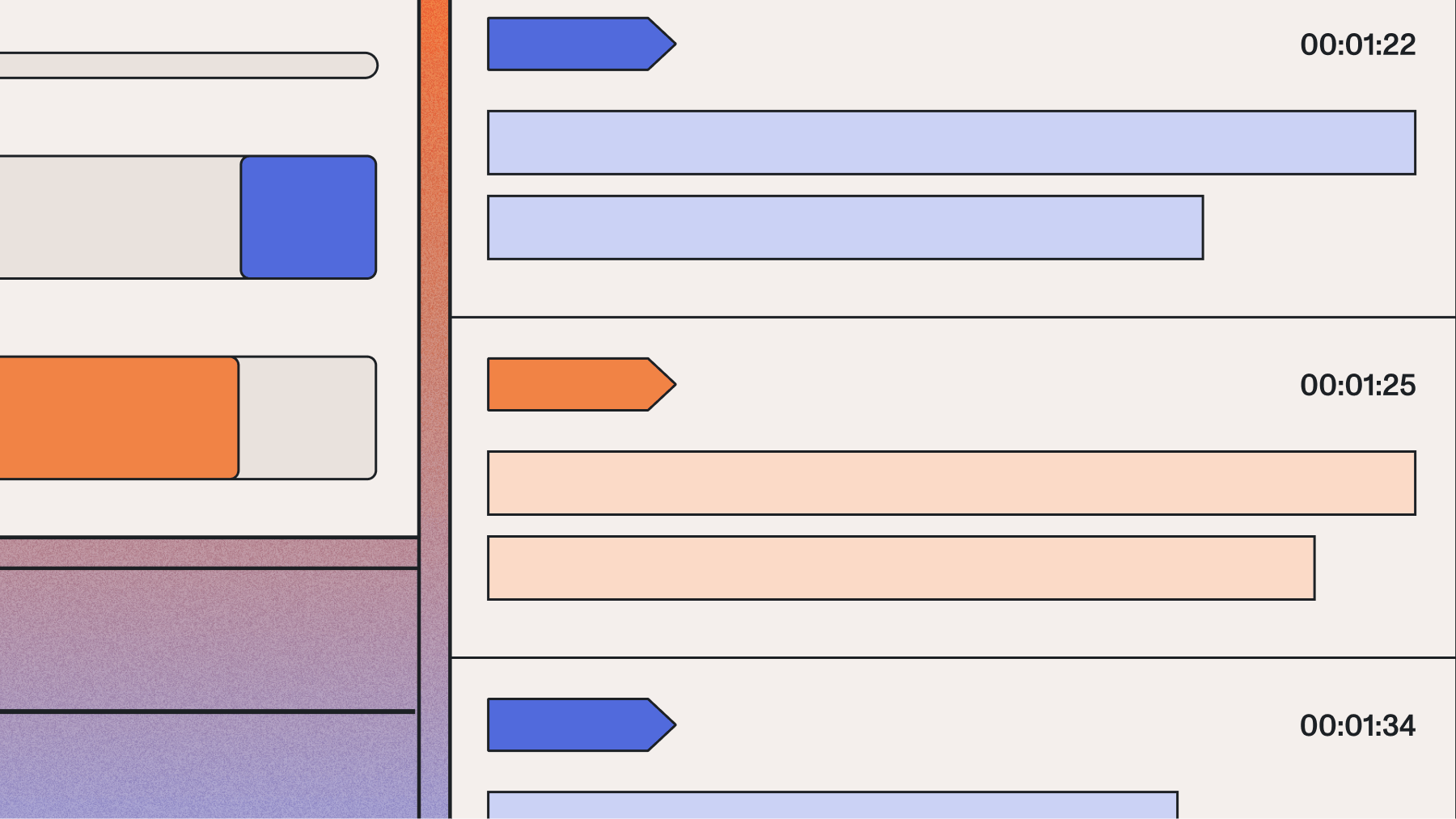

Some Text Summarization APIs return a single summary for a text, regardless of its length, while others break the summary down into shorter, time-stamped sections.

Say, for example, you wanted to summarize the 2021 State of the Union Address, a video that runs one hour and 43 minutes.

Using a Text Summarization API with time stamps like Auto Chapters, you might be able to generate the following summaries for key sections of the video:

- 1:45: I have the high privilege and distinct honor to present to you the President of the United States.
- 31:42: 90% of Americans now live within 5 miles of a vaccination site.
- 44:28: The American job plan is going to create millions of good paying jobs.
- 47:59: No one working 40 hours a week should live below the poverty line.
- 48:22: American jobs finally be the biggest increase in non defense research and development.
- 49:21: The National Institute of Health, the NIH, should create a similar advanced research Projects agency for Health.
- 50:31: It would have a singular purpose to develop breakthroughs to prevent, detect and treat diseases like Alzheimer's, diabetes and cancer.
- 51:29: I wanted to lay out before the Congress my plan.
- 52:19: When this nation made twelve years of public education universal in the last century, it made us the best educated, best prepared nation in the world.
- 54:25: The American Family's Plan guarantees four additional years of public education for every person in America, starting as early as we can.
- 57:08: American Family's Plan will provide access to quality, affordable childcare.
- 61:58: I will not impose any tax increase on people making less than $400,000.
- 67:34: He said the U.S. will become an Arsenal for vaccines for other countries.
- 74:12: After 20 years of value, Valor and sacrifice, it's time to bring those troops home.
- 76:01: We have to come together to heal the soul of this nation.
- 80:02: Gun violence has become an epidemic in America.
- 84:23: If you believe we need to secure the border, pass it.
- 85:00: Congress needs to pass legislation this year to finally secure protection for dreamers.
- 87:02: If we want to restore the soul of America, we need to protect the right to vote.

Additionally, other summarization models can condense long audio, video, or text inputs into more succinct summaries, such as the bullets, paragraph, headline, and gist examples below.

Bullets

- Josh Seiden and Brian Donohue discuss the topic of outcome versus output on Inside Intercom. Josh Seiden is a product consultant and author who has just released a book called Outcomes Over Output. Brian is product management director and he's looking forward to the chat.

- The main premise of the book is that by defining outcomes precisely, it's possible to apply this idea of outcomes in our work. It's in contrast to a really broad and undefined definition of the word "outcome".

- Paul, one of the design managers at Intercom, was struggling to differentiate between customer outcomes and business impact. In Lean Startup, teams focus on what they can change in their behavior to make their customers more satisfied. They focus on the business impact instead of on the customer outcomes. They have a hypothesis and they test their hypothesis with an experiment. They don't have to be 100% certain, but they need to have a hunch. There is a difference between problem-focused and outcome-focused approaches to building and prioritizing projects. For example, a company is working on improving the inbox search feature in their product. They hope it will improve user retention and improve the business impact of the change.

- Product teams need to focus on the outcome of their work rather than on the business impact of their product. They need to be more aware of the customer experience and their relationship with their business.

- As a business owner, you have to build a theory of how the business works. The more you know about your business as your business goes on, the more you can build a business model. The business model is reflected in roadmaps and prioritizations.

- Josh's book is available on Amazon, in print, in ebook and in audiobook on Audible.com. Brian's advice for teams looking to change their way of working is to start small and to use retrospectives and improve your process as you try to implement this. Josh and Brian enjoyed their conversation.

Paragraph

Josh Seiden and Brian Donohue discuss the topic of outcome versus output on Inside Intercom. Josh Seiden is a product consultant and author who has just released a book called Outcomes Over Output. Brian is product management director and he's looking forward to the chat.

Headline

Josh Seiden and Brian Donohue discuss the topic of outcomes versus output on Inside Intercom.

Gist

Outcomes over output

Types of text summarization

Text summarization systems fall into distinct categories based on three key factors:

Approach methodology

Extractive methods pull existing sentences vs. abstractive methods generate new text

Input source type

Single documents vs. multiple document synthesis

Output format

Headlines, paragraphs, bullet points, or ultra-brief gists

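Extractive vs. abstractive approaches

Extractive methods select and combine existing sentences from the source text, while abstractive methods generate new sentences that paraphrase its main ideas. Both approaches are explained in detail below.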

Categorization by input source

Summarization systems also differ based on what they're designed to process:

- Single-document summarization condenses one text at a time—perfect for summarizing individual articles, transcripts, or reports.

- Multi-document summarization synthesizes information from several related texts into a unified summary. This approach excels at creating overviews from multiple news articles or research papers on the same topic.

Categorization by output format

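Different use cases require different summary formats, and modern systems can generate multiple types:

- Headline: a single sentence capturing the main topic

- Paragraph: a short prose overview of the content

- Bullets: a list of the key points

- Gist: an ultra-brief summary of just a few words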

How does text summarization work?

A wide range of text summarization methods has been developed over the last several decades, so there is no single answer to how text summarization works. In fact, academic research shows that the study of automatic text summarization began as early as 1958. That said, these methods can be classified according to their general approaches to the problem.

Text summarization methods fall into two main categories: Extractive and Abstractive. Extractive methods extract the most pertinent information from a text, while abstractive methods generate novel text that summarizes the original. Although they are more complex, abstractive methods can produce much more flexible and arguably more faithful summaries, especially in the age of Large Language Models. However, this flexibility comes with risks: some research suggests that nearly 30% of summaries from abstractive systems may contain factual errors.

Extractive text summarization methods

As mentioned above, Extractive Text Summarization methods work by identifying and extracting the salient information in a text. The variety of Extractive methods therefore constitutes different ways of determining what information is important (and therefore should be extracted).

For example, frequency-based methods rank the sentences in a text by how frequently their words are used. For each sentence, each word in the vocabulary receives a weight, which is usually a function of the importance of the word itself and the frequency with which it appears throughout the document as a whole. These weights determine an importance score for each sentence, and the highest-scoring sentences are returned as the summary.
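
As an illustration, here is a minimal frequency-based extractive summarizer in Python. It is a sketch under simple assumptions, not a reference implementation: the tiny stopword list, the regex sentence splitter, and the choice to normalize counts by the most frequent word and then average over each sentence's words are all illustrative choices.

```python
import re
from collections import Counter

# A deliberately small, illustrative stopword list (an assumption, not a standard list).
STOPWORDS = {"a", "an", "and", "are", "as", "at", "be", "by", "for", "in",
             "is", "it", "of", "on", "or", "that", "the", "to", "was", "with"}


def split_sentences(text):
    """Naively split text into sentences on ., ! or ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s.strip()]


def content_words(sentence):
    """Lowercase the sentence and keep alphabetic tokens that are not stopwords."""
    return [w for w in re.findall(r"[a-z']+", sentence.lower()) if w not in STOPWORDS]


def frequency_summarize(text, num_sentences=2):
    """Return the num_sentences highest-scoring sentences, in their original order."""
    sentences = split_sentences(text)
    counts = Counter(w for s in sentences for w in content_words(s))
    if not counts:
        return sentences[:num_sentences]
    # Weight each word by its document frequency relative to the most frequent word.
    top = max(counts.values())
    weights = {w: c / top for w, c in counts.items()}

    def score(sentence):
        # Average the weights so long sentences are not favored just for being long.
        words = content_words(sentence)
        return sum(weights[w] for w in words) / len(words) if words else 0.0

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    return [sentences[i] for i in sorted(ranked[:num_sentences])]
```

Replacing the raw counts with TF-IDF weights is a common refinement, since it down-weights words that are frequent everywhere rather than characteristic of the document.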

Graph-based methods cast textual documents in the language of mathematical graphs. In this schema, each sentence is represented as a node, and nodes are connected if the sentences are deemed similar. What constitutes "similar" is, again, up to the specific algorithm; for example, one implementation might apply a threshold to the cosine similarity between TF-IDF vectors. In general, the sentences that are globally the "most similar" to all other sentences in the document (i.e., those with the highest centrality) are considered to carry the most summarizing information, and are therefore extracted into the summary. A notable example of a graph-based method is TextRank, an adaptation of Google's PageRank algorithm (originally used to rank web pages in Google Search) that ranks the most important sentences instead. Graph-based methods may benefit in the future from advances in Graph Neural Networks.
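
The sketch below shows the graph-based idea in Python, using the thresholded TF-IDF cosine-similarity variant mentioned above (closer to LexRank than to the original TextRank, which measured word overlap) and a PageRank-style power iteration. The 0.1 threshold, the 0.85 damping factor, and the smoothed IDF formula are illustrative assumptions.

```python
import math
import re
from collections import Counter


def split_sentences(text):
    """Naively split text into sentences on ., ! or ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s.strip()]


def tfidf_vectors(token_lists):
    """Build one sparse TF-IDF vector (a dict) per sentence, using a smoothed IDF."""
    n = len(token_lists)
    df = Counter(w for tokens in token_lists for w in set(tokens))
    return [{w: tf * (math.log((1 + n) / (1 + df[w])) + 1) for w, tf in Counter(tokens).items()}
            for tokens in token_lists]


def cosine(a, b):
    """Cosine similarity between two sparse vectors stored as dicts."""
    dot = sum(v * b.get(w, 0.0) for w, v in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def graph_summarize(text, num_sentences=2, threshold=0.1, damping=0.85, iterations=50):
    """Rank sentences by centrality in a similarity graph and return the top ones in order."""
    sentences = split_sentences(text)
    n = len(sentences)
    if n <= num_sentences:
        return sentences
    vectors = tfidf_vectors([re.findall(r"[a-z']+", s.lower()) for s in sentences])
    # Undirected graph: connect two sentences when their similarity exceeds the threshold.
    neighbors = [[] for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            if cosine(vectors[i], vectors[j]) > threshold:
                neighbors[i].append(j)
                neighbors[j].append(i)
    # PageRank-style power iteration: each node shares its score equally among its neighbors.
    scores = [1.0 / n] * n
    for _ in range(iterations):
        scores = [(1 - damping) / n
                  + damping * sum(scores[j] / len(neighbors[j]) for j in neighbors[i])
                  for i in range(n)]
    top = sorted(range(n), key=lambda i: scores[i], reverse=True)[:num_sentences]
    return [sentences[i] for i in sorted(top)]
```

A sentence that shares no vocabulary with the rest of the document ends up with no edges and only the baseline score, which is exactly the "least central" behavior the method relies on.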

Abstractive text summarization methods

Abstractive methods seek to generate a novel summary that appropriately captures the information within a text. While there are linguistic approaches to Abstractive Text Summarization, AI models that cast summarization as a sequence-to-sequence (seq2seq) problem have proven extremely powerful over the past several years, with research first applying deep learning to the task dating back to 2015. Because the Transformer was designed for exactly this kind of seq2seq problem, its invention has had a profound impact on Abstractive Text Summarization, as it has on so many other areas.

More recently, Large Language Models (LLMs) in particular have been applied to text summarization. The observation of Emergent Abilities in LLMs has shown that they are capable across a wide variety of tasks, summarization included. That is, while LLMs are not trained directly for summarization, they become competent general Generative AI models as they scale, which gives them the ability to summarize along with many other tasks.

Summarization-specific LLM approaches have also been explored, combining pre-trained LLMs with Reinforcement Learning from Human Feedback (RLHF), the core technique behind the evolution of GPT into ChatGPT. This schema follows the canonical RLHF training approach: human feedback is used to train a reward model, which is then used to update an RL policy via Proximal Policy Optimization (PPO). In short, RLHF produces an improved, more easily prompted model whose output is tailored to human expectations (in this case, human expectations of what a "good" summary is).
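
To make the two RLHF stages concrete, here is a common formulation, sketched rather than taken from any particular system. A reward model $r_\theta$ is trained on pairs of summaries of the same input $x$, where $y_w$ is the summary a human labeler preferred and $y_l$ the one they rejected, and $\sigma$ is the logistic function:

$$\mathcal{L}(\theta) = -\,\mathbb{E}_{(x,\,y_w,\,y_l)}\left[\log \sigma\big(r_\theta(x, y_w) - r_\theta(x, y_l)\big)\right]$$

PPO then updates the summarization policy $\pi_\phi$ to maximize the learned reward minus a KL penalty, weighted by $\beta$, that keeps it close to the initial supervised model $\pi^{\mathrm{SFT}}$:

$$R(x, y) = r_\theta(x, y) - \beta \log \frac{\pi_\phi(y \mid x)}{\pi^{\mathrm{SFT}}(y \mid x)}$$

The penalty term discourages the policy from drifting toward degenerate summaries that exploit weaknesses in the reward model.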

Text summarization remains an active area of research, and there are natural extensions to explore in light of the work already done. For example, we might consider Reinforcement Learning from AI Feedback (RLAIF) instead of RLHF, as recent research demonstrates that RLAIF can achieve performance comparable to RLHF on tasks that include summarization.

Key terminology

Extractive summarization

Identifies and extracts the most important sentences from source text

Abstractive summarization

Generates novel sentences that capture the essence of source material

ROUGE metrics

Measures n-gram (word and word-sequence) overlap between generated and reference summaries

LLM gateway

Framework for applying Large Language Models to transcript data

Text summarization evaluation methods

Summary quality evaluation uses both automated metrics and human judgment.

Key evaluation metrics:

- ROUGE (Recall-Oriented Understudy for Gisting Evaluation): Measures n-gram overlap between generated and reference summaries, emphasizing recall (how much of the reference the summary covers)

- BLEU (Bilingual Evaluation Understudy): Measures n-gram precision against human-written references, with a penalty for overly short outputs

- Human evaluation: The ultimate test of coherence and accuracy
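
To show what each automated metric actually counts, the sketch below computes ROUGE-N and a sentence-level BLEU score from scratch in Python against a single reference. It assumes whitespace tokenization, lowercasing, and no smoothing, so its numbers will not exactly match official scoring packages.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Count the n-grams (as tuples) in a list of tokens."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def rouge_n(candidate, reference, n=1):
    """ROUGE-N precision, recall, and F1 against a single reference summary."""
    cand = ngrams(candidate.lower().split(), n)
    ref = ngrams(reference.lower().split(), n)
    overlap = sum((cand & ref).values())  # Counter & keeps the minimum count per n-gram
    recall = overlap / sum(ref.values()) if ref else 0.0
    precision = overlap / sum(cand.values()) if cand else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


def bleu(candidate, reference, max_n=4):
    """Unsmoothed BLEU: geometric mean of clipped n-gram precisions times a brevity penalty."""
    cand_tokens = candidate.lower().split()
    ref_tokens = reference.lower().split()
    if not cand_tokens:
        return 0.0
    log_precision_sum = 0.0
    for n in range(1, max_n + 1):
        cand = ngrams(cand_tokens, n)
        matches = sum((cand & ngrams(ref_tokens, n)).values())
        if matches == 0:
            return 0.0  # without smoothing, any zero precision makes BLEU zero
        log_precision_sum += math.log(matches / sum(cand.values()))
    # Penalize candidates that are shorter than the reference.
    c, r = len(cand_tokens), len(ref_tokens)
    brevity_penalty = 1.0 if c > r else math.exp(1 - r / c)
    return brevity_penalty * math.exp(log_precision_sum / max_n)
```

For example, `rouge_n("the cat sat", "the cat sat on the mat")` gives a precision of 1.0 but a recall of 0.5: every candidate word appears in the reference, yet the candidate covers only half of it. Neither number says anything about whether the summary is coherent, which is why human review still matters.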

These automated metrics make it possible to benchmark systems but don't fully capture semantic meaning. A recent scoping review of LLM studies found that over half used a hybrid approach, and the most reliable evaluations combine automated scores with human review.

Real-world applications and use cases

Text summarization powers critical workflows across industries, transforming voice and text data into actionable insights.

Key implementation benefits:

- Scalable analysis — Process 100% of conversations vs. random sampling

- Real-time insights — Immediate alerts on trends and issues

- Cross-functional value — Shared intelligence across departments

Media and content creation

Video editing platforms and podcasting tools use summarization to automatically generate chapters, show notes, and descriptions. This makes content more discoverable and engaging while saving creators hours of manual work. Platforms can automatically create timestamped summaries that viewers use to jump to relevant sections, improving engagement and watch time.

Meeting and call analysis

Companies use summarization to create concise meeting recaps and call summaries, eliminating the need for manual note-taking. These systems capture key decisions, action items, and discussion points automatically. Teams no longer miss critical information from meetings they couldn't attend, and follow-up becomes more efficient when everyone has access to accurate summaries.

Conversation intelligence

Revenue and contact center platforms summarize sales and support calls to identify key moments and track performance. Supervisors can quickly review dozens of calls by reading summaries instead of listening to hours of recordings. The technology flags important moments like competitor mentions, pricing discussions, or customer objections for deeper review.

Document processing

Law firms, research organizations, and financial institutions use summarization to speed up document review, a use case reflected in specialized research datasets like BillSum, which contains over 23,000 U.S. Congressional bills and their summaries. Instead of reading hundreds of pages, analysts can quickly understand the key points of contracts, research papers, or regulatory filings. This accelerates decision-making and ensures important details aren't overlooked in large document sets.

Healthcare documentation

Medical practices use summarization to transform lengthy patient encounters into concise clinical notes. Doctors can focus on patient care while AI handles documentation, reducing administrative burden and improving accuracy. For instance, one case study involving a behavioral health AI scribe saw a 90% reduction in documentation time for clinicians. Summarization also helps with patient handoffs, ensuring critical information is communicated clearly between care teams.

Choosing the right text summarization approach

Choosing the right summarization approach depends on your specific requirements. When evaluating vendors, research on AI integration shows that product teams prioritize cost (64%), quality and performance (58%), and accuracy (47%) above other factors.

- Extractive summarization works best for:
  - Content that demands high factual accuracy (legal documents, compliance)
  - Quick identification of key passages
  - Cases requiring traceability to the source text

- Abstractive summarization excels when:
  - Natural, readable summaries are a priority
  - The input is conversational (meetings, calls)
  - You need novel content formats (headlines, social posts)

For many product teams, the flexibility of abstractive methods is worth the added complexity, especially with the power of modern AI models.

Best APIs for text summarization

Now that we've discussed what Text Summarization for NLP is and how it works, we'll compare some of the best Text Summarization APIs, AI summarizers, and AI Summarization models available today. Note that some of these APIs support Text Summarization for pre-existing bodies of text, like a research paper, while others perform Text Summarization on top of audio or video stream transcriptions, like from a podcast or virtual meeting.

Quick comparison of top text summarization APIs:

1. AssemblyAI's summarization models

AssemblyAI is a Voice AI company building new AI systems that can understand and process human speech. The company's AI models for Summarization achieve state-of-the-art results on audio and video. AssemblyAI also offers Summarization models tuned for specific use cases: informative, conversational, and catchy. Summaries can be returned as bullets, gist, paragraph, or headline (see the examples above).
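
As a rough sketch of what this looks like in code, the standard-library Python snippet below submits an audio file for transcription with summarization enabled and polls until the summary is ready. It assumes AssemblyAI's v2 REST transcript endpoint and its `summarization`, `summary_model`, and `summary_type` request fields as documented at the time of writing; these may have changed, not every model/type combination is necessarily supported, and the current API reference should be checked before relying on them.

```python
import json
import time
import urllib.request

# Assumption: AssemblyAI's v2 REST base URL and field names; verify against current docs.
API_BASE = "https://api.assemblyai.com/v2"
SUMMARY_MODELS = {"informative", "conversational", "catchy"}
SUMMARY_TYPES = {"bullets", "paragraph", "headline", "gist"}


def build_summary_request(audio_url, summary_model="informative", summary_type="bullets"):
    """Build the JSON body for a transcription request with summarization turned on."""
    if summary_model not in SUMMARY_MODELS:
        raise ValueError(f"unknown summary_model: {summary_model}")
    if summary_type not in SUMMARY_TYPES:
        raise ValueError(f"unknown summary_type: {summary_type}")
    return {
        "audio_url": audio_url,
        "summarization": True,
        "summary_model": summary_model,
        "summary_type": summary_type,
    }


def _request_json(url, api_key, payload=None):
    """POST a JSON payload (or GET when payload is None) and decode the JSON response."""
    data = json.dumps(payload).encode("utf-8") if payload is not None else None
    req = urllib.request.Request(url, data=data, headers={
        "authorization": api_key,
        "content-type": "application/json",
    })
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)


def summarize_audio(audio_url, api_key, poll_seconds=3.0, **options):
    """Submit a transcription job, then poll until it completes and return its summary."""
    job = _request_json(f"{API_BASE}/transcript", api_key,
                        build_summary_request(audio_url, **options))
    while True:
        result = _request_json(f"{API_BASE}/transcript/{job['id']}", api_key)
        if result["status"] == "completed":
            return result.get("summary")
        if result["status"] == "error":
            raise RuntimeError(result.get("error", "transcription failed"))
        time.sleep(poll_seconds)
```

A call such as `summarize_audio("https://example.com/episode.mp3", api_key="YOUR_API_KEY", summary_type="gist")` would return the gist once processing finishes; the URL and key are placeholders. Validating options locally in `build_summary_request` fails fast on typos instead of waiting for a remote error.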

For maximum flexibility, LLM gateway (AssemblyAI's framework for Large Language Models) is the recommended way for product teams to generate summaries in any custom format by prompting an LLM with the transcript data.

In addition, AssemblyAI offers the Auto Chapters feature, which segments audio into chapters and provides a time-stamped one-paragraph summary and single-sentence headline for each.
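
Chapter output maps naturally onto the time-stamped list shown earlier. The helper below is a sketch that assumes each chapter arrives as a dict with a `start` offset in milliseconds plus text fields such as `headline`, `gist`, and `summary`, which is how AssemblyAI's v2 API has described its chapter results; adjust the field names if your response differs.

```python
def format_timestamp(ms):
    """Format a millisecond offset as M:SS, letting minutes exceed 59 (e.g. 87:02)."""
    minutes, seconds = divmod(int(ms) // 1000, 60)
    return f"{minutes}:{seconds:02d}"


def format_chapters(chapters, field="headline"):
    """Render each chapter as 'M:SS: text' using the chosen text field."""
    return [f"{format_timestamp(ch['start'])}: {ch[field]}" for ch in chapters]
```

Keeping minutes unbounded rather than switching to H:MM:SS matches the "87:02" style used in the State of the Union example.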

AssemblyAI's AI models are used by top product teams in podcasts, telephony, virtual meeting platforms, conversational intelligence AI platforms, and more. The company's highly accurate transcription models, like Universal, are trained on millions of hours of audio data, which makes summaries generated from its transcriptions even more accurate and useful.

2. Microsoft Azure text summarization

As part of its Azure AI Language service, Azure's Text Summarization API offers extractive summarization for articles, papers, or documents. Requirements to get started include an Azure subscription and the Visual Studio IDE. Pricing is pay-as-you-go and varies depending on usage and other desired features.

3. NLP Cloud summarization API

NLP Cloud offers several text understanding and NLP APIs, including Text Summarization, and supports fine-tuning and deploying community AI models to further boost accuracy. Developers can also build their own custom models, then train and deploy them into production. Pricing ranges from $0 to $499 per month, depending on usage.

Implementation considerations for production systems

Production deployment requires planning around three critical factors: input quality, user experience, and scale.

Success depends on addressing technical constraints upfront to prevent costly redesigns later.

Input quality and preprocessing

The quality of your summary is directly tied to the quality of your input text. As one industry report puts it, "If the words are wrong, the outcomes are too." For audio and video content, this means starting with a highly accurate transcript. Transcription errors cascade through the summarization pipeline: if your speech-to-text model mishears "quarterly revenue" as "courtly revenue," your summary becomes nonsensical.

Consider preprocessing requirements for your specific content type. Documents may need formatting cleanup, while conversation transcripts might require speaker diarization to maintain context. Some content benefits from noise reduction or normalization before processing.
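
A minimal preprocessing sketch for diarized transcripts follows. It assumes a hypothetical input shape, a list of dicts with `speaker` and `text` keys, applies two illustrative cleanup rules (stripping stray HTML tags and collapsing whitespace), and merges consecutive turns by the same speaker so the summarizer can still tell who said what.

```python
import re


def clean_text(text):
    """Replace leftover HTML tags with spaces and collapse runs of whitespace."""
    text = re.sub(r"<[^>]+>", " ", text)
    return re.sub(r"\s+", " ", text).strip()


def format_diarized_transcript(utterances):
    """Render utterances as 'Speaker X: text' lines, merging consecutive same-speaker turns."""
    turns = []  # list of (speaker, text) pairs
    for utt in utterances:
        speaker, text = utt["speaker"], clean_text(utt["text"])
        if not text:
            continue  # drop empty utterances left over after cleanup
        if turns and turns[-1][0] == speaker:
            turns[-1] = (speaker, turns[-1][1] + " " + text)
        else:
            turns.append((speaker, text))
    return "\n".join(f"Speaker {s}: {t}" for s, t in turns)
```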

Latency and user experience design

Different applications have different speed requirements. Real-time applications like live meeting assistants need summaries within seconds, while batch processing for document archives can run overnight. Your choice impacts both technical architecture and user interface design.

Think about the user journey: Does your application need real-time partial summaries as content streams in, or can users wait for complete processing? How will you handle long documents that take time to process? Consider implementing progress indicators and partial results to maintain engagement during processing.
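
One common way to handle long inputs while showing progress is to split the text into overlapping chunks, summarize each chunk as it completes, and then summarize the combined partial summaries (a map-reduce pattern). The sketch below assumes word-based chunk sizes and takes the summarizer as a plain function; `summarize` is a hypothetical placeholder for whatever model or API you call.

```python
def chunk_words(text, max_words=300, overlap=30):
    """Split text into chunks of at most max_words words, repeating `overlap` words between chunks."""
    if max_words <= overlap:
        raise ValueError("max_words must be larger than overlap")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # this chunk already reaches the end of the text
    return chunks


def progressive_summaries(text, summarize, max_words=300, overlap=30):
    """Yield (chunks_done, total_chunks, partial_summary) so a UI can show progress."""
    chunks = chunk_words(text, max_words, overlap)
    for done, chunk in enumerate(chunks, start=1):
        yield done, len(chunks), summarize(chunk)


def summarize_long(text, summarize, **chunk_options):
    """Map-reduce: summarize each chunk, then summarize the joined partial summaries."""
    partials = [p for _, _, p in progressive_summaries(text, summarize, **chunk_options)]
    return summarize(" ".join(partials)) if partials else ""
```

Because `progressive_summaries` is a generator, a UI can render each partial summary and a "chunk 2 of 5" indicator as soon as it arrives, instead of waiting for the final pass.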

Scale and infrastructure planning

Production systems must handle variable loads gracefully. Your summarization workflow should scale from processing a few documents to thousands without failing. This means planning for:

- Peak load handling during high-traffic periods

- Queue management for batch processing

- Fallback strategies when services are unavailable

- Cost optimization at different usage tiers
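
For the fallback point in particular, a small wrapper like the sketch below retries a summarization call with exponential backoff and random jitter, then switches to a fallback (for example, a cheaper local extractive summarizer) if the service stays unavailable. `primary` and `fallback` are hypothetical zero-argument callables, and the retry count and delays are illustrative defaults.

```python
import random
import time


def call_with_fallback(primary, fallback=None, retries=3, base_delay=0.5, sleep=time.sleep):
    """Call primary() with exponential backoff; if every attempt fails, use fallback() if given."""
    if retries < 1:
        raise ValueError("retries must be at least 1")
    last_error = None
    for attempt in range(retries):
        try:
            return primary()
        except Exception as exc:  # in production, catch only errors you know are transient
            last_error = exc
            if attempt < retries - 1:
                # Double the wait after each failure and add jitter to avoid synchronized retries.
                sleep(base_delay * (2 ** attempt) * (1 + random.random()))
    if fallback is not None:
        return fallback()
    raise last_error
```

Passing `sleep` as a parameter keeps the wrapper easy to test without real delays.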

Using scalable API infrastructure lets you focus on your application logic rather than managing AI model deployment and scaling challenges.

Output customization and formatting

Production applications often need summaries in specific formats that match their use cases. A customer service platform might need bullet points with timestamps, while a research tool requires paragraph summaries with source citations. Consider how you'll:

- Customize summary length based on content type

- Maintain consistent formatting across different inputs

- Handle edge cases like very short or very long content

- Integrate summaries into existing workflows and UIs
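
As a starting point for the length and edge-case questions above, the sketch below picks a summary format from the input's word count: very short inputs pass through unchanged, and longer ones get a bullet count proportional to their length, clamped to a fixed range. All thresholds are hypothetical defaults to tune for your own content.

```python
def plan_summary(text, short_threshold=50, words_per_bullet=400, min_bullets=1, max_bullets=8):
    """Choose a summary format and bullet count from the input length (illustrative policy)."""
    n_words = len(text.split())
    if n_words < short_threshold:
        # Too short to be worth summarizing: show the original text instead.
        return {"format": "passthrough", "bullets": 0}
    bullets = round(n_words / words_per_bullet)
    return {"format": "bullets", "bullets": max(min_bullets, min(max_bullets, bullets))}
```

Clamping keeps very long inputs from producing an unreadable wall of bullets while guaranteeing at least one bullet for mid-length content.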

Text summarization tutorials

Want to try Text Summarization yourself? This video tutorial walks you through how to apply Text Summarization to podcasts.

Getting started with text summarization

Text summarization transforms how we process information from documents, audio, and video files. It reveals insights that would otherwise stay buried in raw data.

Getting started doesn't require deep NLP expertise. Production-ready APIs handle the complexity, letting you focus on your application logic. You can try our API for free to experiment with summarization on your own files and start building immediately.

Additional Resources:

- How to use AI to automatically summarize meeting transcripts

- 3 easy ways to add AI Summarization to Conversation Intelligence tools

- How to use Speech to Text AI for Ad Targeting & Brand Protection

- How to use Voice AI systems for podcast hosting, editing, and monetization

- Build standout call coaching features with AI Summarization

Frequently asked questions about text summarization

How does extractive text summarization differ from abstractive summarization in practice?

Extractive summarization selects and combines existing sentences from the original text, like highlighting key passages. Abstractive summarization generates entirely new text that paraphrases the main ideas in different words.

What are common misconceptions about text summarization accuracy?

A common misconception is that summaries are always factually perfect. Abstractive summaries can generate plausible but incorrect information (hallucination), with research showing nearly 30% of AI-generated summaries don't match source facts.

When should you use text summarization vs other NLP techniques?

Use text summarization to reduce document length while preserving meaning. Choose sentiment analysis for emotion detection or named entity recognition for extracting specific data points.

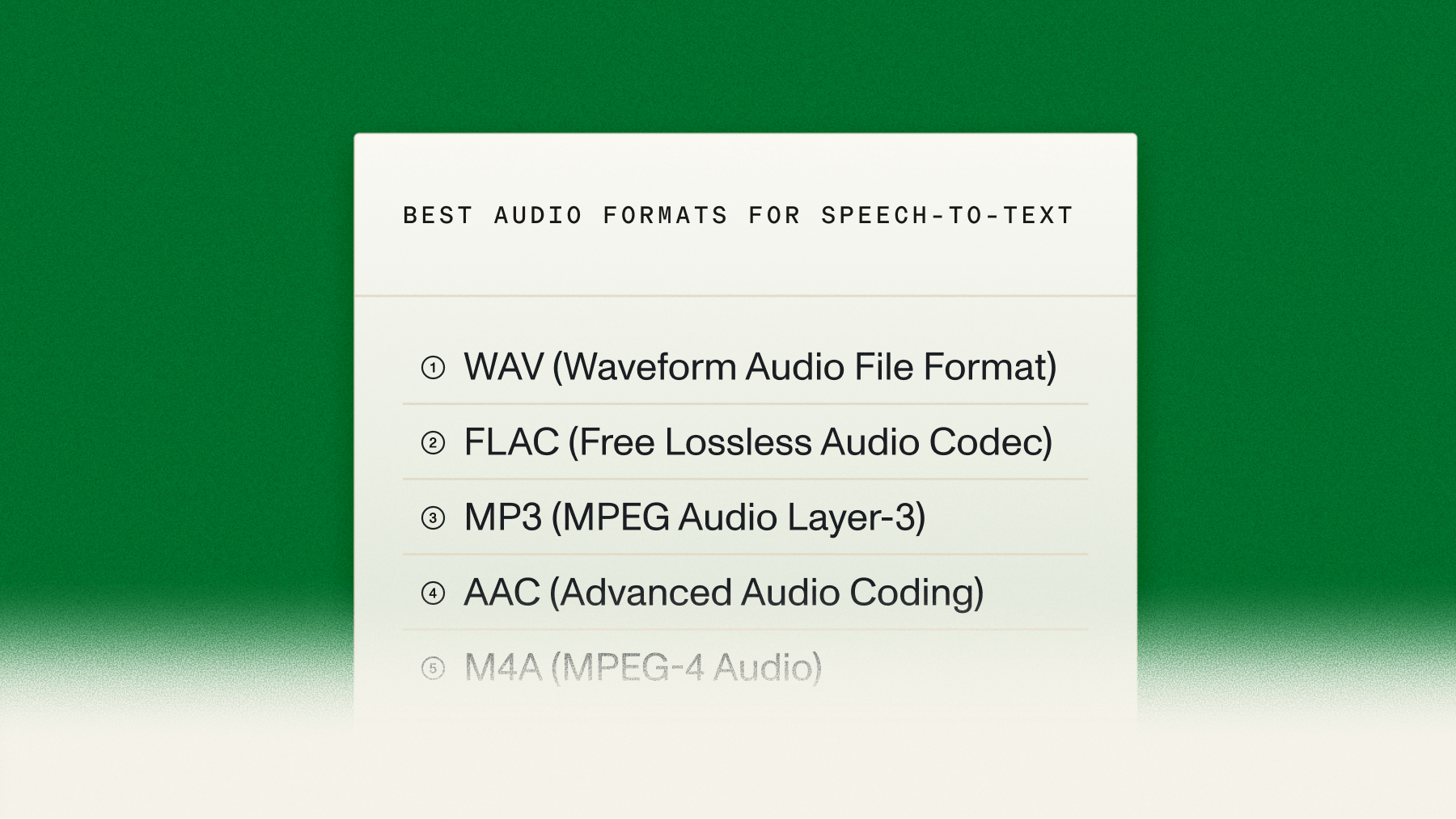

How does text summarization relate to speech-to-text and audio processing?

Text summarization is a critical layer on top of speech-to-text. First, a speech-to-text model transcribes audio into a written transcript, then a text summarization model processes that transcript to create a concise summary.

What prerequisites are needed to implement text summarization effectively?

Clean input text is essential—transcription errors from poor speech-to-text models will cascade into unreliable summaries.
